healthyverse_tsa

Time Series Analysis, Modeling and Forecasting of the Healthyverse Packages

Steven P. Sanderson II, MPH - Date: 2026-04-15

Introduction

This analysis follows the Nested Modeltime Workflow from modeltime and also uses the NNS package. I use it to monitor the downloads of all of my packages:

Get Data

glimpse(downloads_tbl)

Rows: 175,071

Columns: 11

$ date <date> 2020-11-23, 2020-11-23, 2020-11-23, 2020-11-23, 2020-11-23,…

$ time <Period> 15H 36M 55S, 11H 26M 39S, 23H 34M 44S, 18H 39M 32S, 9H 0M…

$ date_time <dttm> 2020-11-23 15:36:55, 2020-11-23 11:26:39, 2020-11-23 23:34:…

$ size <int> 4858294, 4858294, 4858301, 4858295, 361, 4863722, 4864794, 4…

$ r_version <chr> NA, "4.0.3", "3.5.3", "3.5.2", NA, NA, NA, NA, NA, NA, NA, N…

$ r_arch <chr> NA, "x86_64", "x86_64", "x86_64", NA, NA, NA, NA, NA, NA, NA…

$ r_os <chr> NA, "mingw32", "mingw32", "linux-gnu", NA, NA, NA, NA, NA, N…

$ package <chr> "healthyR.data", "healthyR.data", "healthyR.data", "healthyR…

$ version <chr> "1.0.0", "1.0.0", "1.0.0", "1.0.0", "1.0.0", "1.0.0", "1.0.0…

$ country <chr> "US", "US", "US", "GB", "US", "US", "DE", "HK", "JP", "US", …

$ ip_id <int> 2069, 2804, 78827, 27595, 90474, 90474, 42435, 74, 7655, 638…

The last day in the data set is 2026-04-13 23:57:41, the file was created on 2025-10-31 10:47:59.603742, and at report knit time it is 3945.16 hours old. Happy analyzing!

Now that we have our data, let's take a look at it using the skimr package.

skim(downloads_tbl)

| Name | downloads_tbl |

| Number of rows | 175071 |

| Number of columns | 11 |

| _______________________ | |

| Column type frequency: | |

| character | 6 |

| Date | 1 |

| numeric | 2 |

| POSIXct | 1 |

| Timespan | 1 |

| ________________________ | |

| Group variables | None |

Data summary

Variable type: character

| skim_variable | n_missing | complete_rate | min | max | empty | n_unique | whitespace |

|---|---|---|---|---|---|---|---|

| r_version | 130091 | 0.26 | 5 | 7 | 0 | 51 | 0 |

| r_arch | 130091 | 0.26 | 1 | 7 | 0 | 6 | 0 |

| r_os | 130091 | 0.26 | 7 | 19 | 0 | 24 | 0 |

| package | 0 | 1.00 | 7 | 13 | 0 | 8 | 0 |

| version | 0 | 1.00 | 5 | 17 | 0 | 63 | 0 |

| country | 16222 | 0.91 | 2 | 2 | 0 | 167 | 0 |

Variable type: Date

| skim_variable | n_missing | complete_rate | min | max | median | n_unique |

|---|---|---|---|---|---|---|

| date | 0 | 1 | 2020-11-23 | 2026-04-13 | 2024-01-12 | 1961 |

Variable type: numeric

| skim_variable | n_missing | complete_rate | mean | sd | p0 | p25 | p50 | p75 | p100 | hist |

|---|---|---|---|---|---|---|---|---|---|---|

| size | 0 | 1 | 1127330.2 | 1477623.59 | 355 | 43539 | 325161 | 2333727 | 5677952 | ▇▁▂▁▁ |

| ip_id | 0 | 1 | 11450.1 | 22848.72 | 1 | 192 | 2741 | 11717 | 299146 | ▇▁▁▁▁ |

Variable type: POSIXct

| skim_variable | n_missing | complete_rate | min | max | median | n_unique |

|---|---|---|---|---|---|---|

| date_time | 0 | 1 | 2020-11-23 09:00:41 | 2026-04-13 23:57:41 | 2024-01-12 19:44:05 | 111412 |

Variable type: Timespan

| skim_variable | n_missing | complete_rate | min | max | median | n_unique |

|---|---|---|---|---|---|---|

| time | 0 | 1 | 0 | 59 | 12H 8M 44S | 60 |

The following columns are each missing about 74% of their values (complete rate 0.26) and are unlikely to be useful for this analysis, so we will drop them:

c(r_version, r_arch, r_os)
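As a minimal sketch of this step, assuming dplyr is loaded: the complete rate reported by skimr is the share of non-missing rows, and the sparse columns can be dropped with `select()`. The tibble `toy_tbl` and its rows are illustrative stand-ins, not the report's data.

```r
library(dplyr)

# Complete rate for r_version, from the counts reported by skim():
# 130091 of 175071 rows are missing
complete_rate <- 1 - 130091 / 175071
round(complete_rate, 2)

# Toy stand-in for downloads_tbl; only the column names come from the report
toy_tbl <- tibble(
  date      = as.Date("2020-11-23") + 0:2,
  package   = c("healthyR.data", "healthyR", "healthyR.data"),
  r_version = c(NA, "4.0.3", NA),
  r_arch    = c(NA, "x86_64", NA),
  r_os      = c(NA, "mingw32", NA)
)

# Drop the three mostly-missing columns
toy_clean <- toy_tbl |>
  select(-c(r_version, r_arch, r_os))

names(toy_clean)
```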

Plots

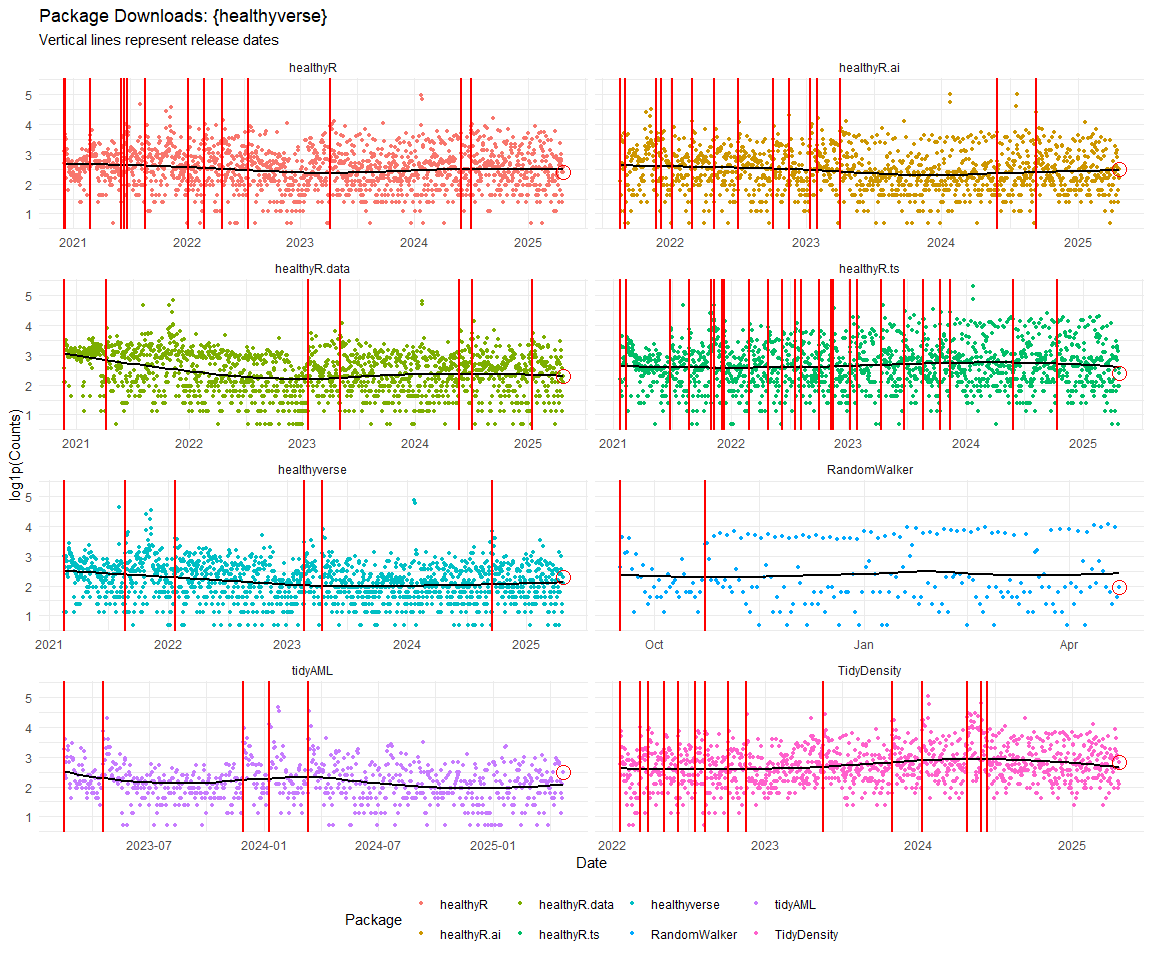

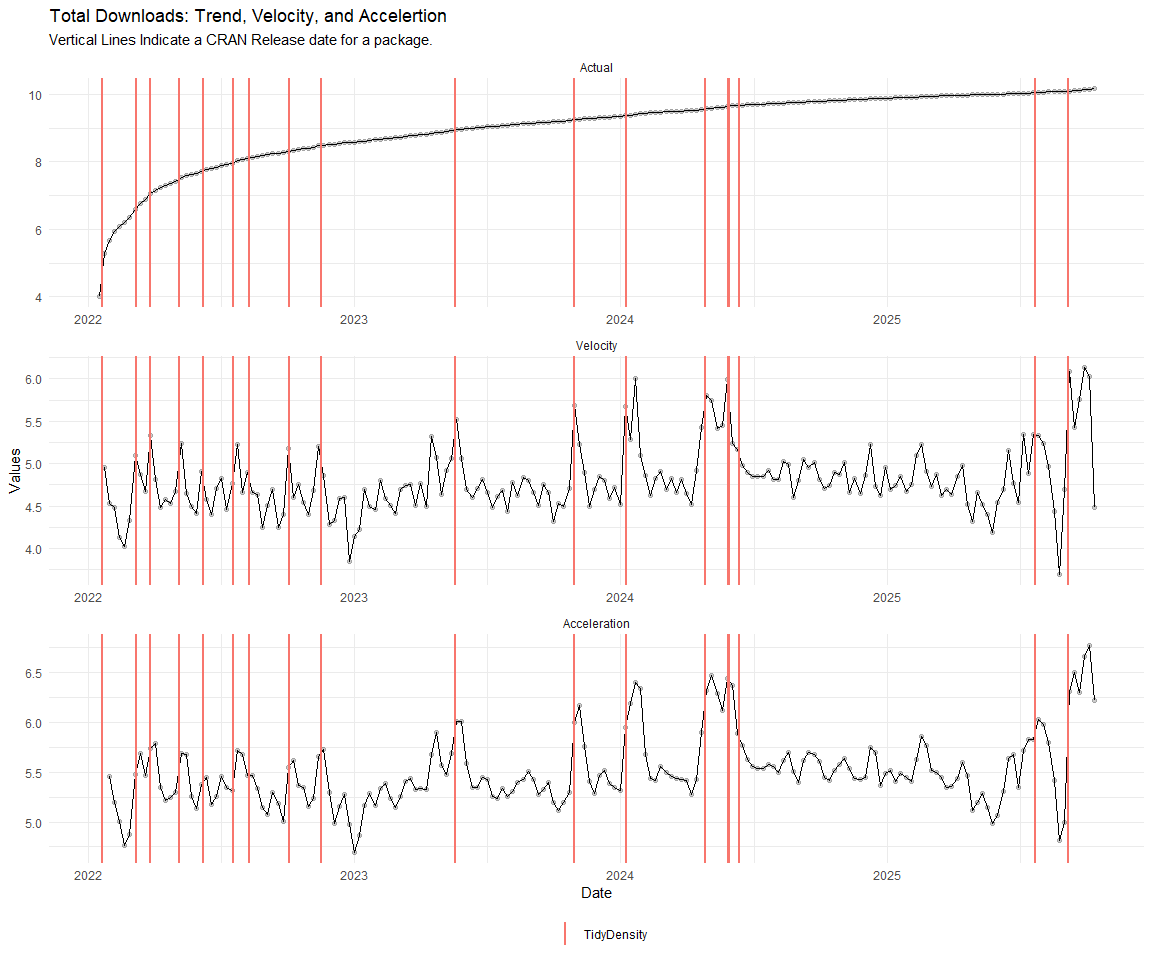

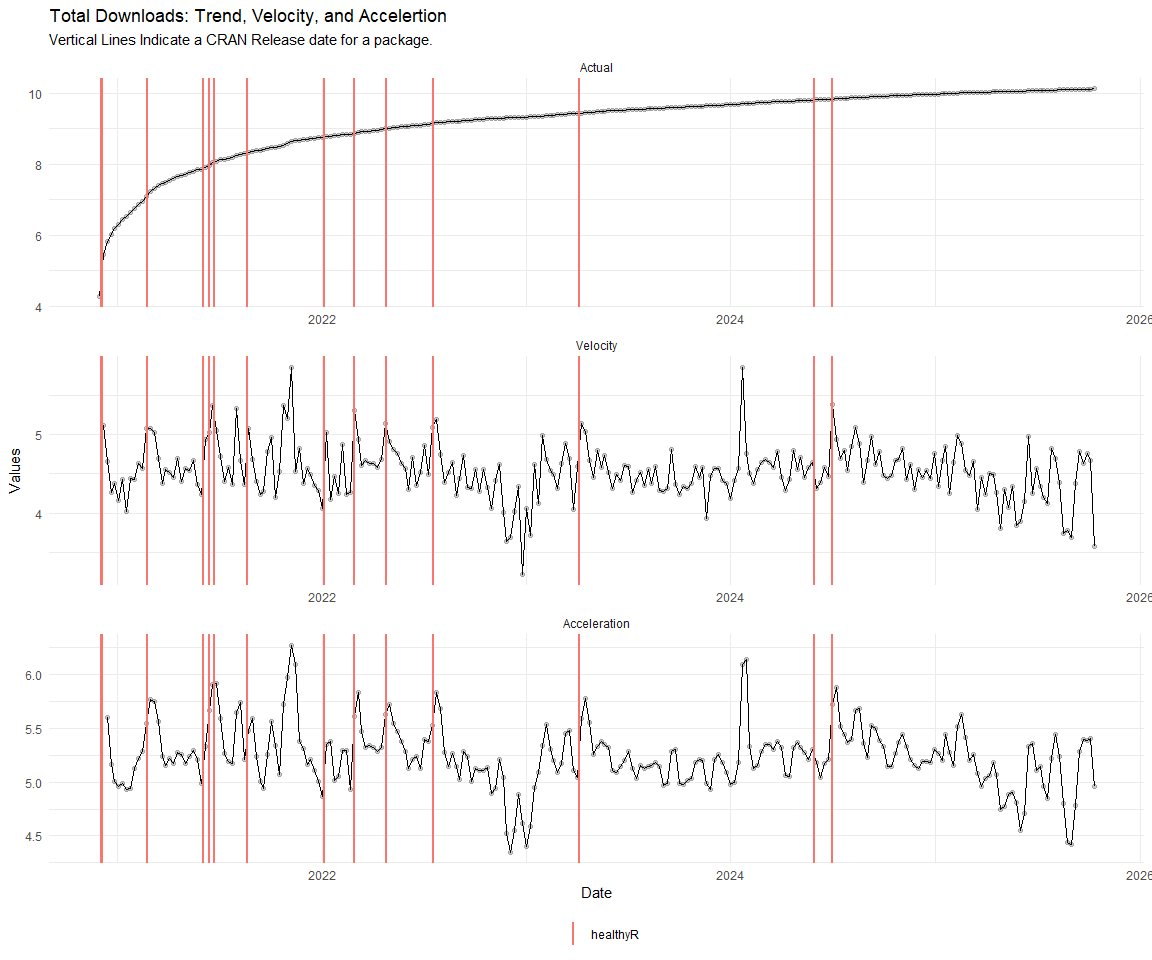

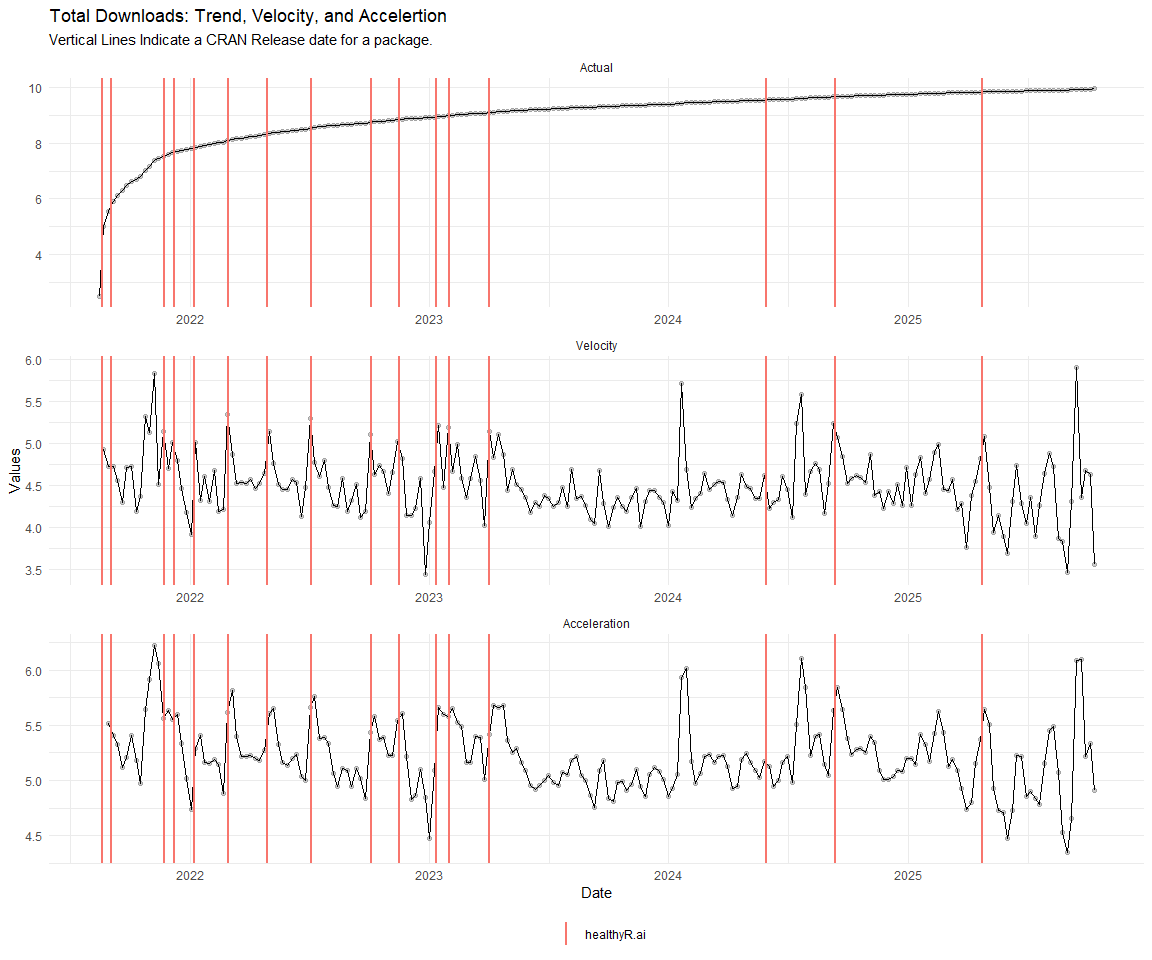

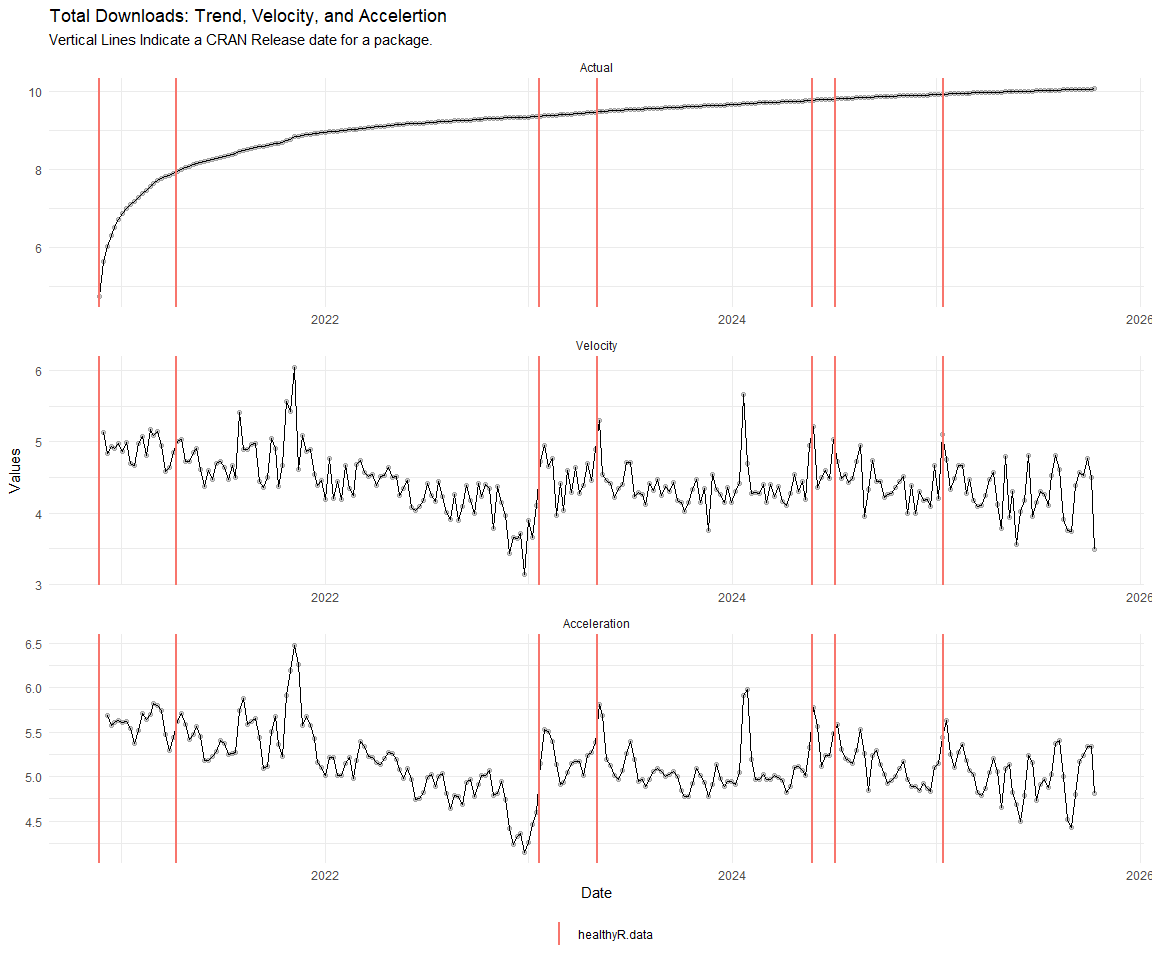

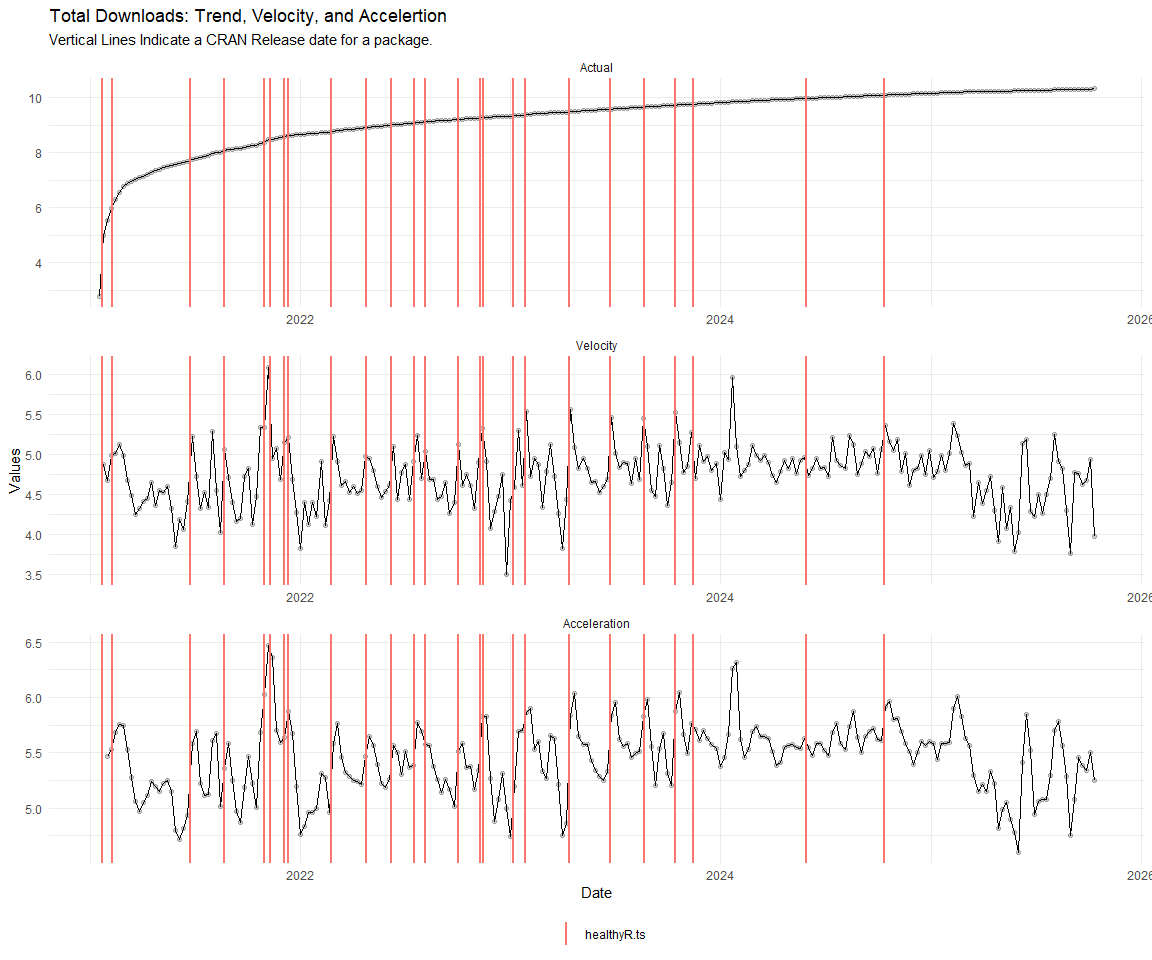

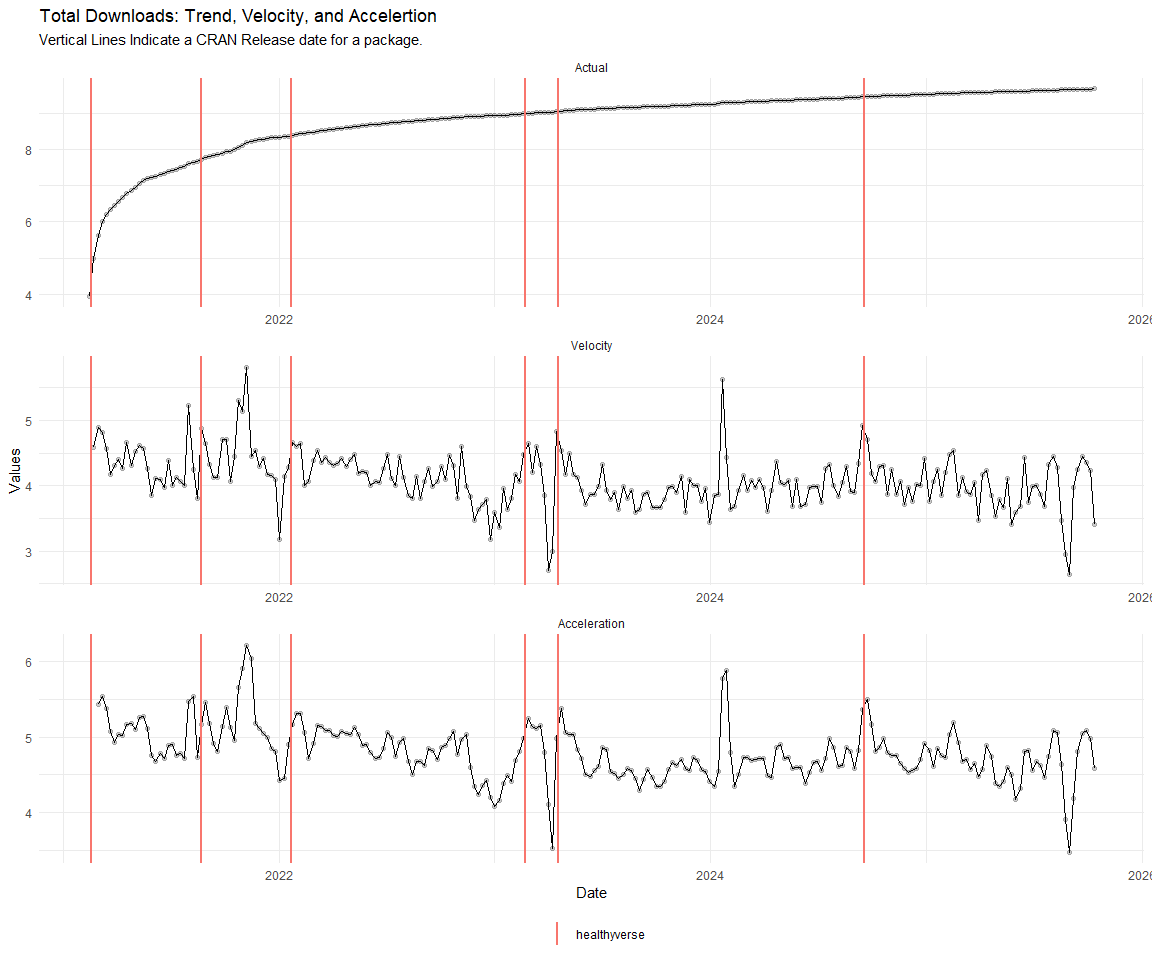

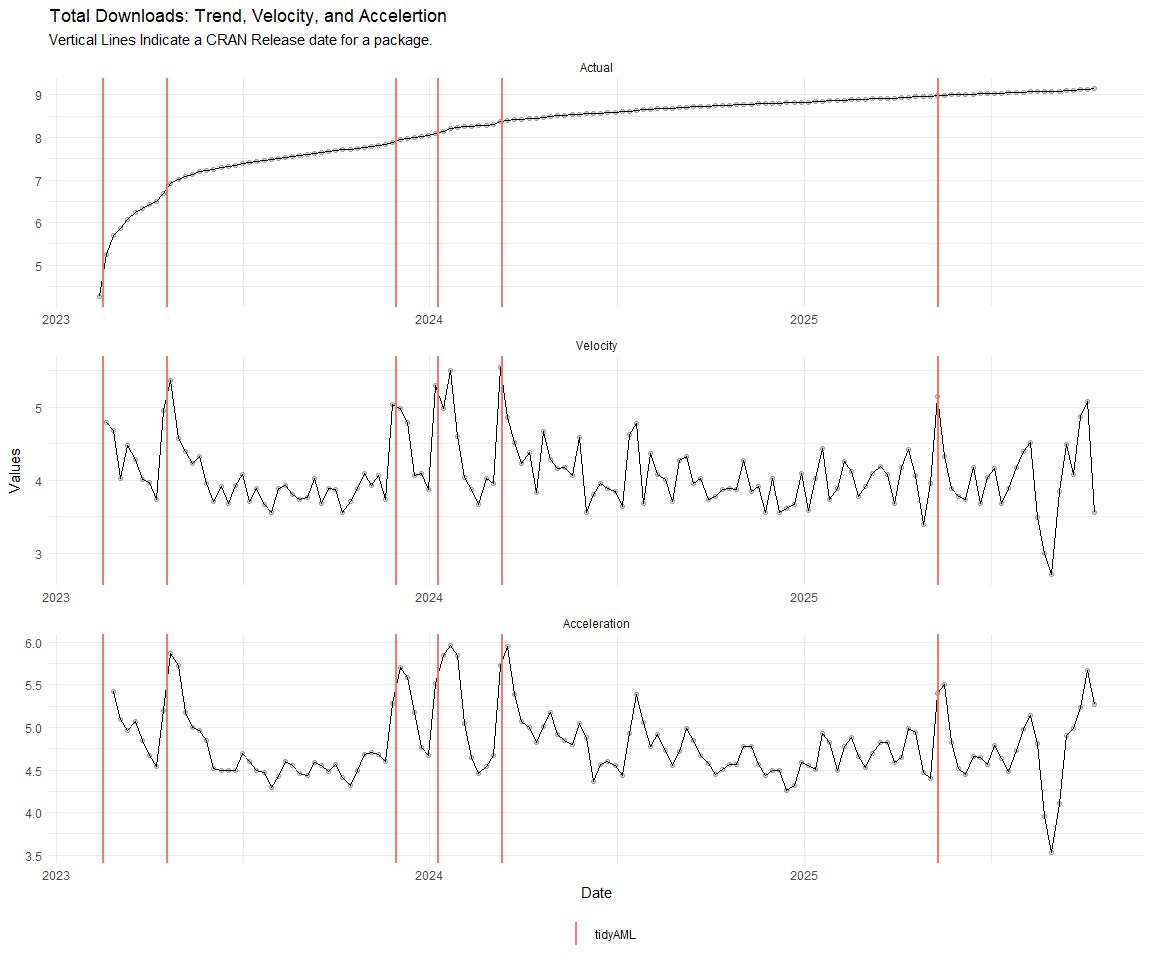

Now let's take a look at a time-series plot of the total daily downloads by package. We will use a log scale and place a vertical line at each version release for each package.


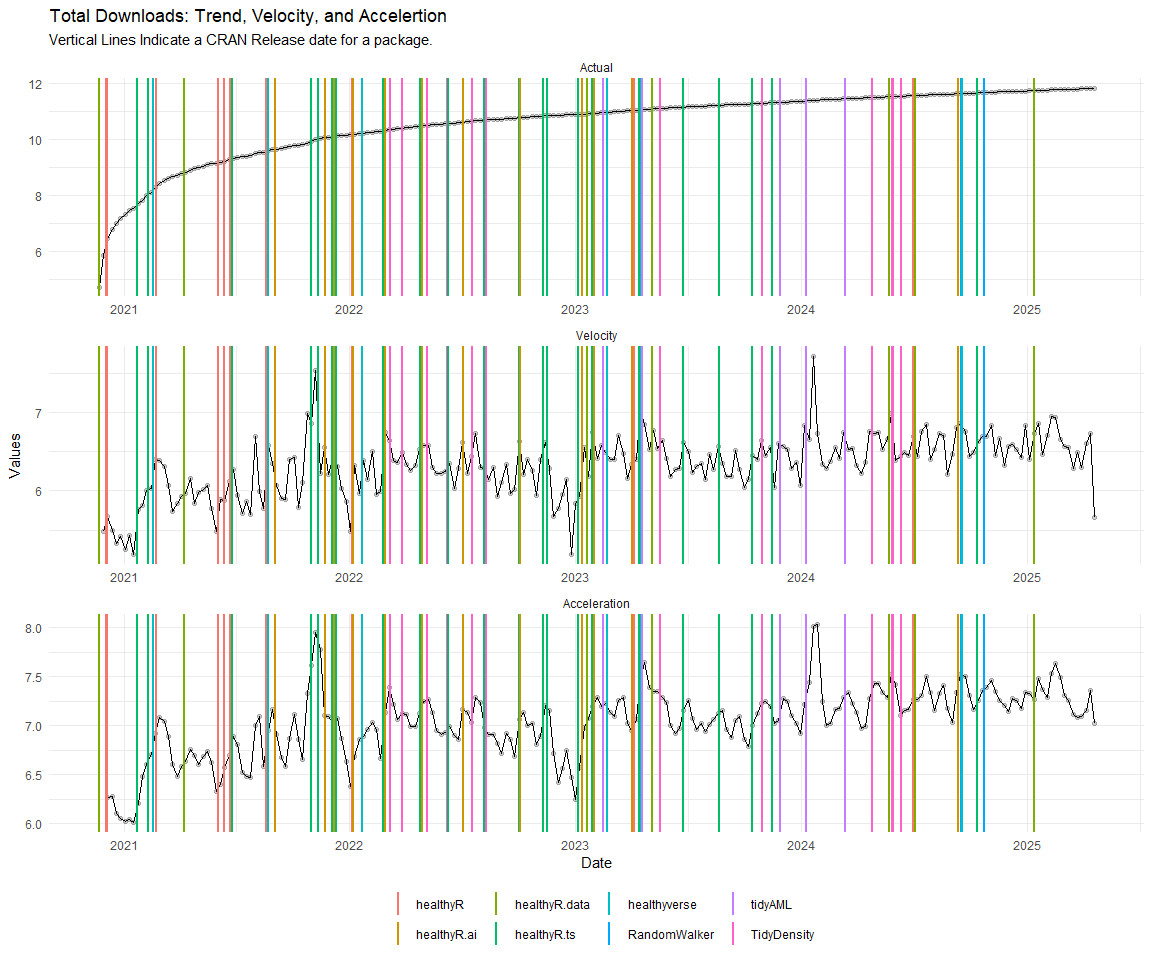

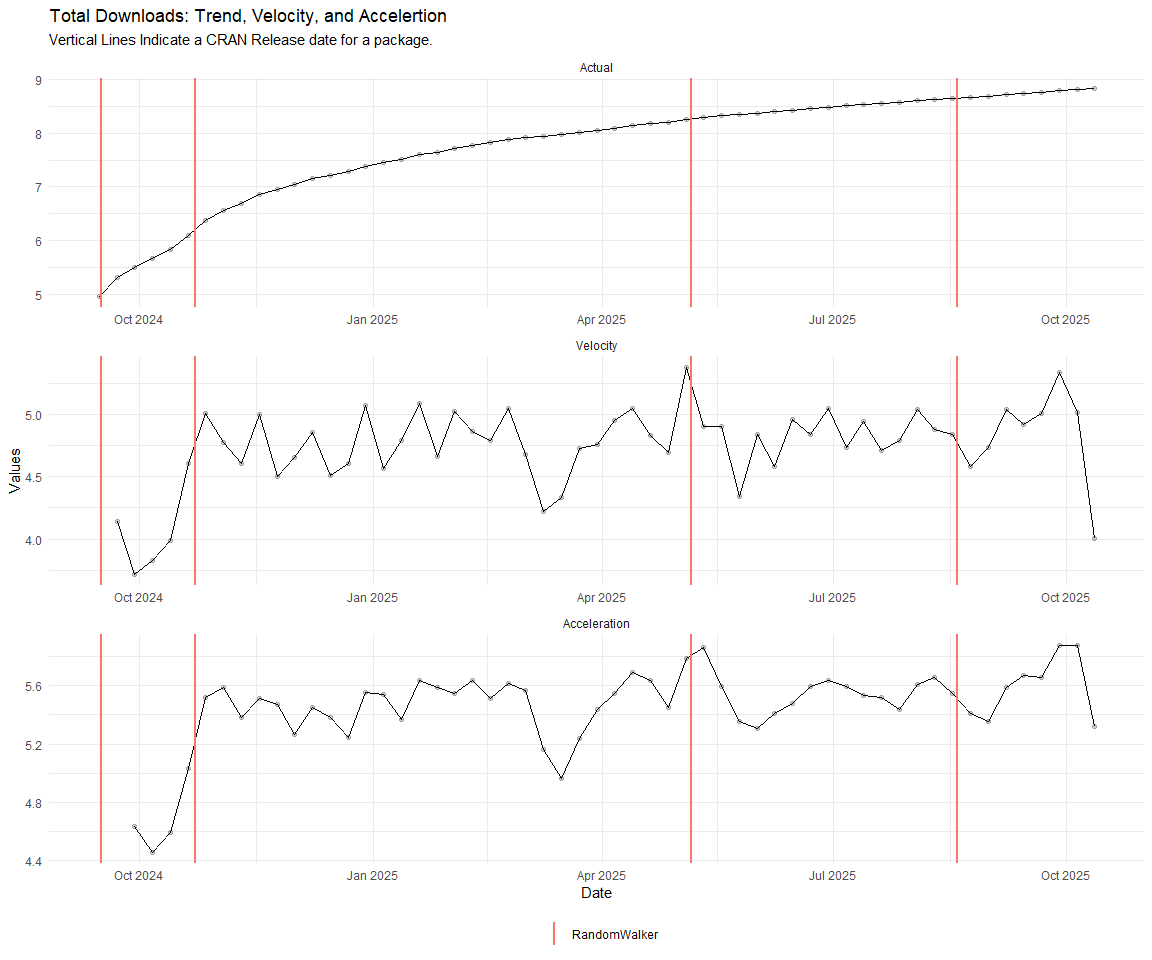

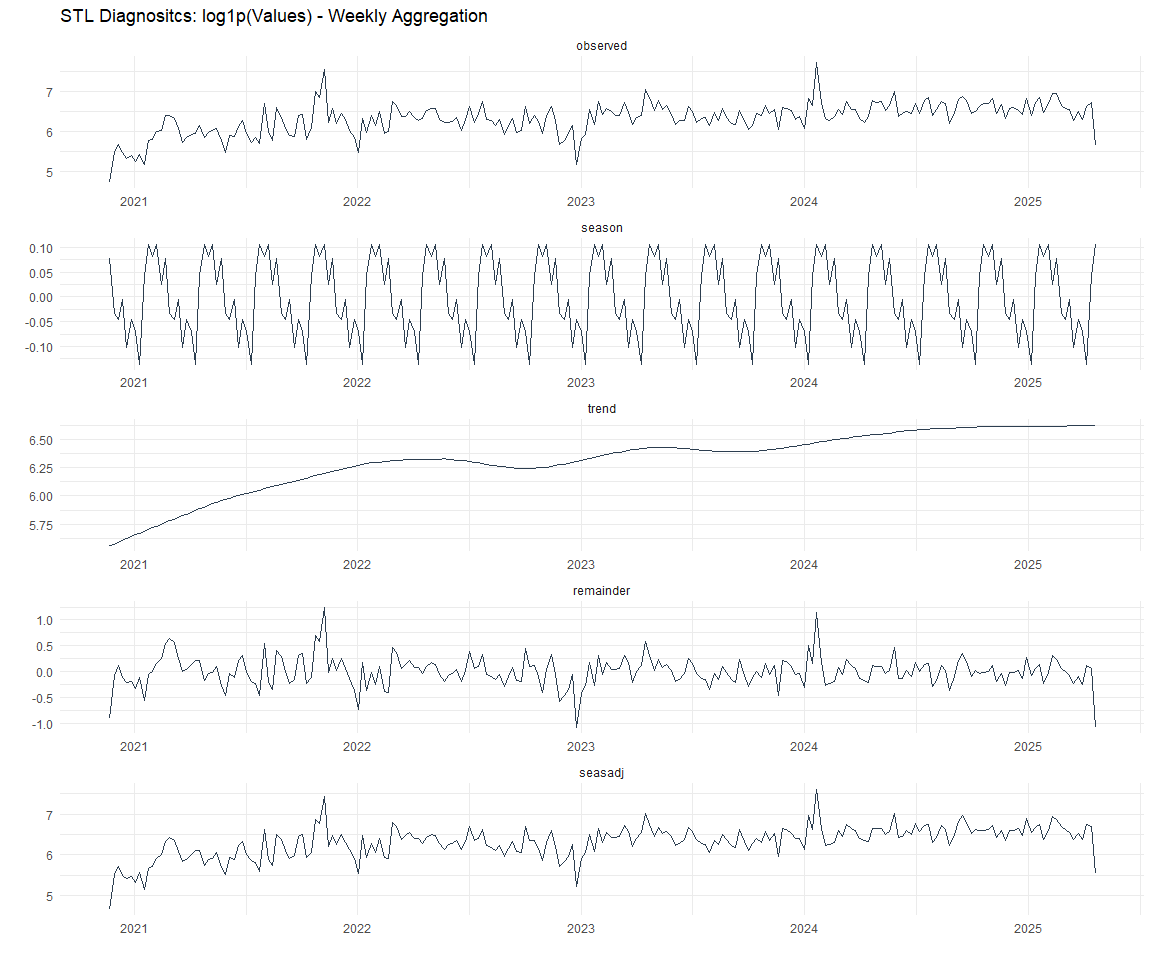

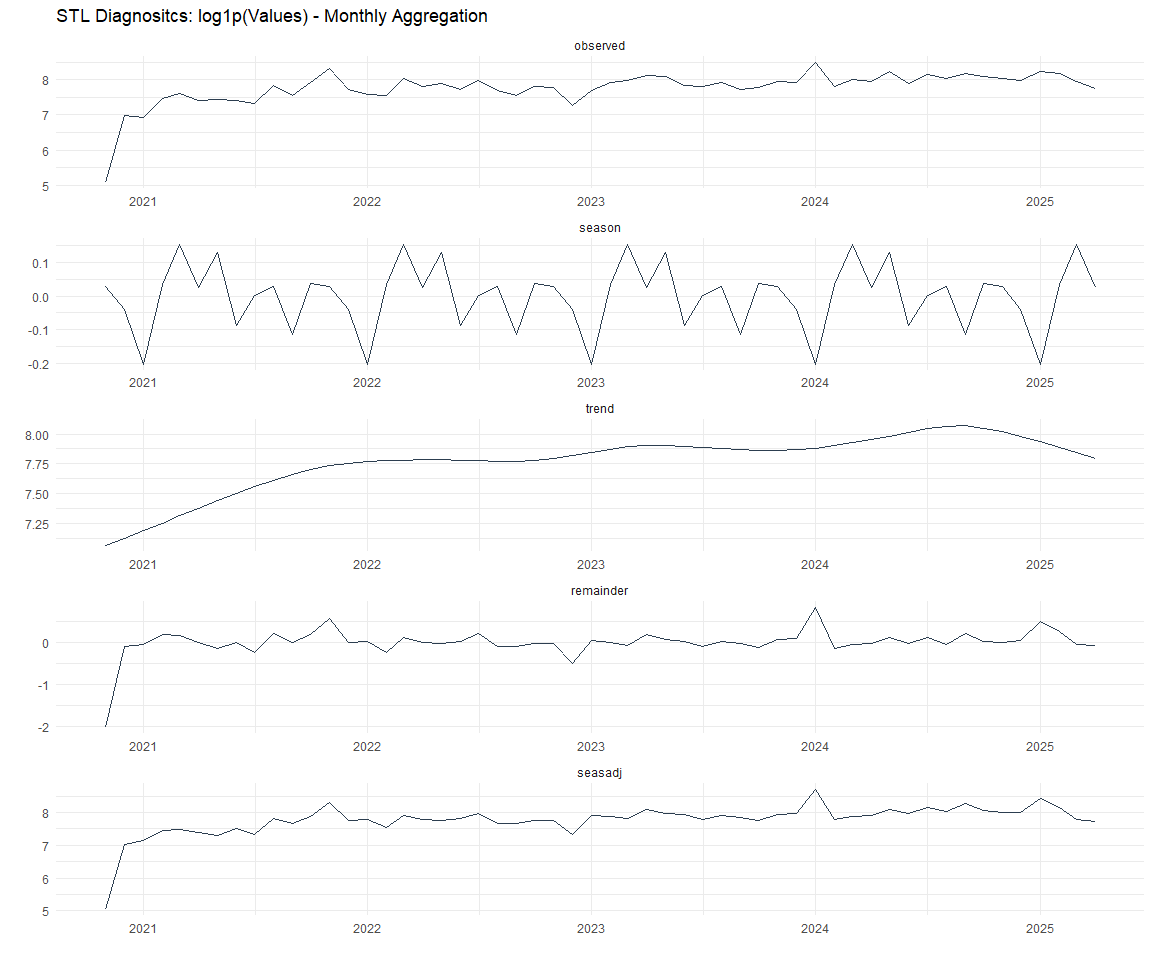

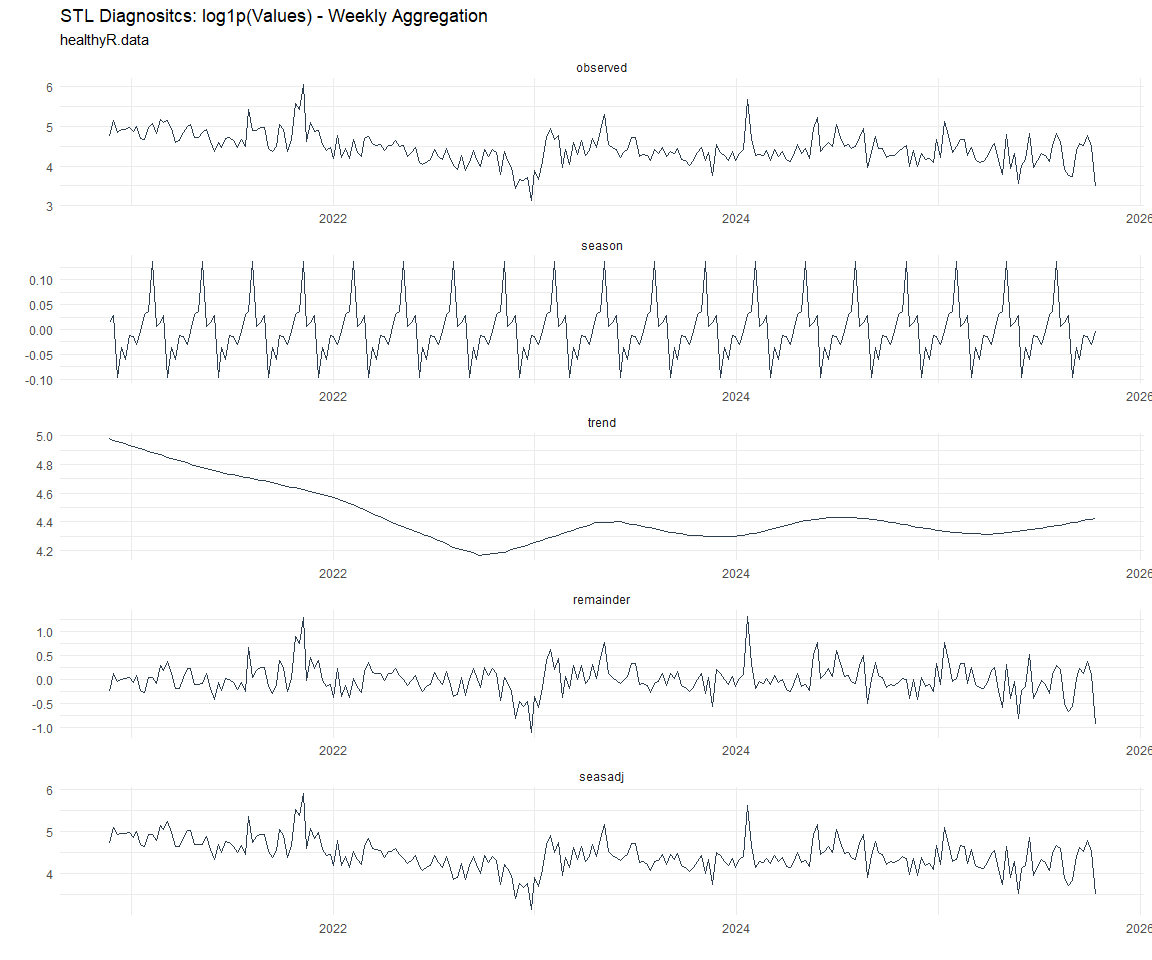

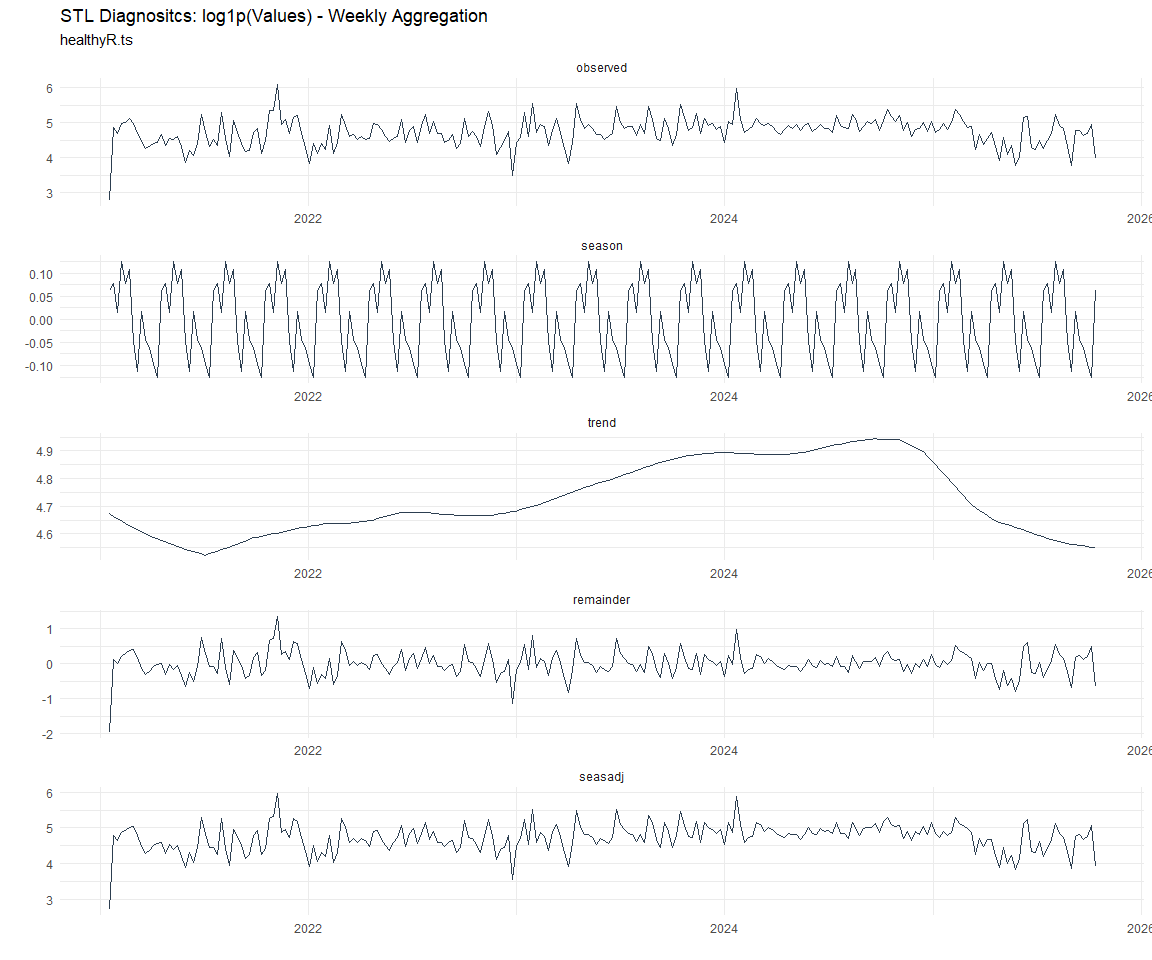

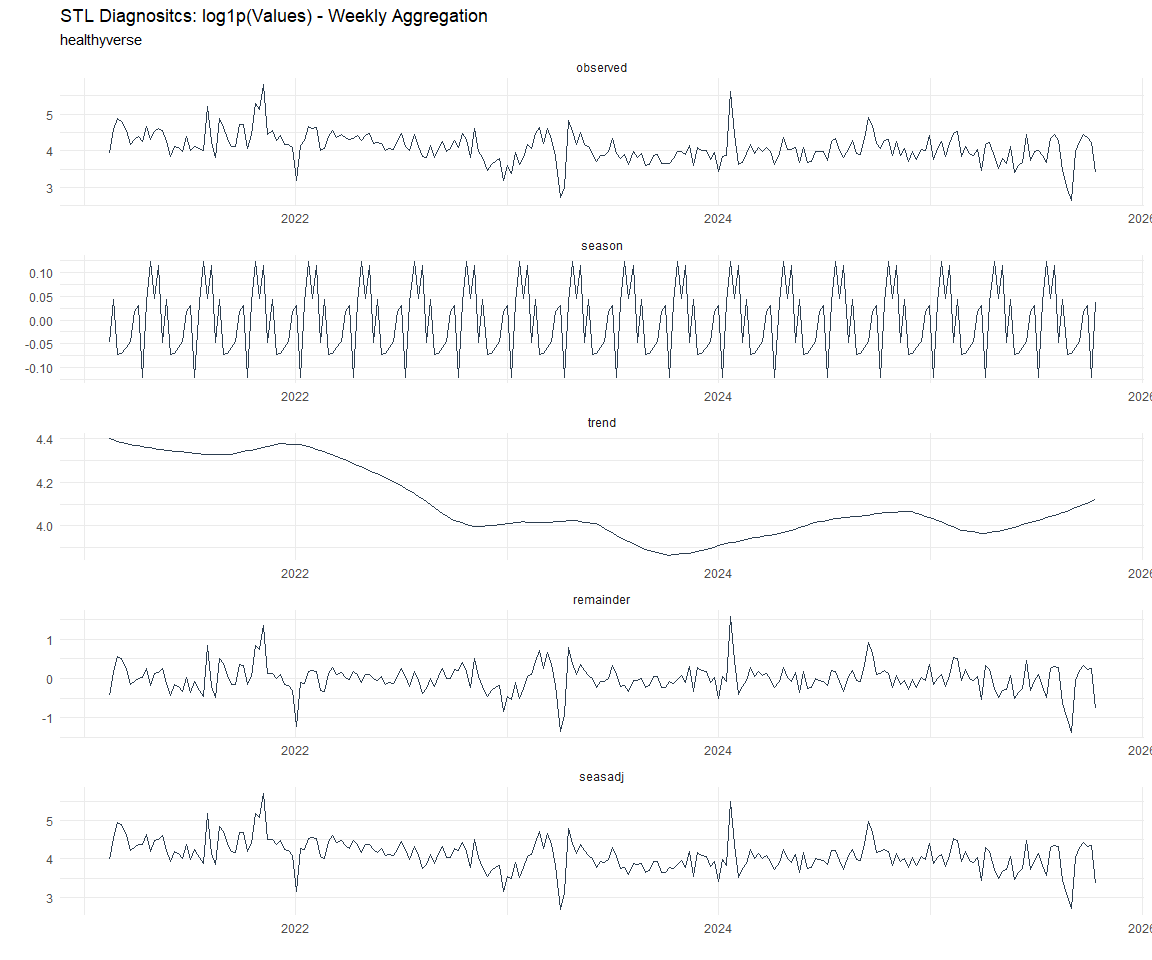

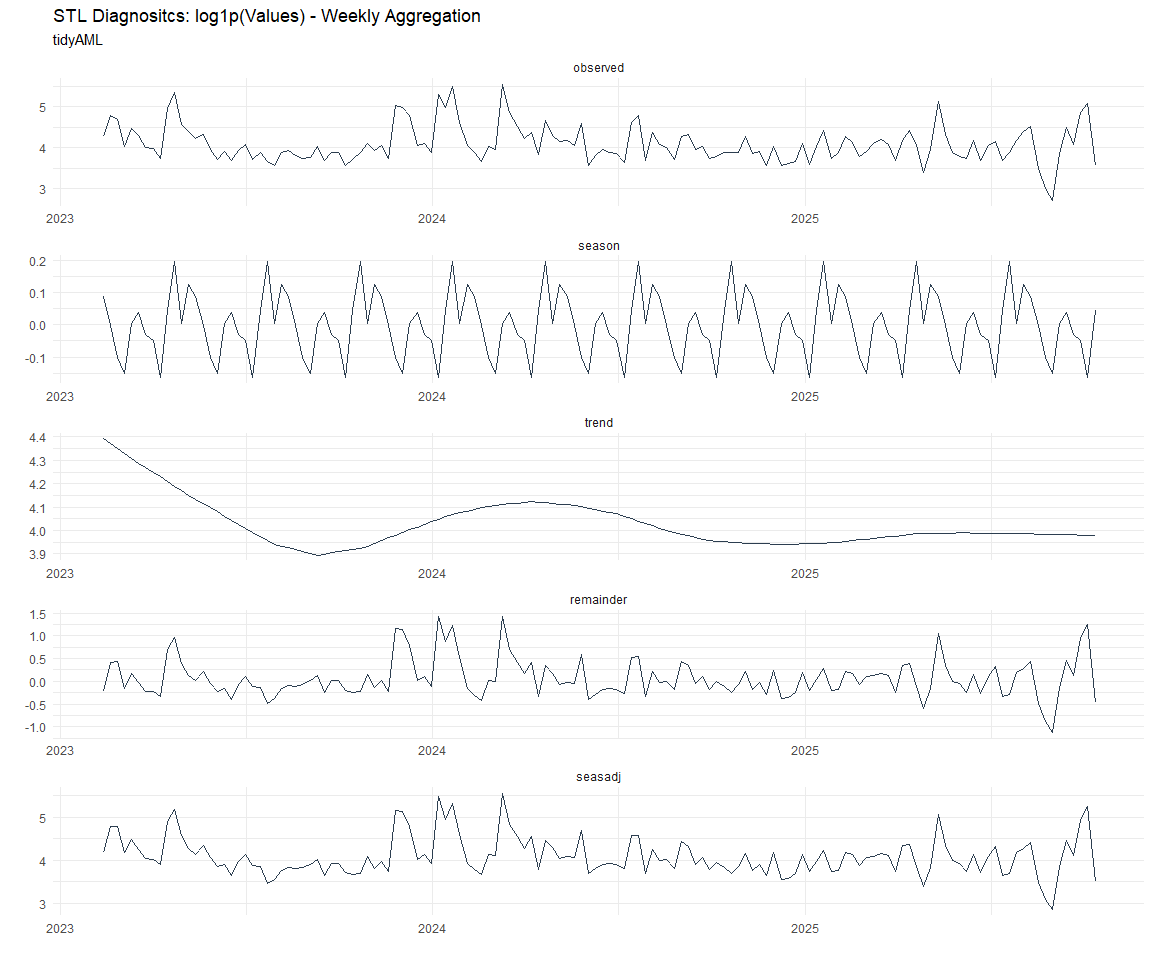

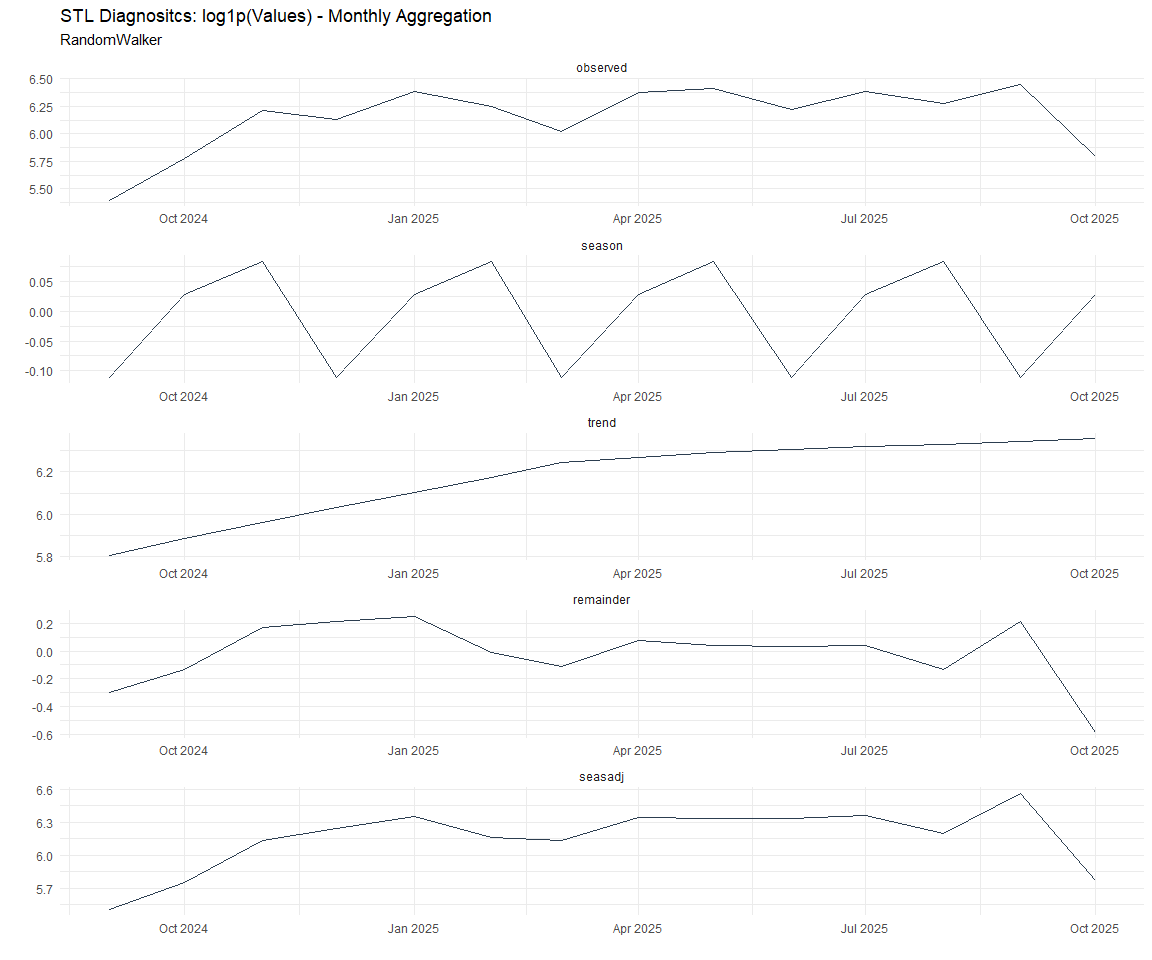

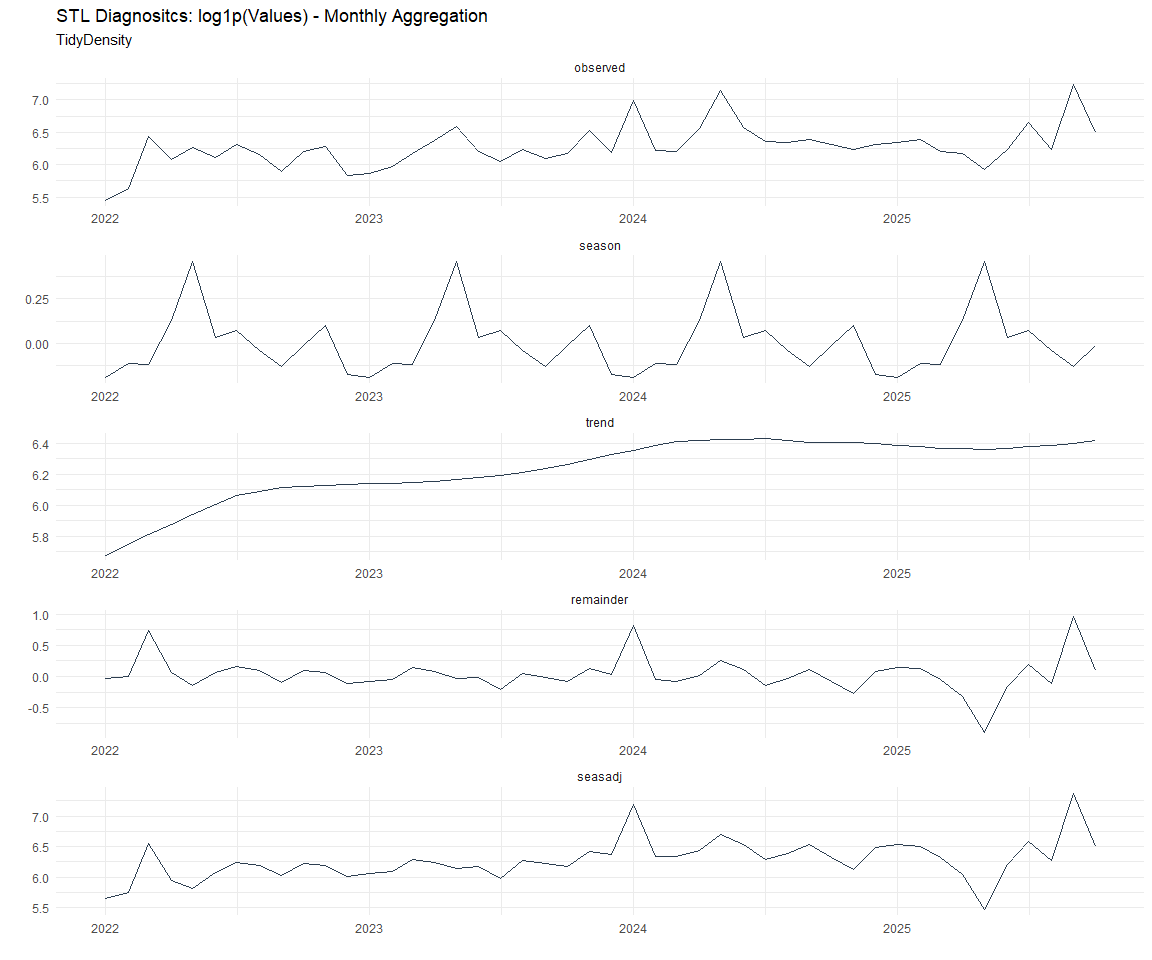

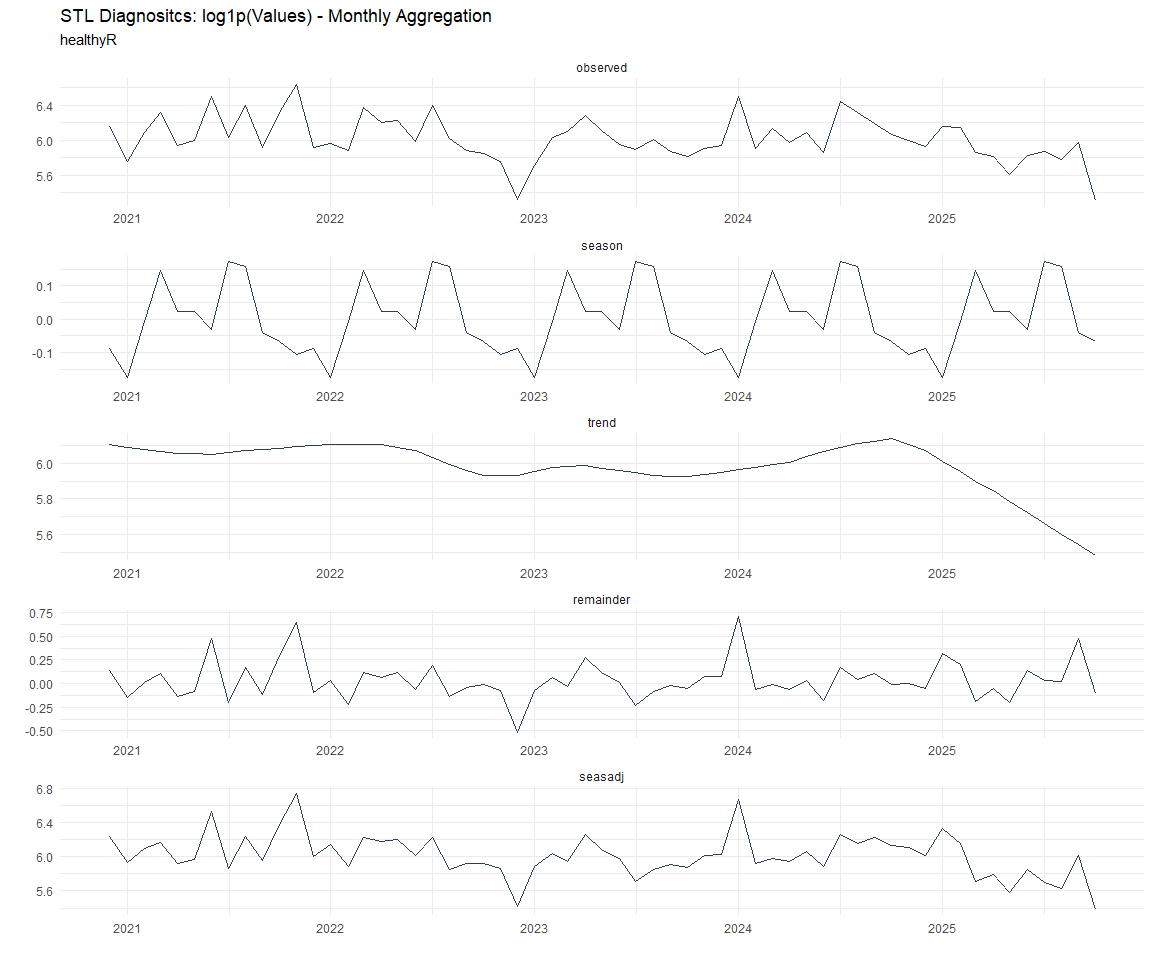

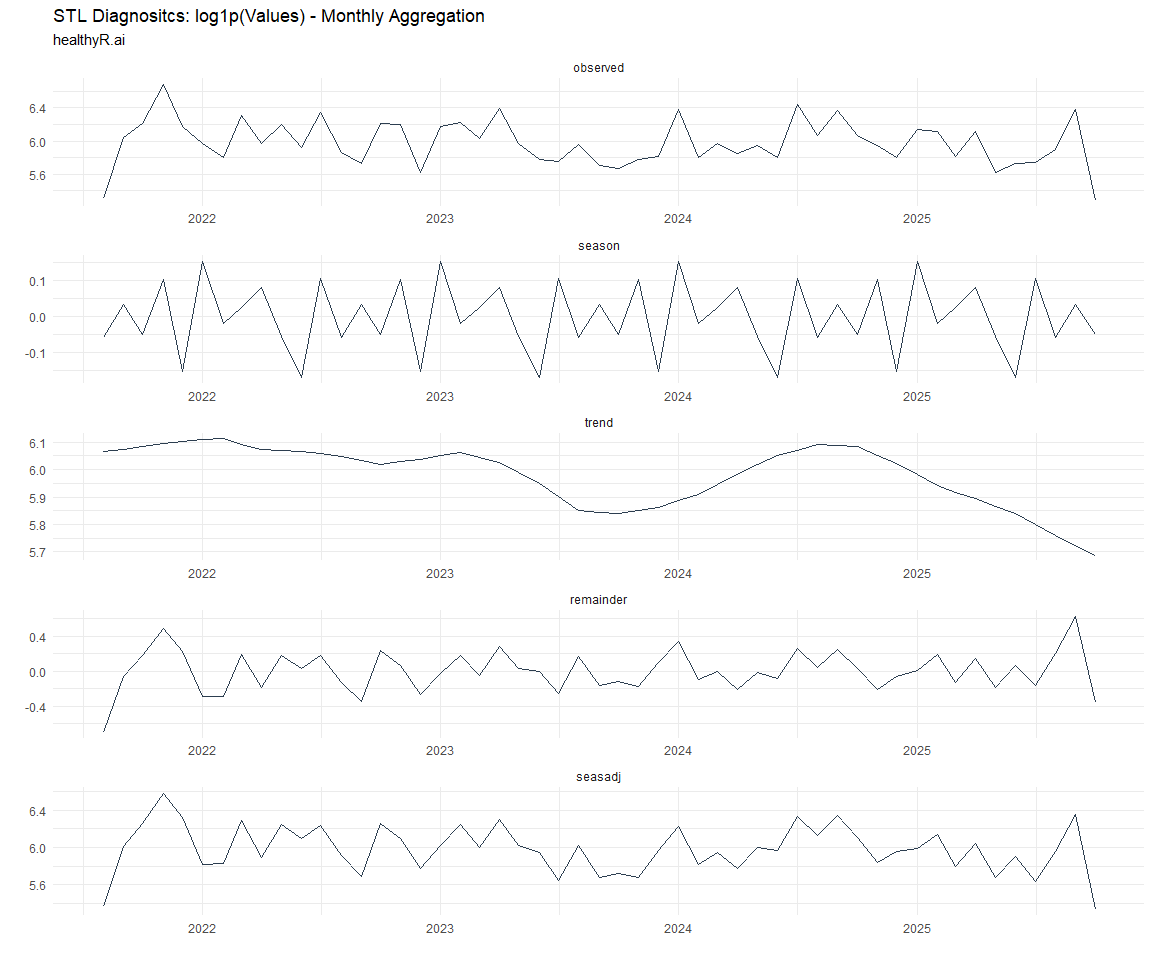

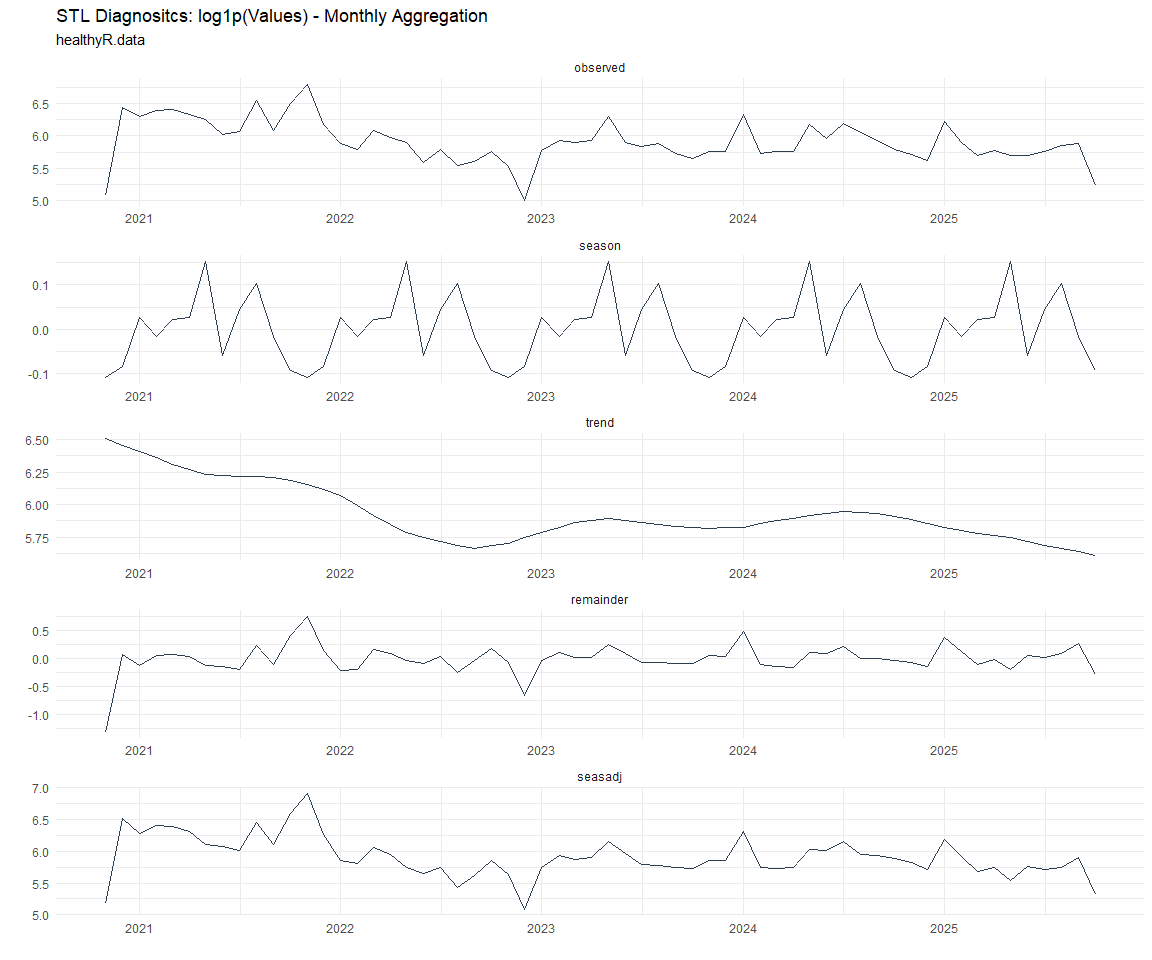

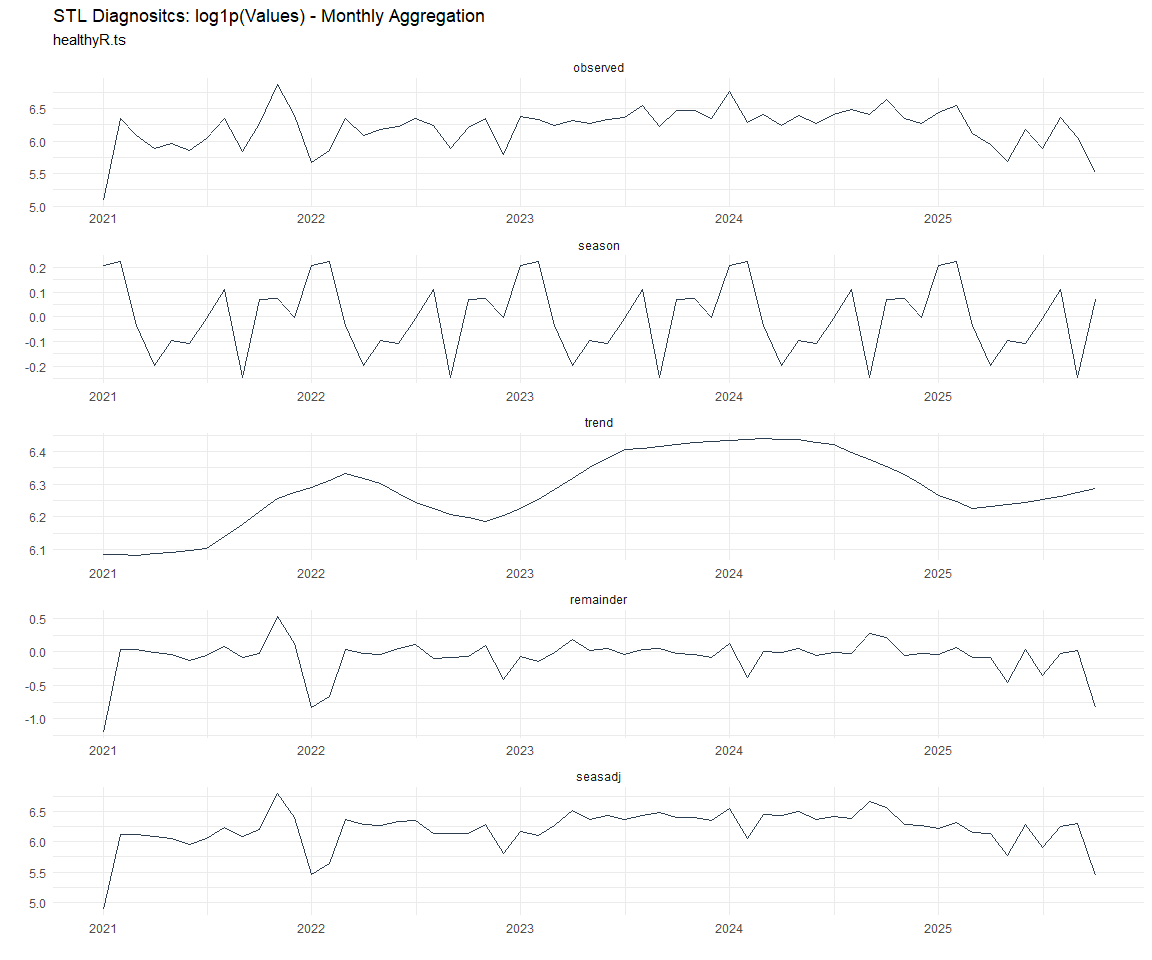

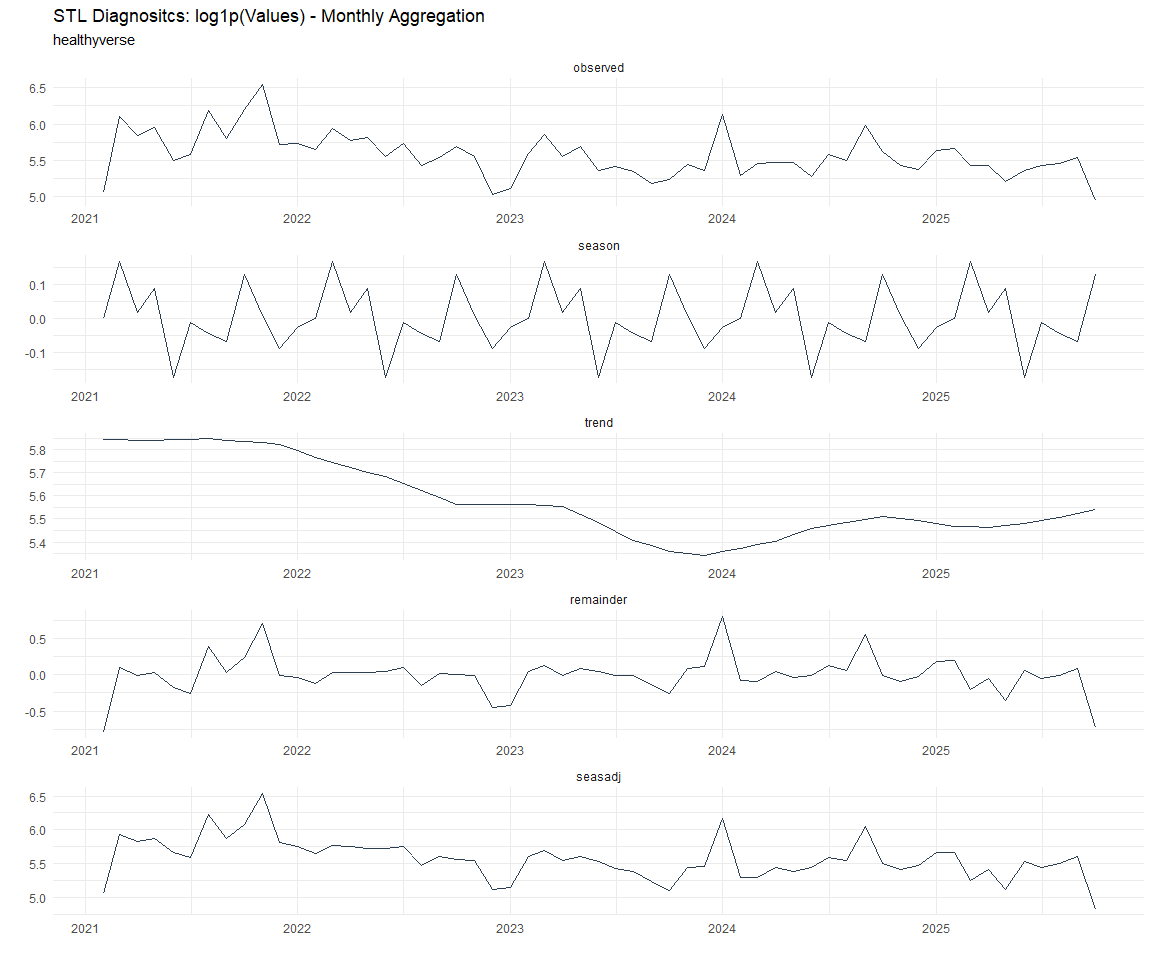

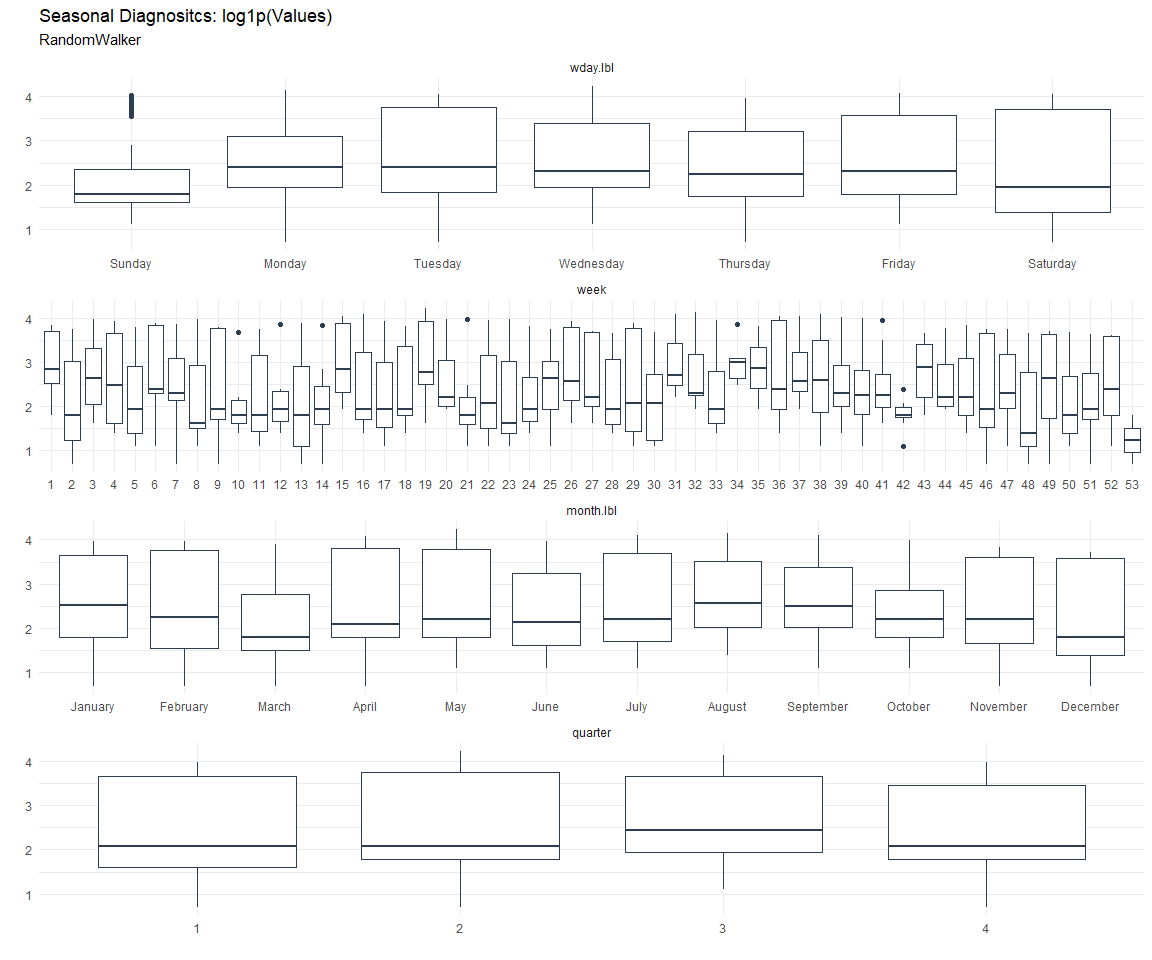

Now let's take a look at some time series decomposition graphs.


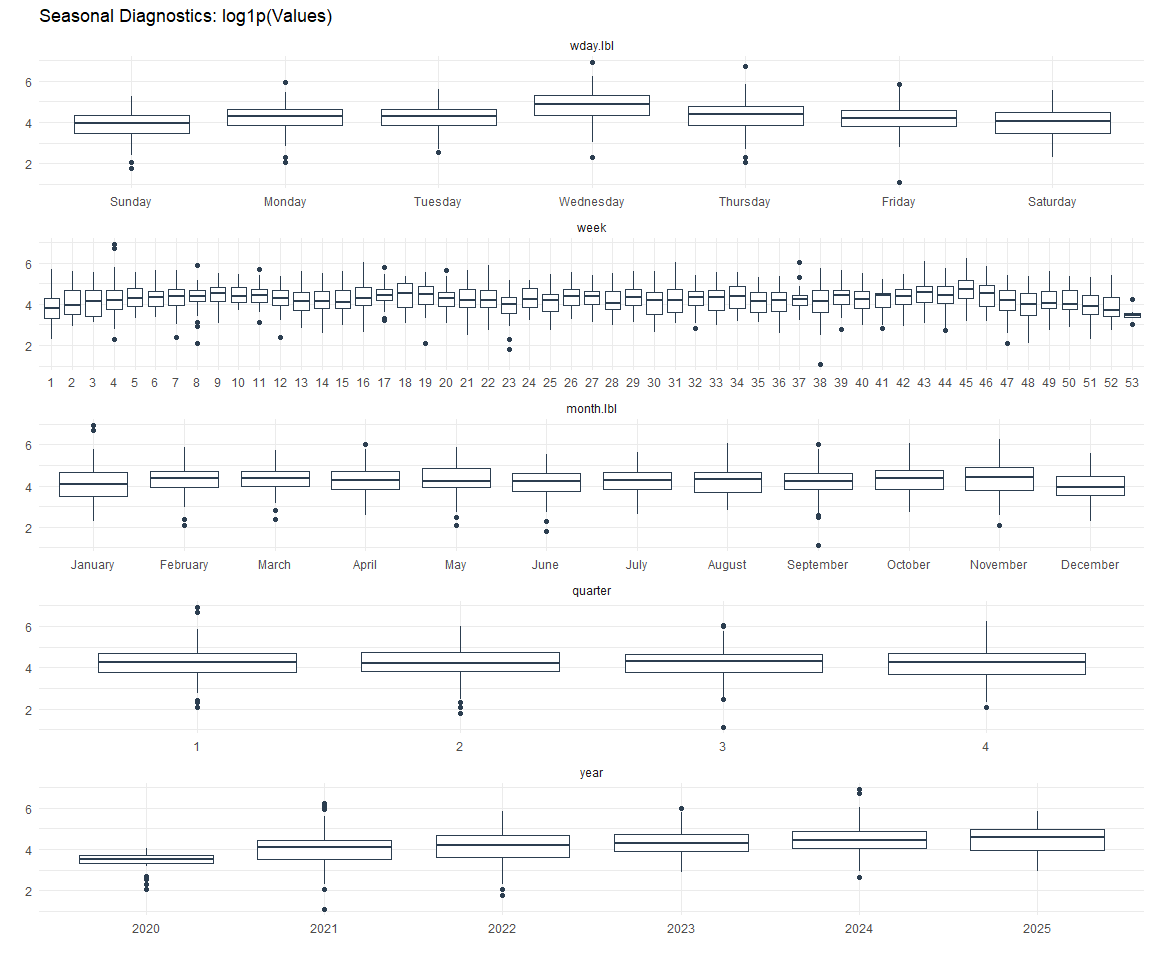

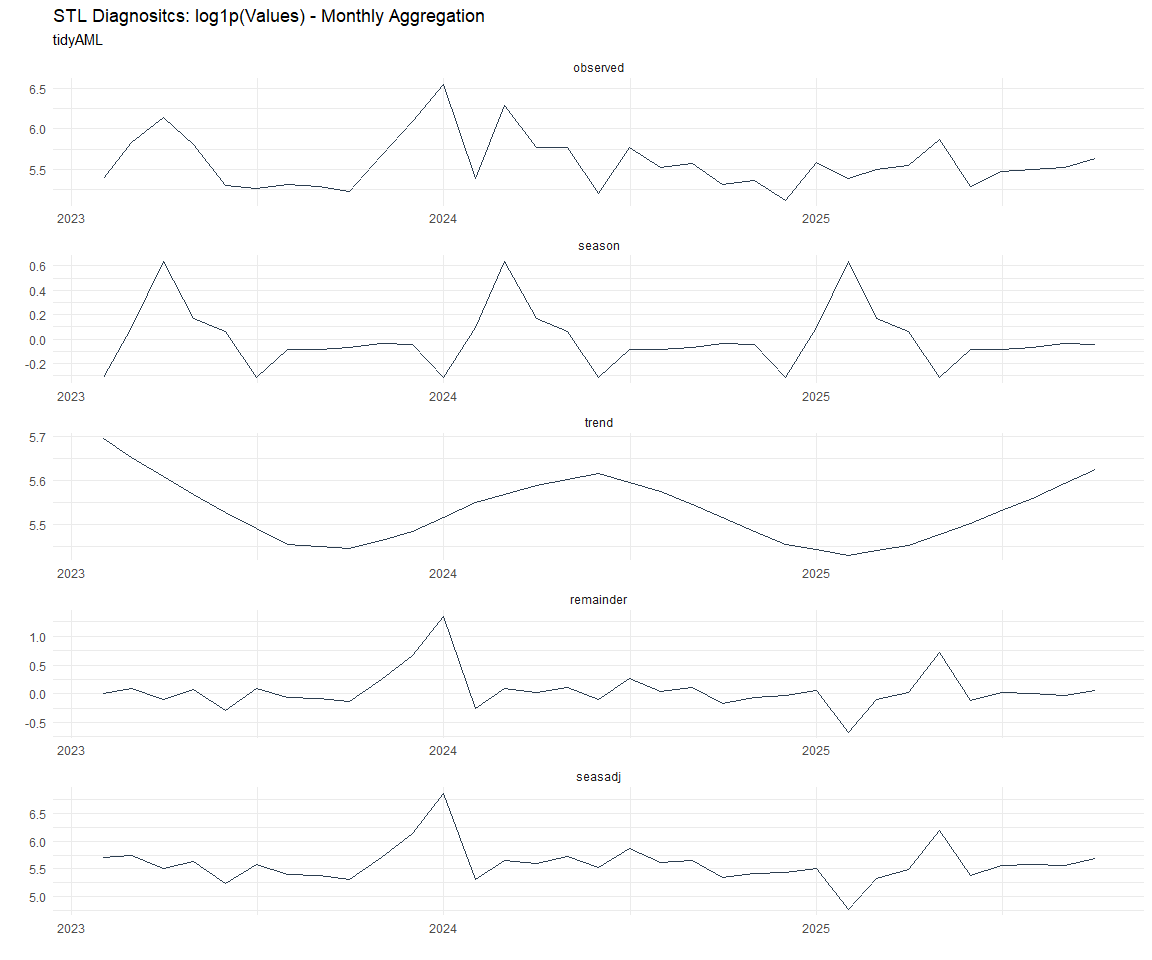

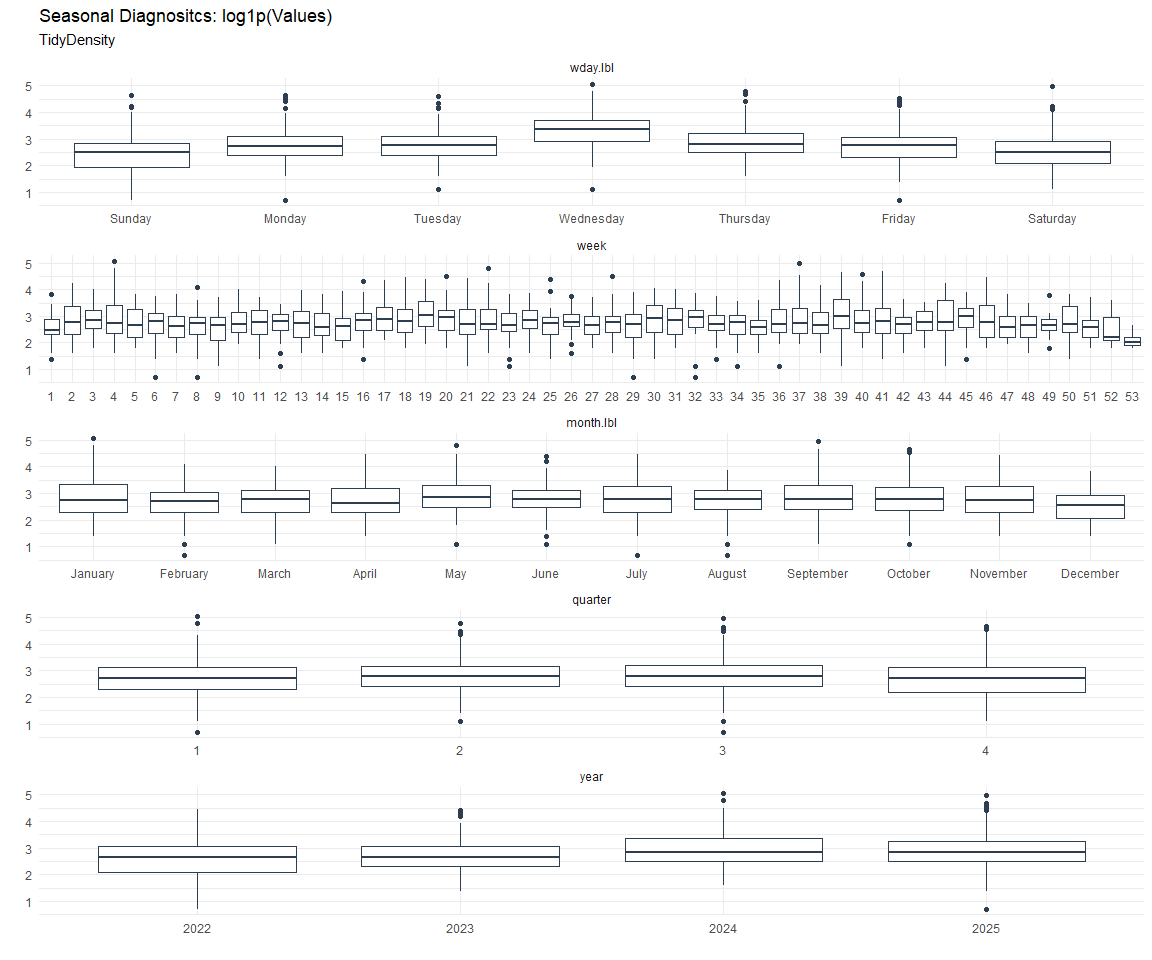

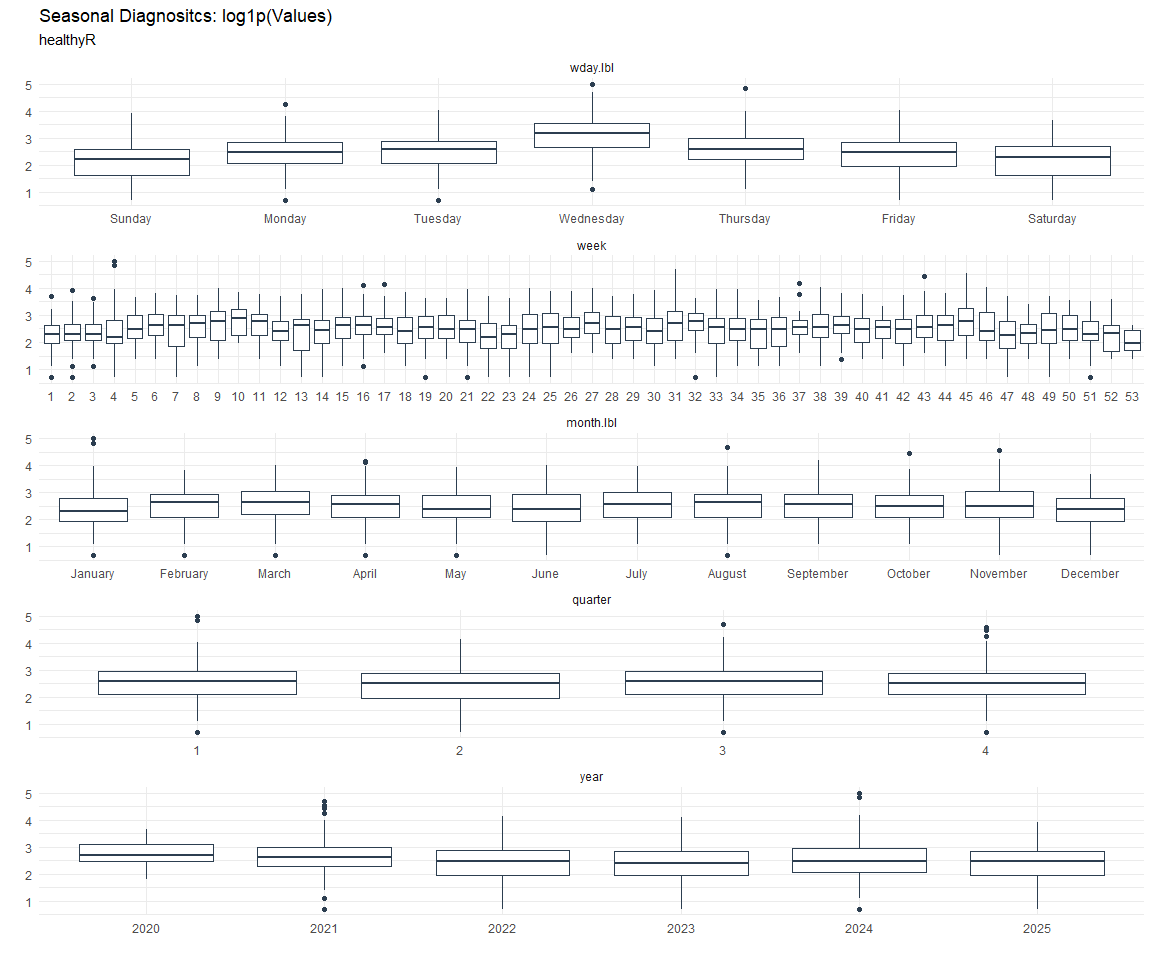

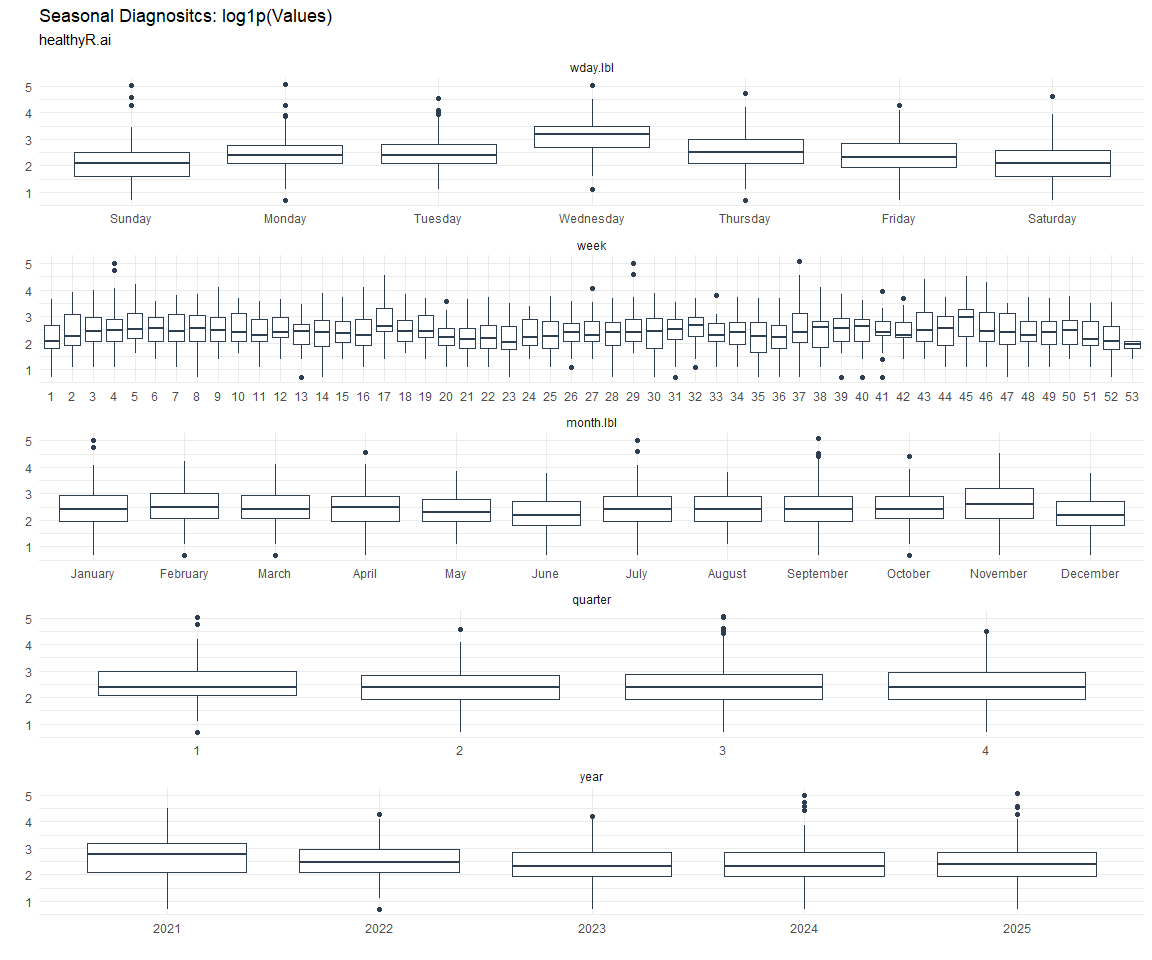

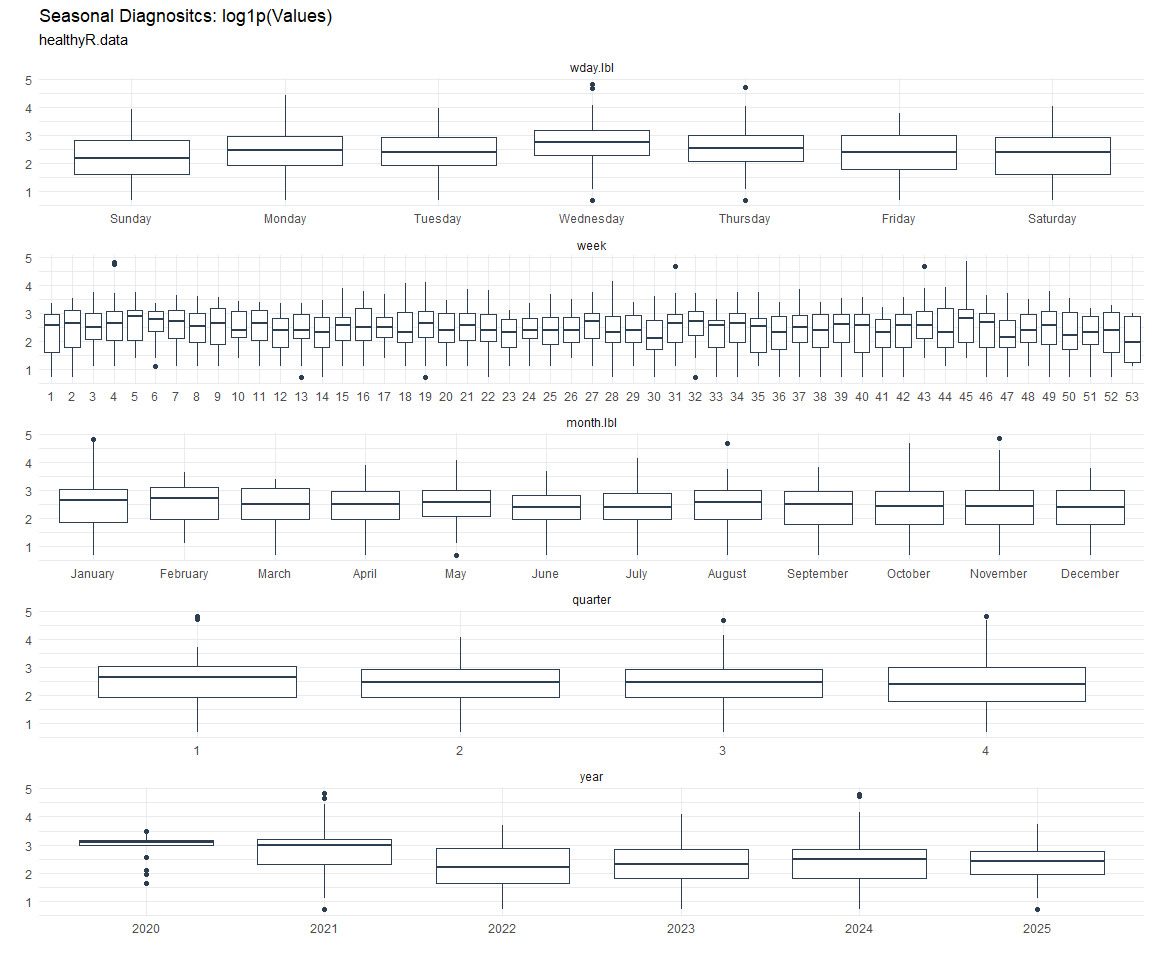

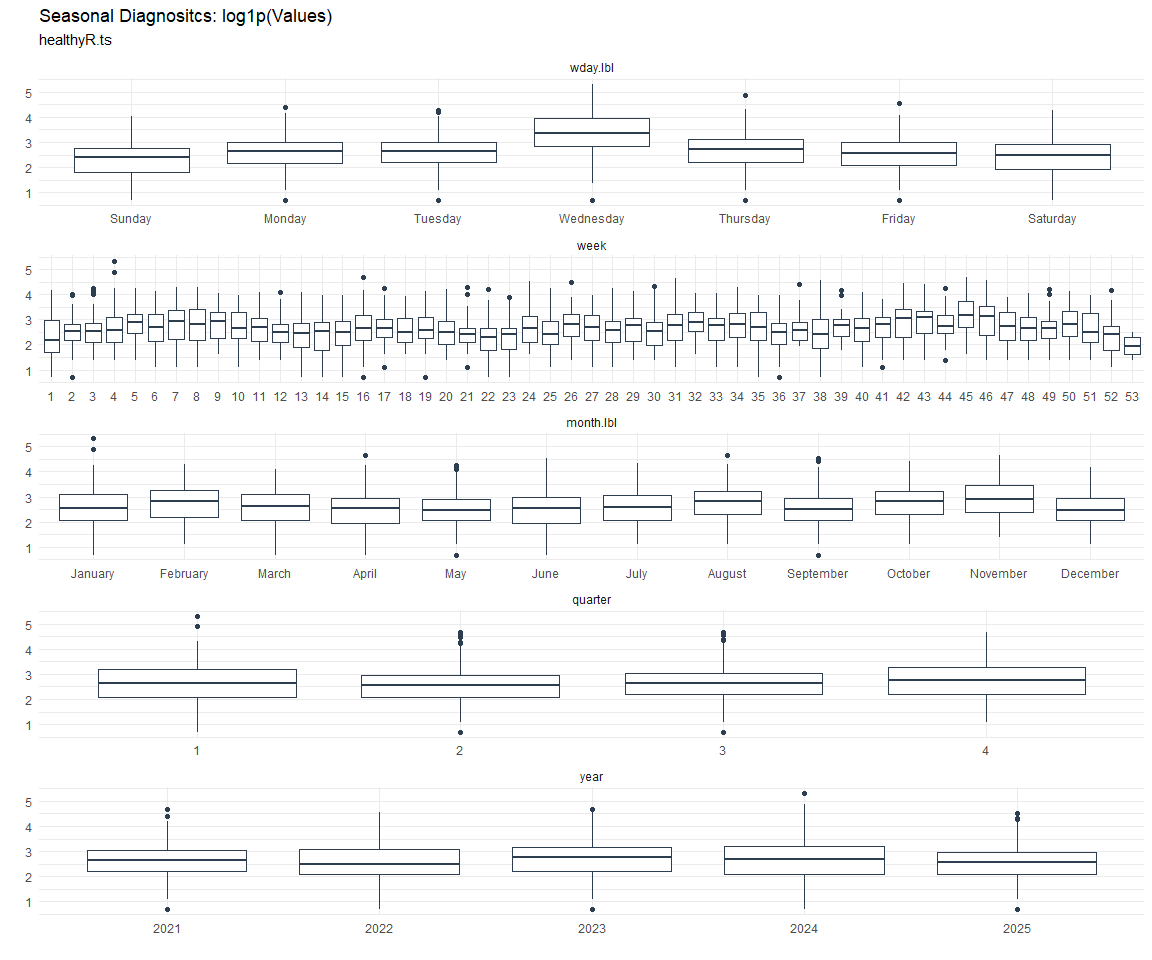

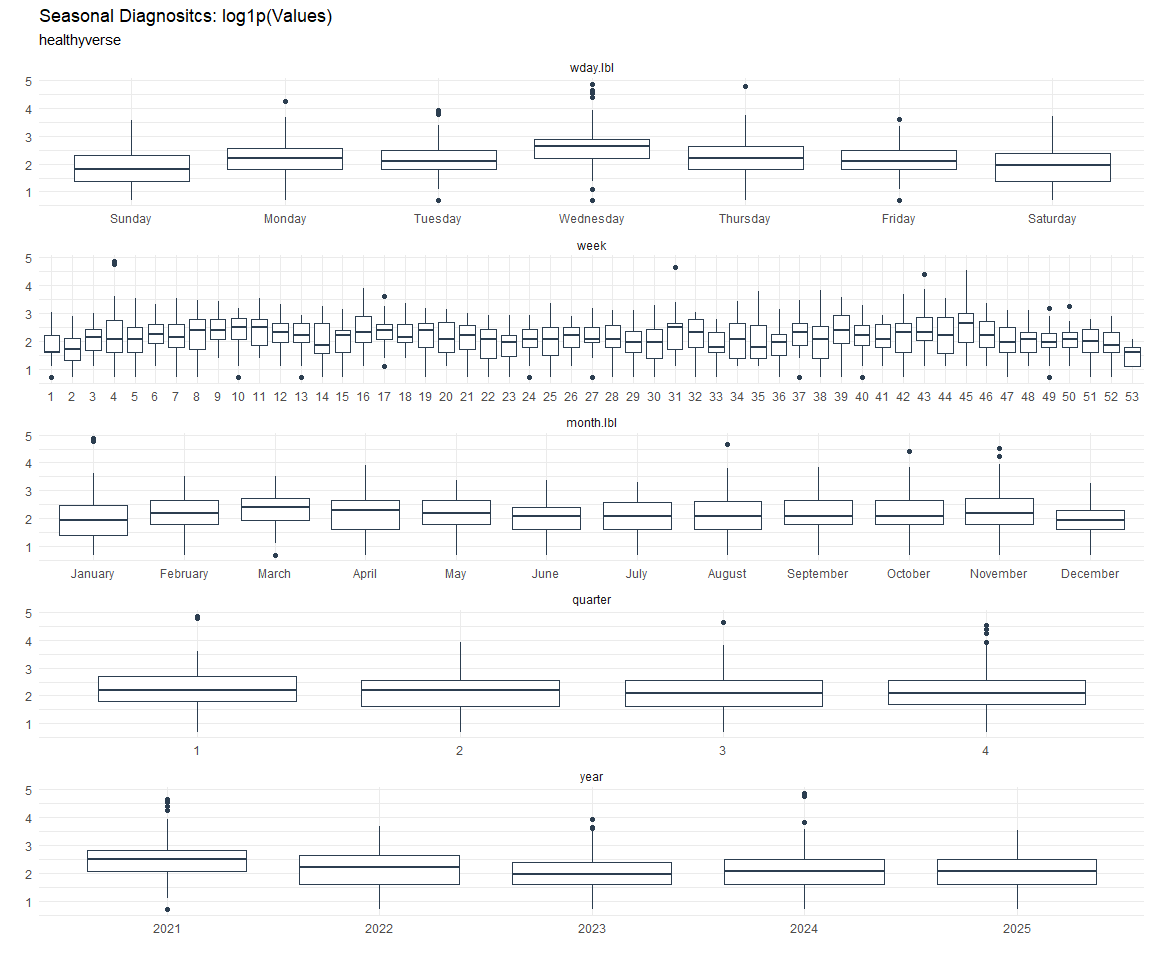

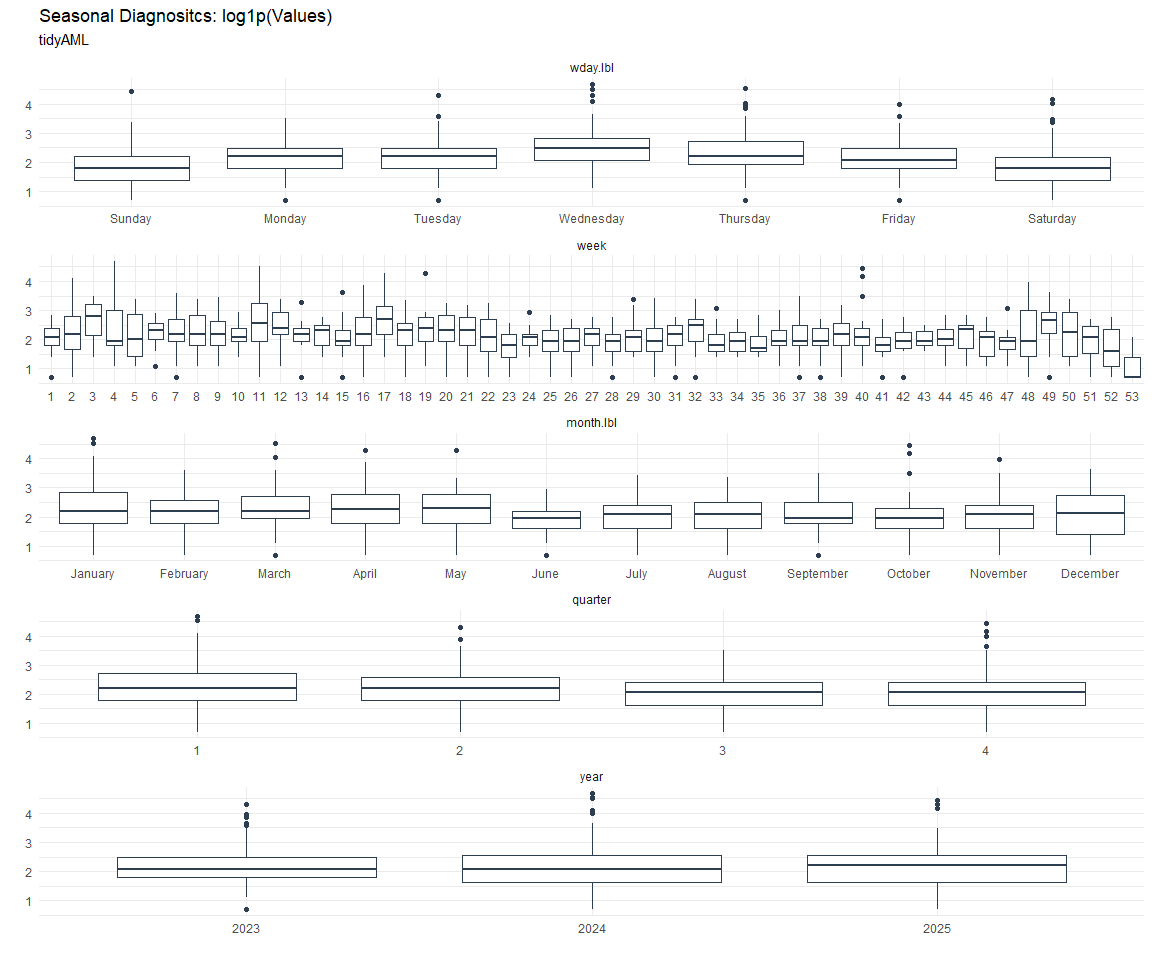

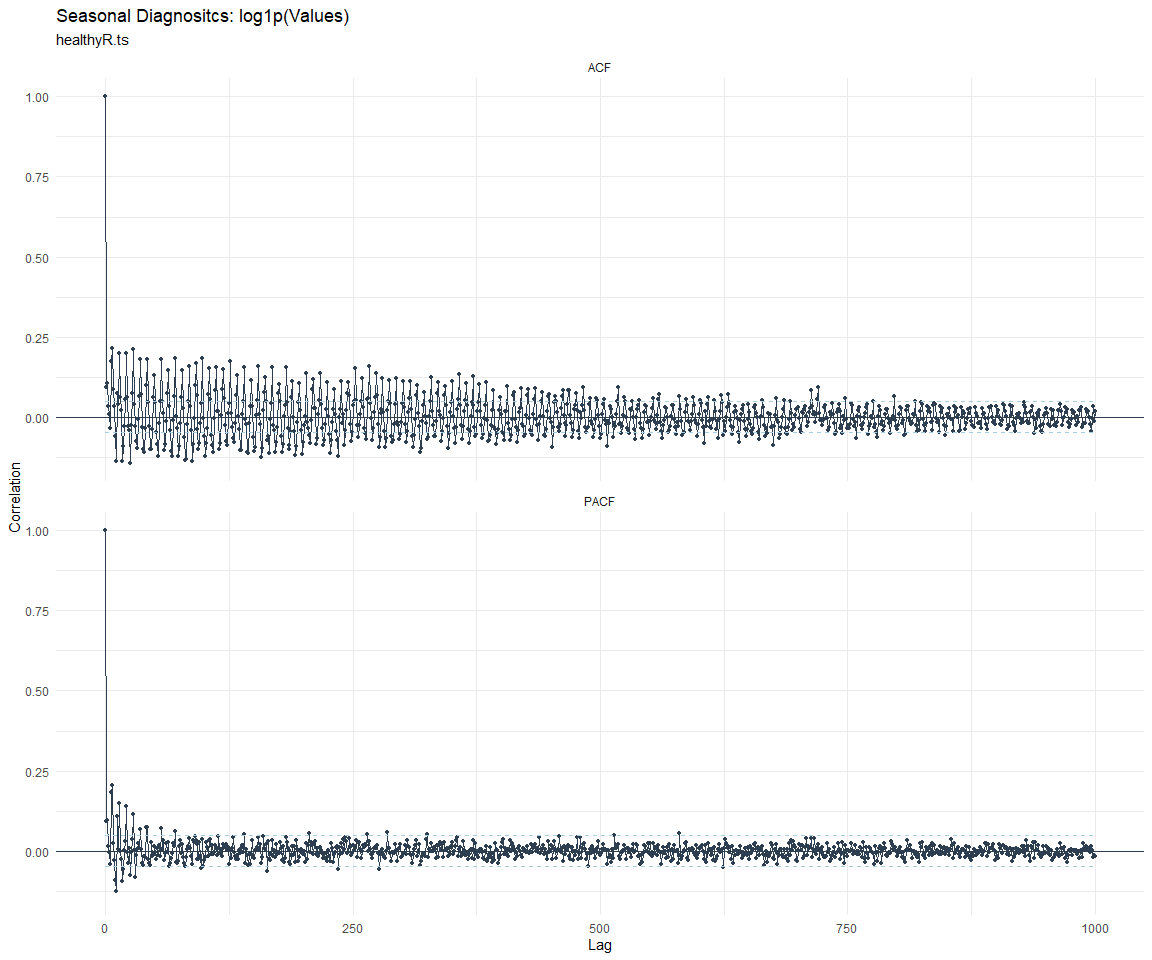

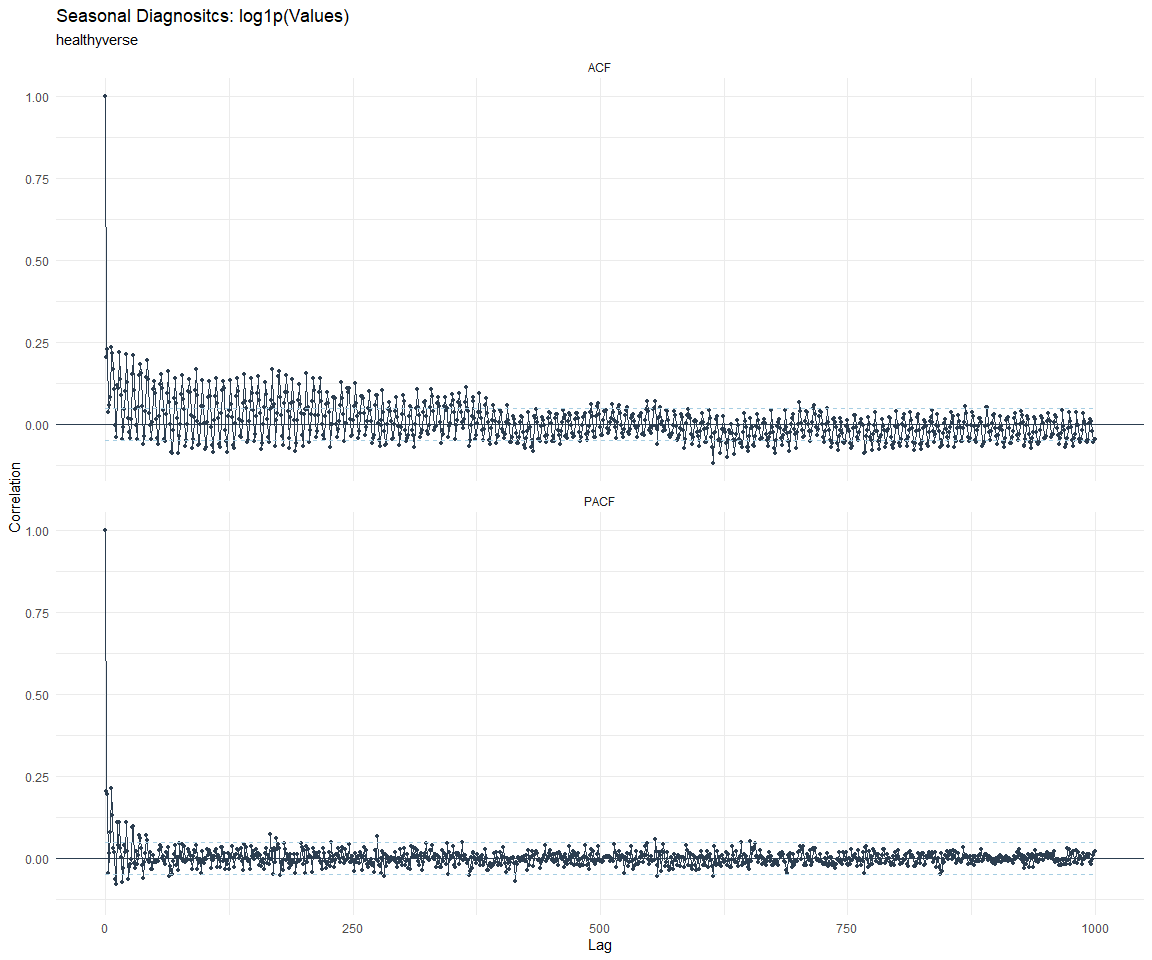

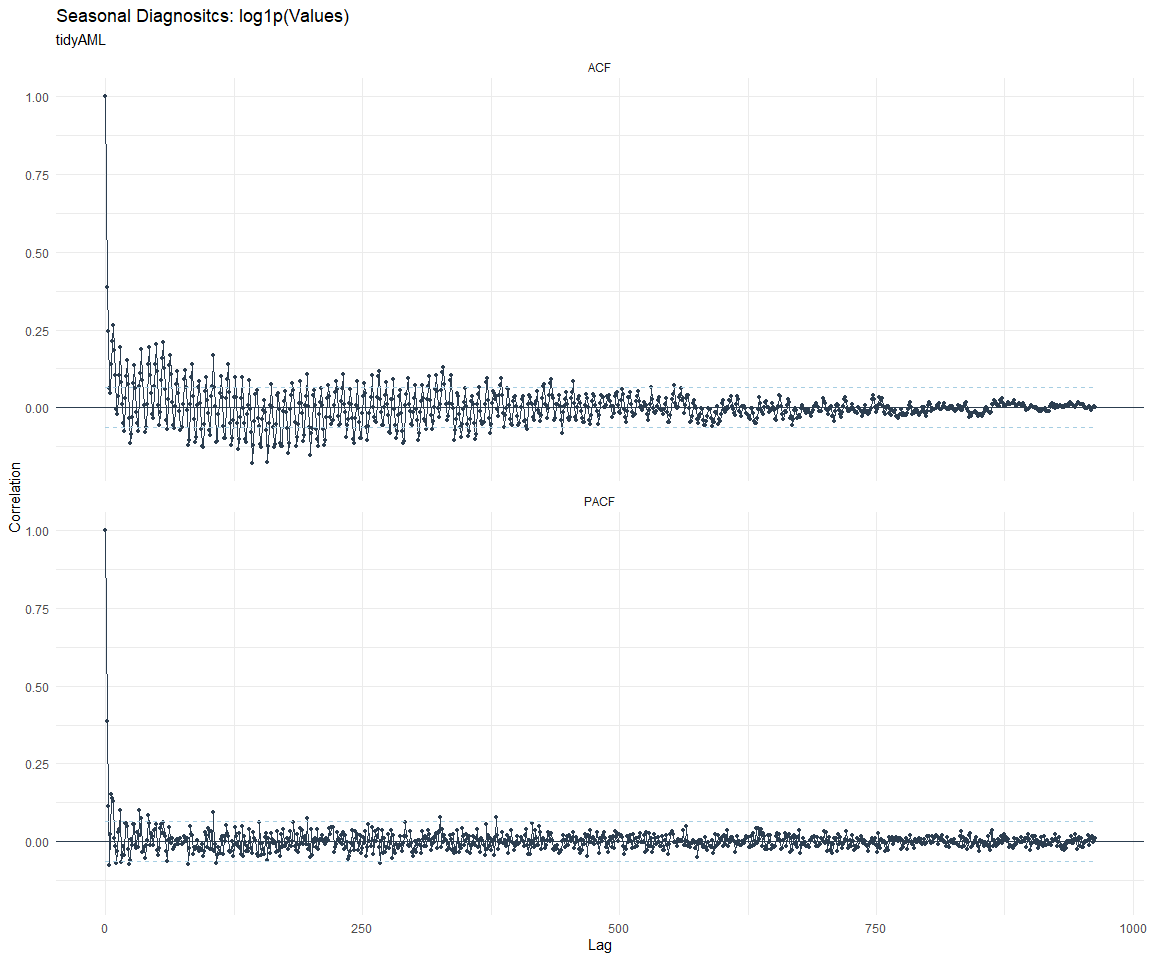

Seasonal Diagnostics:


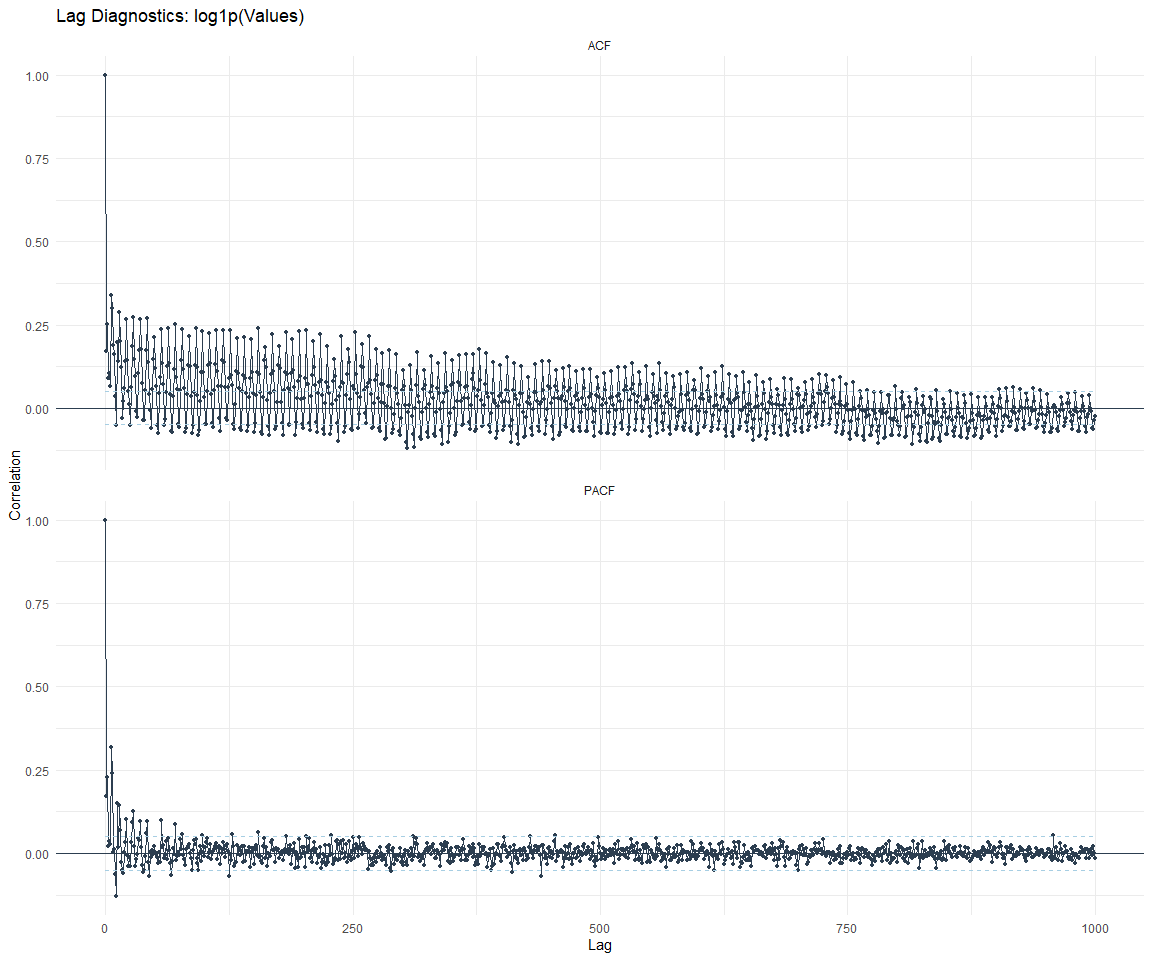

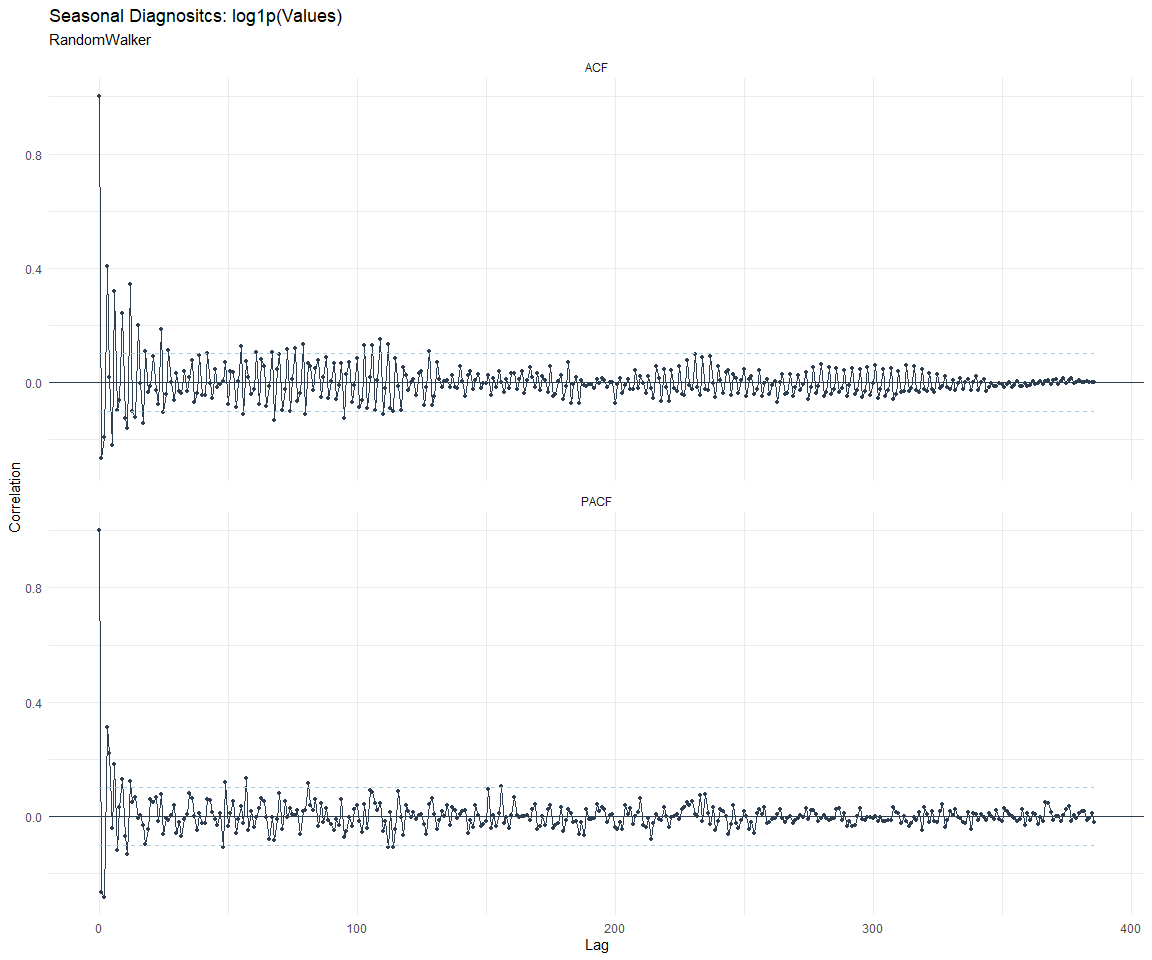

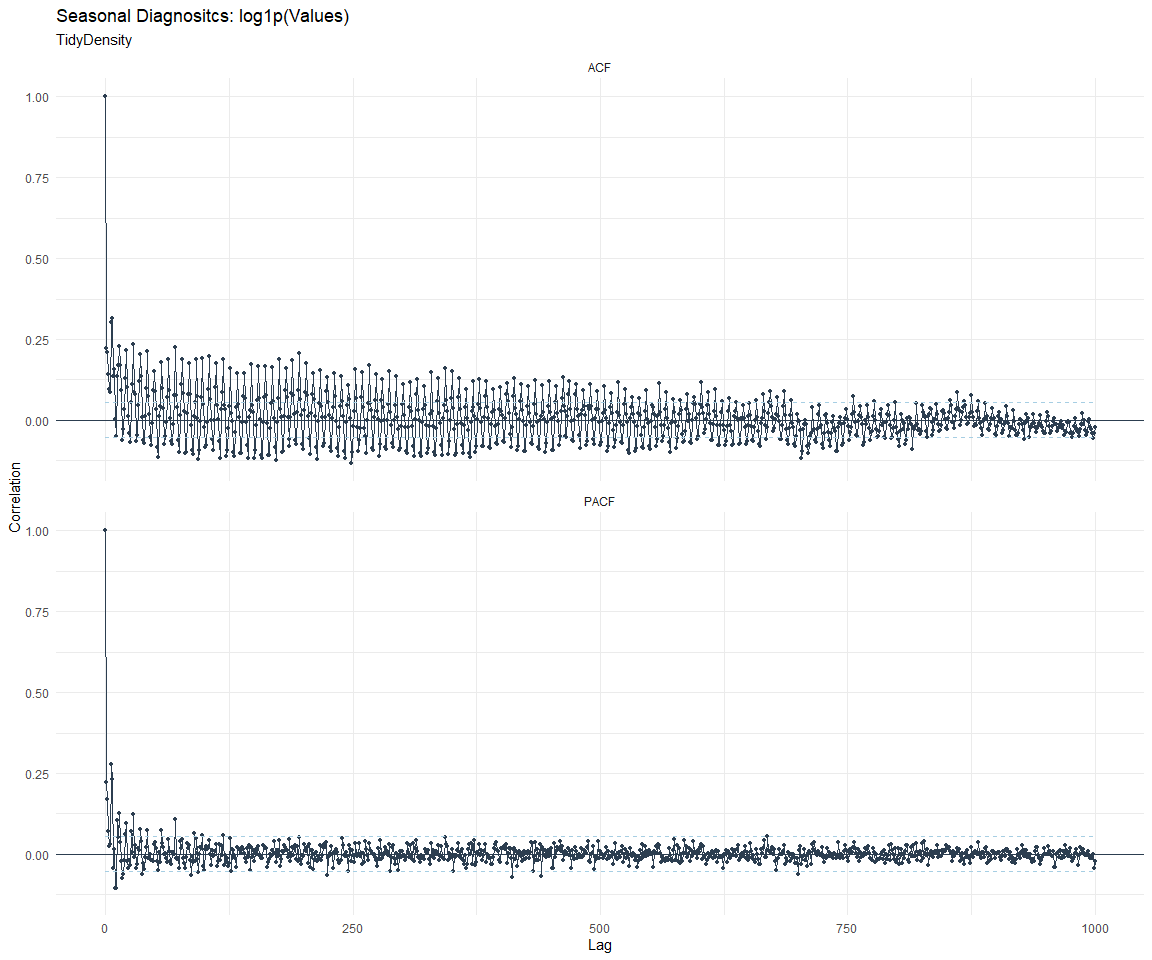

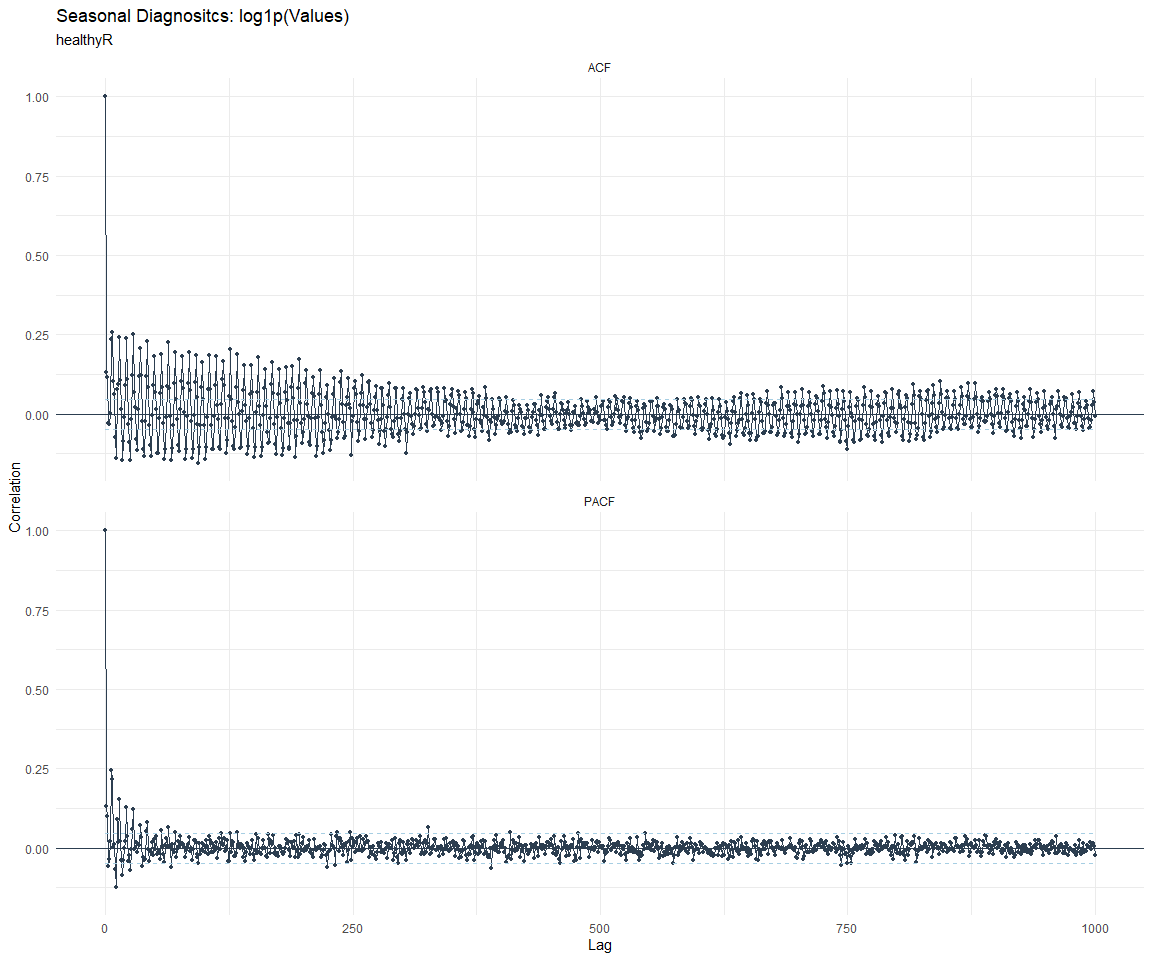

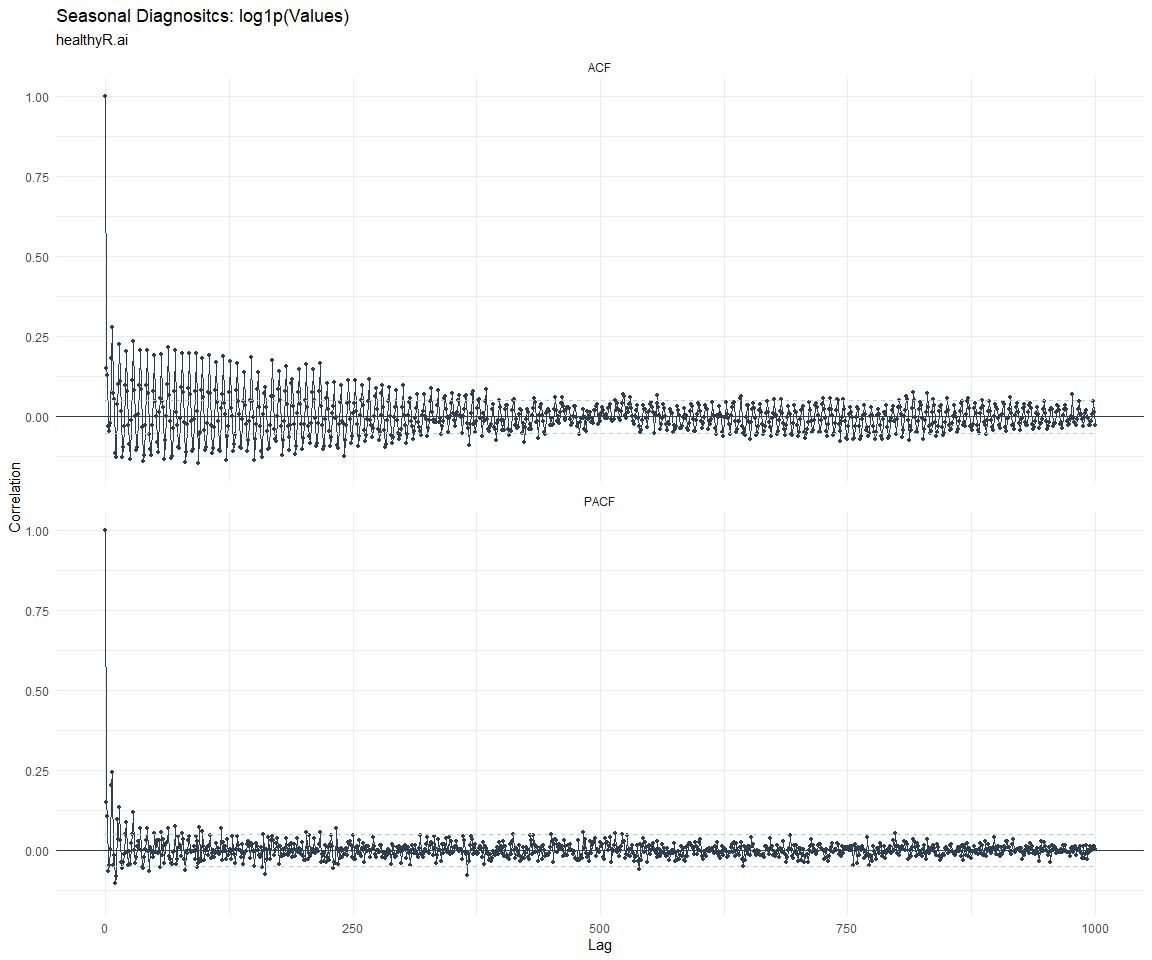

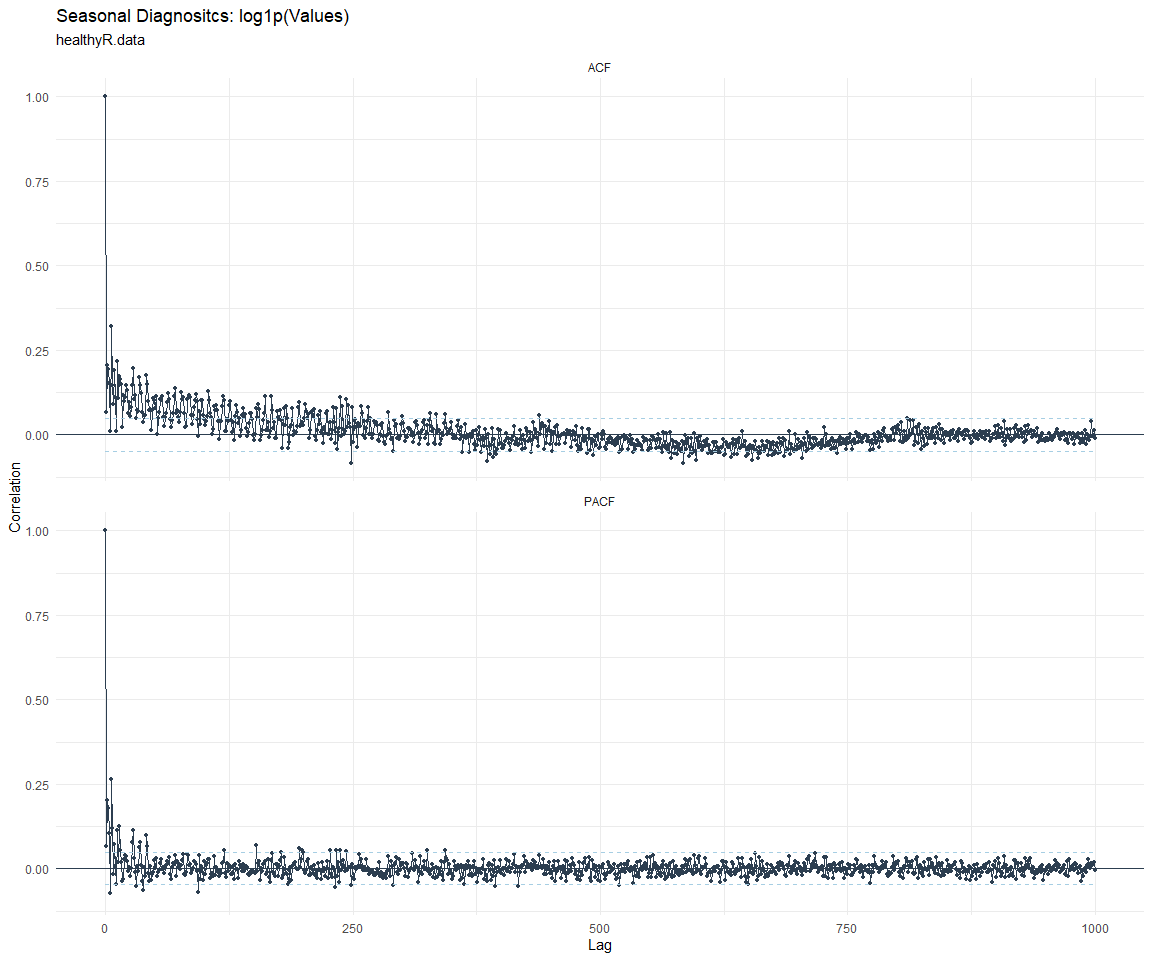

ACF and PACF Diagnostics:


Feature Engineering

Now that we have our basic data and a sense of what it looks like, let's add some features that can be very helpful in modeling.

Let's start by making a tibble that is aggregated by day and package, since we are interested in forecasting the next 4 weeks (28 days) for each package. First, let's get our base data.
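A minimal sketch of the daily aggregation, assuming dplyr and a toy download log; the names `toy_downloads` and `toy_base` are hypothetical, and the report's own code may aggregate differently (for example with a timetk helper). Each row of the log is one download, so counting rows per package and day gives the daily `value`.

```r
library(dplyr)

# Toy download log: one row per download
toy_downloads <- tibble(
  date    = as.Date(c("2020-11-23", "2020-11-23", "2020-11-23", "2020-11-24")),
  package = c("healthyR.data", "healthyR.data", "healthyR", "healthyR.data")
)

# One row per package and day; `value` is the number of downloads
toy_base <- toy_downloads |>
  count(package, date, name = "value") |>
  arrange(package, date)

# Forecast horizon used throughout the report: 4 weeks of daily data
horizon <- 4 * 7

toy_base
```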

Call:

stats::lm(formula = .formula, data = df)

Residuals:

Min 1Q Median 3Q Max

-149.94 -37.70 -11.54 27.99 826.97

Coefficients:

| Term | Estimate | Std. Error | t value | Pr(>\|t\|) | Signif. |
|---|---|---|---|---|---|
| `(Intercept)` | -1.671e+02 | 5.389e+01 | -3.100 | 0.001961 | `**` |
| `date` | 1.048e-02 | 2.851e-03 | 3.675 | 0.000244 | `***` |
| `lag(value, 1)` | 9.281e-02 | 2.257e-02 | 4.112 | 4.08e-05 | `***` |
| `lag(value, 7)` | 7.368e-02 | 2.321e-02 | 3.175 | 0.001523 | `**` |
| `lag(value, 14)` | 6.323e-02 | 2.329e-02 | 2.715 | 0.006690 | `**` |
| `lag(value, 21)` | 9.038e-02 | 2.338e-02 | 3.866 | 0.000115 | `***` |
| `lag(value, 28)` | 8.209e-02 | 2.326e-02 | 3.529 | 0.000427 | `***` |
| `lag(value, 35)` | 4.539e-02 | 2.331e-02 | 1.947 | 0.051652 | `.` |
| `lag(value, 42)` | 6.045e-02 | 2.346e-02 | 2.576 | 0.010060 | `*` |
| `lag(value, 49)` | 7.506e-02 | 2.339e-02 | 3.209 | 0.001353 | `**` |
| `month(date, label = TRUE).L` | -8.856e+00 | 4.747e+00 | -1.866 | 0.062265 | `.` |
| `month(date, label = TRUE).Q` | -6.873e-01 | 4.770e+00 | -0.144 | 0.885438 | |
| `month(date, label = TRUE).C` | -1.525e+01 | 4.767e+00 | -3.199 | 0.001403 | `**` |
| `month(date, label = TRUE)^4` | -8.043e+00 | 4.785e+00 | -1.681 | 0.092934 | `.` |
| `month(date, label = TRUE)^5` | -4.866e+00 | 4.784e+00 | -1.017 | 0.309216 | |
| `month(date, label = TRUE)^6` | -9.725e-01 | 4.808e+00 | -0.202 | 0.839714 | |
| `month(date, label = TRUE)^7` | -3.431e+00 | 4.755e+00 | -0.722 | 0.470674 | |
| `month(date, label = TRUE)^8` | -4.548e+00 | 4.744e+00 | -0.959 | 0.337887 | |
| `month(date, label = TRUE)^9` | 2.394e+00 | 4.781e+00 | 0.501 | 0.616554 | |
| `month(date, label = TRUE)^10` | 1.772e+00 | 4.852e+00 | 0.365 | 0.714918 | |
| `month(date, label = TRUE)^11` | -4.696e+00 | 4.876e+00 | -0.963 | 0.335666 | |
| `fourier_vec(date, type = "sin", K = 1, period = 7)` | -1.130e+01 | 2.148e+00 | -5.260 | 1.61e-07 | `***` |
| `fourier_vec(date, type = "cos", K = 1, period = 7)` | 7.389e+00 | 2.217e+00 | 3.334 | 0.000874 | `***` |

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 59.95 on 1889 degrees of freedom

(49 observations deleted due to missingness)

Multiple R-squared: 0.2123, Adjusted R-squared: 0.2031

F-statistic: 23.14 on 22 and 1889 DF, p-value: < 2.2e-16
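The row counts in this summary can be checked by hand. The longest lag is 49 days, so the first 49 rows have no complete set of predictors and are dropped, and the residual degrees of freedom are the remaining rows minus the 23 estimated coefficients. The sketch below reproduces that arithmetic and shows the same effect on simulated data with a smaller base R model. The helper `lag_k()` and the simulated series are assumptions for illustration, not the report's code.

```r
# Arithmetic from the report: 1961 daily rows, longest lag 49, 23 coefficients
report_rows <- 1961 - 49          # rows left after dropping incomplete lags
report_df   <- report_rows - 23   # residual degrees of freedom

# Toy reproduction with simulated daily counts
set.seed(123)
n  <- 200
df <- data.frame(
  date  = as.Date("2021-01-01") + seq_len(n) - 1,
  value = rpois(n, lambda = 50)
)

# Base R lag helper (hypothetical name): shift x down by k, pad with NA
lag_k <- function(x, k) c(rep(NA, k), head(x, -k))

fit <- lm(
  value ~ as.numeric(date) + lag_k(value, 1) + lag_k(value, 7) + lag_k(value, 49),
  data = df
)

# Rows lost to the longest lag (lm drops incomplete rows by default)
n_dropped <- n - nobs(fit)
c(dropped = n_dropped, df_residual = df.residual(fit))
```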

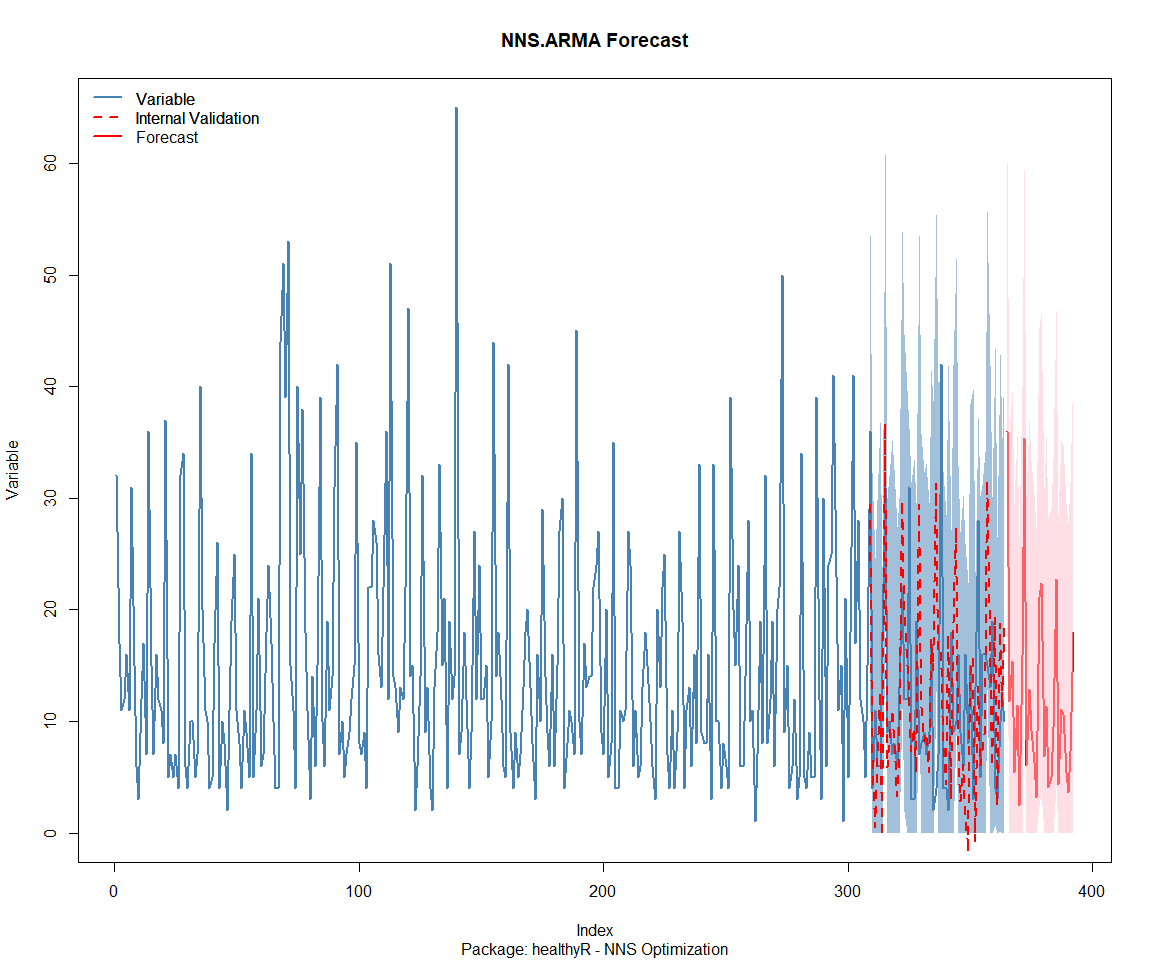

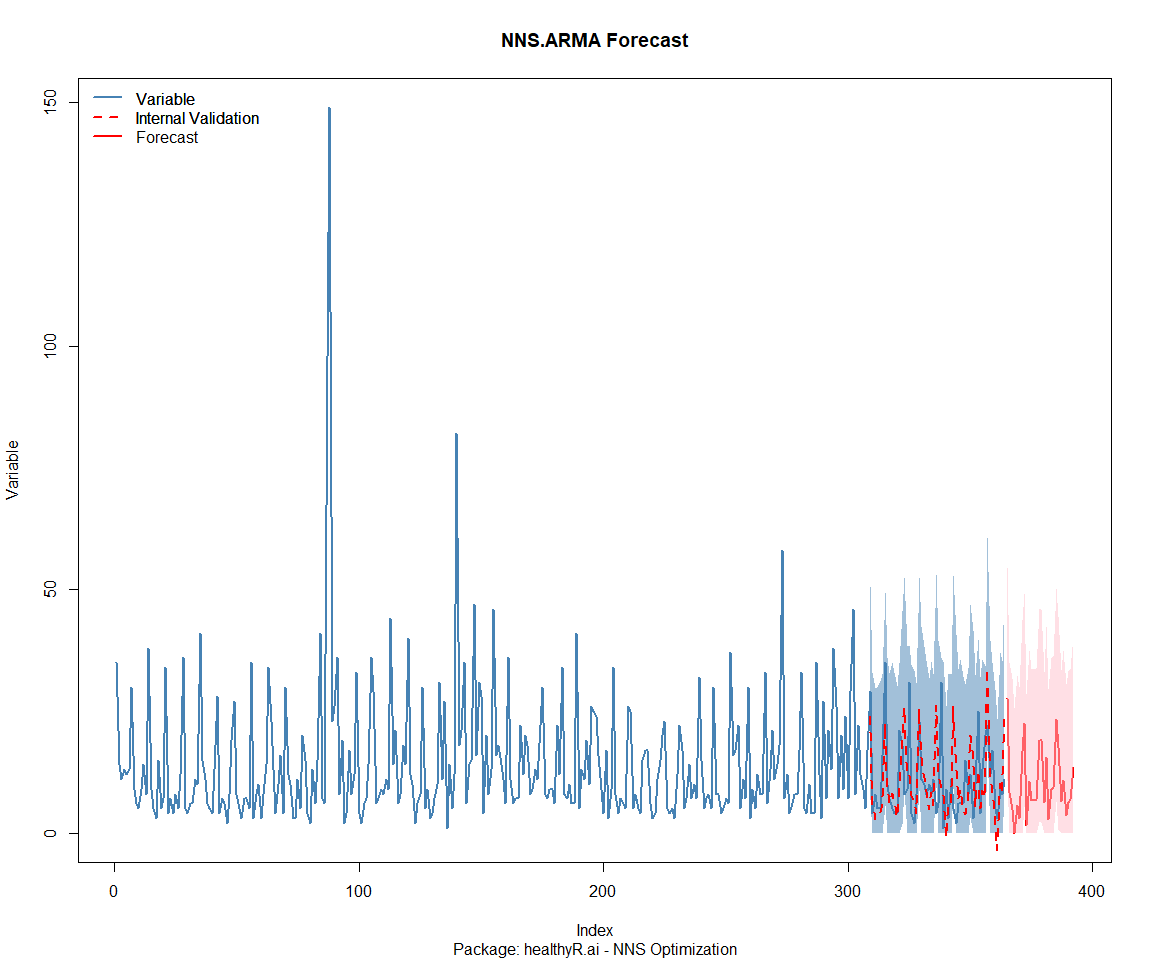

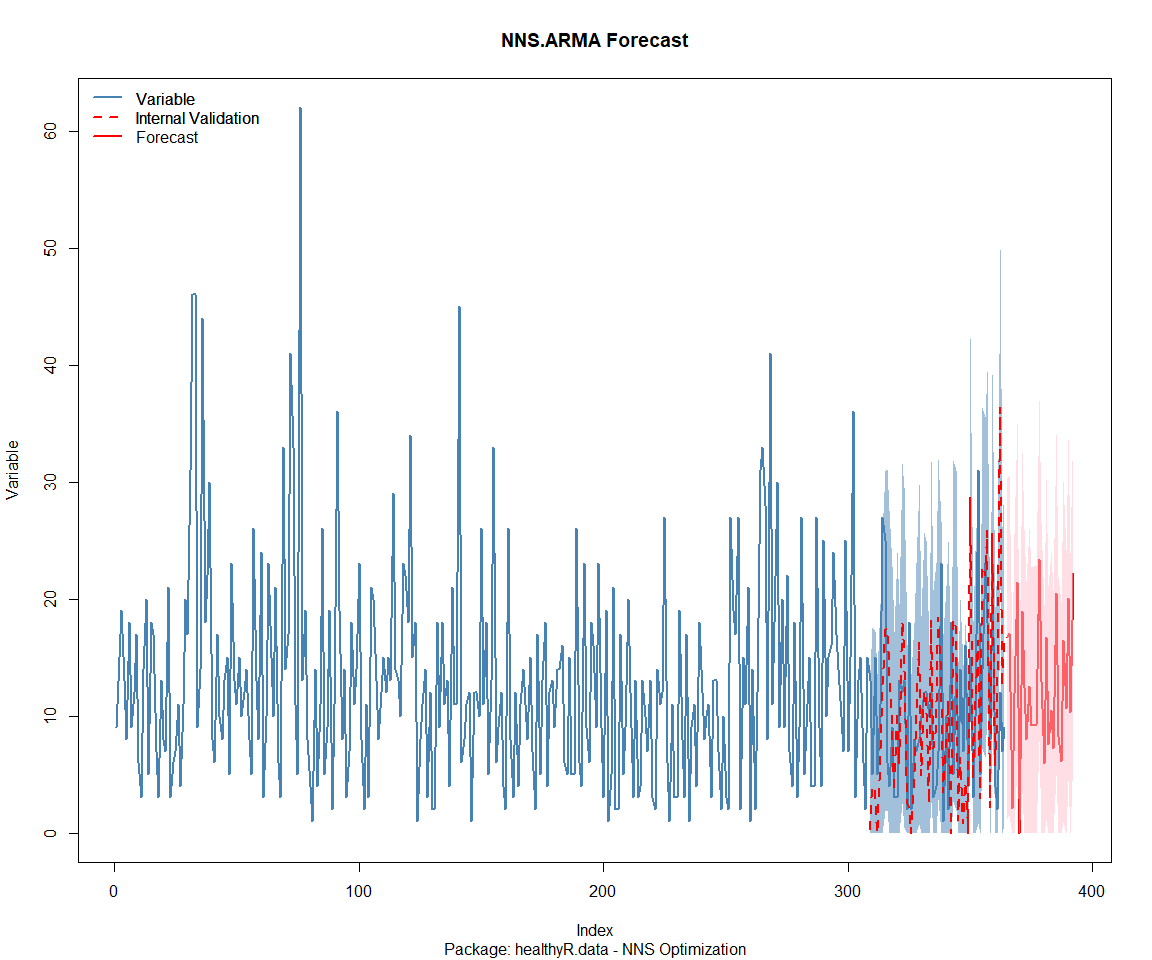

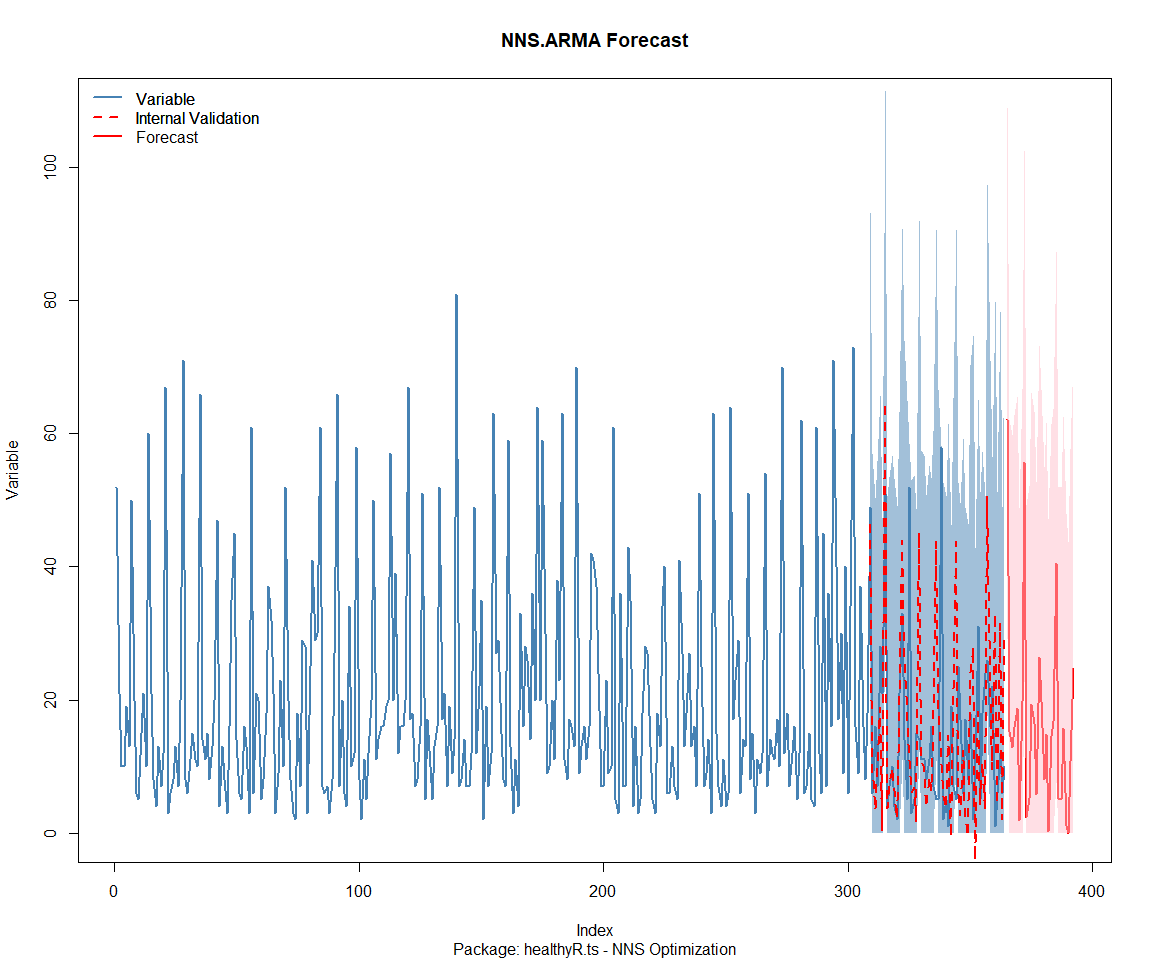

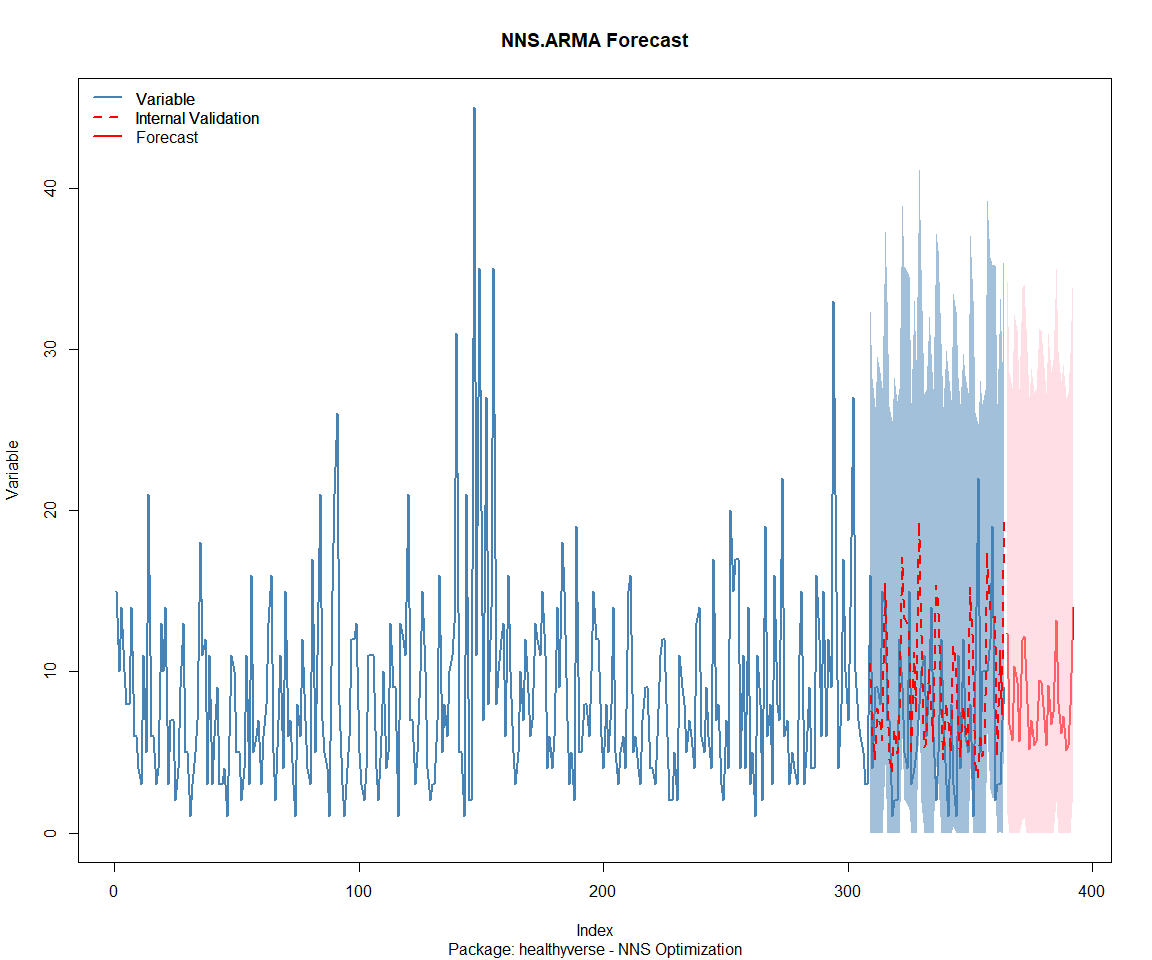

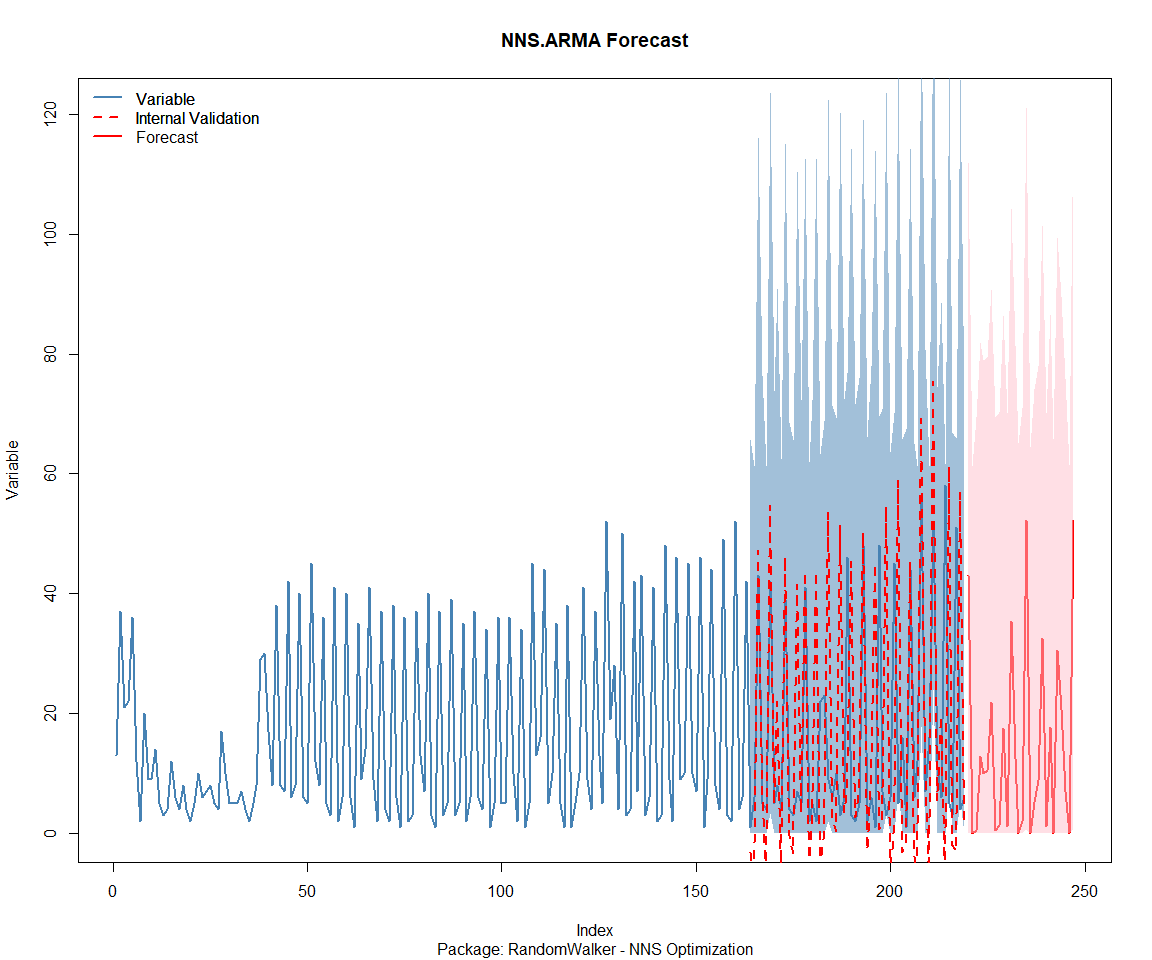

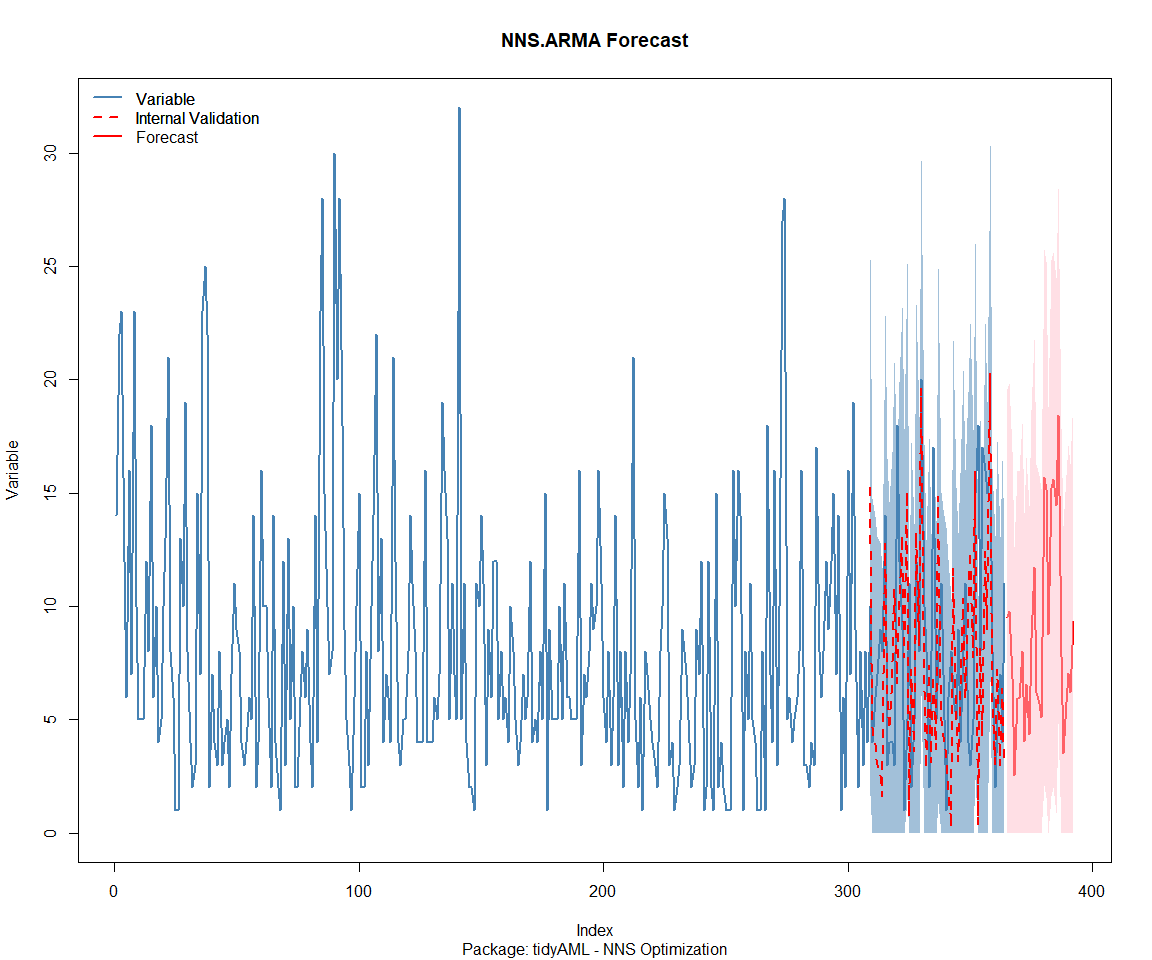

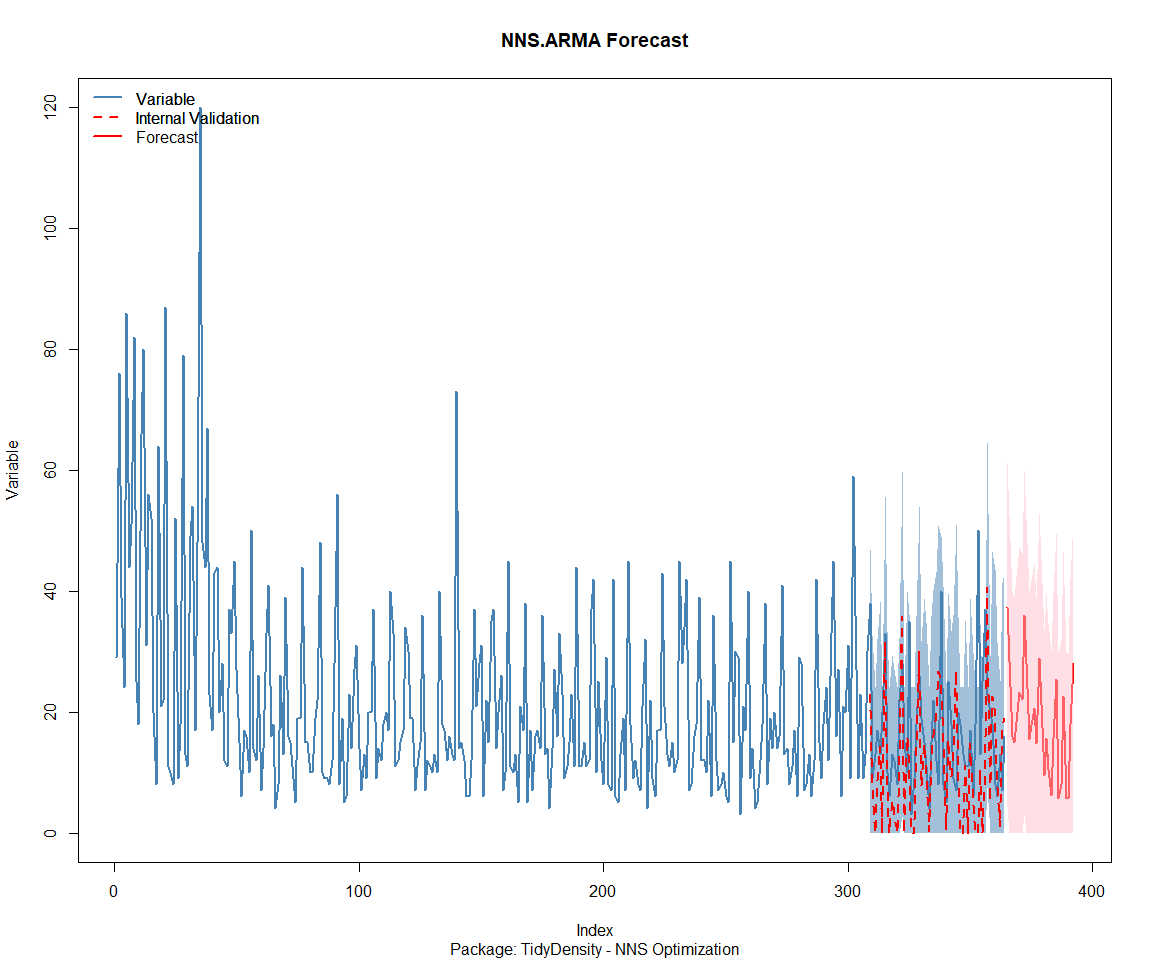

NNS Forecasting

This is something I have been wanting to try for a while. The NNS (Nonlinear Nonparametric Statistics) package offers a different approach to forecasting time series data.

library(NNS)

# One tibble per package, keeping only the package name and the daily value
data_list <- base_data |>
  select(package, value) |>
  group_split(package)

data_list |>
  imap(
    \(x, idx) {
      obj <- x
      # Use the most recent 52 weeks of daily values
      x <- obj |> pull(value) |> tail(7*52)
      train_set_size <- length(x) - 56
      pkg <- obj |> pluck(1) |> unique()

      # sf <- NNS.seas(x, modulo = 7, plot = FALSE)$periods
      # Score each candidate seasonal period (1 to 25) by RMSE against the last 28 values
      seas <- t(
        sapply(
          1:25,
          function(i) c(
            i,
            sqrt(
              mean((
                NNS.ARMA(x,
                  h = 28,
                  training.set = train_set_size,
                  method = "lin",
                  seasonal.factor = i,
                  plot = FALSE
                ) - tail(x, 28)) ^ 2)))
        )
      )
      colnames(seas) <- c("Period", "RMSE")
      # Keep the period with the lowest RMSE
      sf <- seas[which.min(seas[, 2]), 1]

      cat(paste0("Package: ", pkg, "\n"))

      # Optimize the NNS.ARMA forecast using the selected seasonal period
      NNS.ARMA.optim(
        variable = x,
        h = 28,
        training.set = train_set_size,
        #seasonal.factor = seq(12, 60, 7),
        seasonal.factor = sf,
        pred.int = 0.95,
        plot = TRUE
      )

      title(
        sub = paste0("\n",
                     "Package: ", pkg, " - NNS Optimization")
      )
    }
  )

Package: healthyR

[1] "CURRNET METHOD: lin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'lin' , seasonal.factor = c( 18 ) ...)"

[1] "CURRENT lin OBJECTIVE FUNCTION = 43.7759021293342"

[1] "BEST method = 'lin' PATH MEMBER = c( 18 )"

[1] "BEST lin OBJECTIVE FUNCTION = 43.7759021293342"

[1] "CURRNET METHOD: nonlin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'nonlin' , seasonal.factor = c( 18 ) ...)"

[1] "CURRENT nonlin OBJECTIVE FUNCTION = 12.5244771716494"

[1] "BEST method = 'nonlin' PATH MEMBER = c( 18 )"

[1] "BEST nonlin OBJECTIVE FUNCTION = 12.5244771716494"

[1] "CURRNET METHOD: both"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'both' , seasonal.factor = c( 18 ) ...)"

[1] "CURRENT both OBJECTIVE FUNCTION = 21.3201679978738"

[1] "BEST method = 'both' PATH MEMBER = c( 18 )"

[1] "BEST both OBJECTIVE FUNCTION = 21.3201679978738"

Package: healthyR.ai

[1] "CURRNET METHOD: lin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'lin' , seasonal.factor = c( 13 ) ...)"

[1] "CURRENT lin OBJECTIVE FUNCTION = 25.3074344632045"

[1] "BEST method = 'lin' PATH MEMBER = c( 13 )"

[1] "BEST lin OBJECTIVE FUNCTION = 25.3074344632045"

[1] "CURRNET METHOD: nonlin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'nonlin' , seasonal.factor = c( 13 ) ...)"

[1] "CURRENT nonlin OBJECTIVE FUNCTION = 12.1353235986173"

[1] "BEST method = 'nonlin' PATH MEMBER = c( 13 )"

[1] "BEST nonlin OBJECTIVE FUNCTION = 12.1353235986173"

[1] "CURRNET METHOD: both"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'both' , seasonal.factor = c( 13 ) ...)"

[1] "CURRENT both OBJECTIVE FUNCTION = 18.9168265936723"

[1] "BEST method = 'both' PATH MEMBER = c( 13 )"

[1] "BEST both OBJECTIVE FUNCTION = 18.9168265936723"

Package: healthyR.data

[1] "CURRNET METHOD: lin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'lin' , seasonal.factor = c( 13 ) ...)"

[1] "CURRENT lin OBJECTIVE FUNCTION = 11.7751731852104"

[1] "BEST method = 'lin' PATH MEMBER = c( 13 )"

[1] "BEST lin OBJECTIVE FUNCTION = 11.7751731852104"

[1] "CURRNET METHOD: nonlin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'nonlin' , seasonal.factor = c( 13 ) ...)"

[1] "CURRENT nonlin OBJECTIVE FUNCTION = 24.7484895504385"

[1] "BEST method = 'nonlin' PATH MEMBER = c( 13 )"

[1] "BEST nonlin OBJECTIVE FUNCTION = 24.7484895504385"

[1] "CURRNET METHOD: both"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'both' , seasonal.factor = c( 13 ) ...)"

[1] "CURRENT both OBJECTIVE FUNCTION = 20.2915858323777"

[1] "BEST method = 'both' PATH MEMBER = c( 13 )"

[1] "BEST both OBJECTIVE FUNCTION = 20.2915858323777"

Package: healthyR.ts

[1] "CURRNET METHOD: lin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'lin' , seasonal.factor = c( 19 ) ...)"

[1] "CURRENT lin OBJECTIVE FUNCTION = 21.6259422713186"

[1] "BEST method = 'lin' PATH MEMBER = c( 19 )"

[1] "BEST lin OBJECTIVE FUNCTION = 21.6259422713186"

[1] "CURRNET METHOD: nonlin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'nonlin' , seasonal.factor = c( 19 ) ...)"

[1] "CURRENT nonlin OBJECTIVE FUNCTION = 6.6816473766716"

[1] "BEST method = 'nonlin' PATH MEMBER = c( 19 )"

[1] "BEST nonlin OBJECTIVE FUNCTION = 6.6816473766716"

[1] "CURRNET METHOD: both"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'both' , seasonal.factor = c( 19 ) ...)"

[1] "CURRENT both OBJECTIVE FUNCTION = 7.79418264719735"

[1] "BEST method = 'both' PATH MEMBER = c( 19 )"

[1] "BEST both OBJECTIVE FUNCTION = 7.79418264719735"

Package: healthyverse

[1] "CURRNET METHOD: lin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'lin' , seasonal.factor = c( 1 ) ...)"

[1] "CURRENT lin OBJECTIVE FUNCTION = 240.105590229643"

[1] "BEST method = 'lin' PATH MEMBER = c( 1 )"

[1] "BEST lin OBJECTIVE FUNCTION = 240.105590229643"

[1] "CURRNET METHOD: nonlin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'nonlin' , seasonal.factor = c( 1 ) ...)"

[1] "CURRENT nonlin OBJECTIVE FUNCTION = 107.101196807476"

[1] "BEST method = 'nonlin' PATH MEMBER = c( 1 )"

[1] "BEST nonlin OBJECTIVE FUNCTION = 107.101196807476"

[1] "CURRNET METHOD: both"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'both' , seasonal.factor = c( 1 ) ...)"

[1] "CURRENT both OBJECTIVE FUNCTION = 71.3080683899981"

[1] "BEST method = 'both' PATH MEMBER = c( 1 )"

[1] "BEST both OBJECTIVE FUNCTION = 71.3080683899981"

Package: RandomWalker

[1] "CURRNET METHOD: lin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'lin' , seasonal.factor = c( 23 ) ...)"

[1] "CURRENT lin OBJECTIVE FUNCTION = 6.73515809513789"

[1] "BEST method = 'lin' PATH MEMBER = c( 23 )"

[1] "BEST lin OBJECTIVE FUNCTION = 6.73515809513789"

[1] "CURRNET METHOD: nonlin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'nonlin' , seasonal.factor = c( 23 ) ...)"

[1] "CURRENT nonlin OBJECTIVE FUNCTION = 3.95744543978893"

[1] "BEST method = 'nonlin' PATH MEMBER = c( 23 )"

[1] "BEST nonlin OBJECTIVE FUNCTION = 3.95744543978893"

[1] "CURRNET METHOD: both"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'both' , seasonal.factor = c( 23 ) ...)"

[1] "CURRENT both OBJECTIVE FUNCTION = 4.46821865730444"

[1] "BEST method = 'both' PATH MEMBER = c( 23 )"

[1] "BEST both OBJECTIVE FUNCTION = 4.46821865730444"

Package: tidyAML

[1] "CURRNET METHOD: lin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'lin' , seasonal.factor = c( 14 ) ...)"

[1] "CURRENT lin OBJECTIVE FUNCTION = 9.80074156181386"

[1] "BEST method = 'lin' PATH MEMBER = c( 14 )"

[1] "BEST lin OBJECTIVE FUNCTION = 9.80074156181386"

[1] "CURRNET METHOD: nonlin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'nonlin' , seasonal.factor = c( 14 ) ...)"

[1] "CURRENT nonlin OBJECTIVE FUNCTION = 16.5093971478679"

[1] "BEST method = 'nonlin' PATH MEMBER = c( 14 )"

[1] "BEST nonlin OBJECTIVE FUNCTION = 16.5093971478679"

[1] "CURRNET METHOD: both"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'both' , seasonal.factor = c( 14 ) ...)"

[1] "CURRENT both OBJECTIVE FUNCTION = 16.7614237997592"

[1] "BEST method = 'both' PATH MEMBER = c( 14 )"

[1] "BEST both OBJECTIVE FUNCTION = 16.7614237997592"

Package: TidyDensity

[1] "CURRNET METHOD: lin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'lin' , seasonal.factor = c( 20 ) ...)"

[1] "CURRENT lin OBJECTIVE FUNCTION = 5.74590043484087"

[1] "BEST method = 'lin' PATH MEMBER = c( 20 )"

[1] "BEST lin OBJECTIVE FUNCTION = 5.74590043484087"

[1] "CURRNET METHOD: nonlin"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'nonlin' , seasonal.factor = c( 20 ) ...)"

[1] "CURRENT nonlin OBJECTIVE FUNCTION = 4.04021881313532"

[1] "BEST method = 'nonlin' PATH MEMBER = c( 20 )"

[1] "BEST nonlin OBJECTIVE FUNCTION = 4.04021881313532"

[1] "CURRNET METHOD: both"

[1] "COPY LATEST PARAMETERS DIRECTLY FOR NNS.ARMA() IF ERROR:"

[1] "NNS.ARMA(... method = 'both' , seasonal.factor = c( 20 ) ...)"

[1] "CURRENT both OBJECTIVE FUNCTION = 4.14988107785406"

[1] "BEST method = 'both' PATH MEMBER = c( 20 )"

[1] "BEST both OBJECTIVE FUNCTION = 4.14988107785406"

[[1]]

NULL

[[2]]

NULL

[[3]]

NULL

[[4]]

NULL

[[5]]

NULL

[[6]]

NULL

[[7]]

NULL

[[8]]

NULL
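The seasonal-period search in the code above can be illustrated without NNS. The sketch below swaps in a seasonal-naive forecast (repeat the last `period` training values) as a stand-in for `NNS.ARMA()`, holds out the final 28 observations, and keeps the candidate period with the lowest RMSE. The simulated weekly series and the helpers `snaive()` and `rmse()` are assumptions for illustration only.

```r
set.seed(42)
n <- 7 * 52
# Simulated daily series with a clear weekly cycle plus noise
x <- 20 + 10 * sin(2 * pi * seq_len(n) / 7) + rnorm(n, sd = 1)

h     <- 28
train <- head(x, n - h)   # everything except the holdout
test  <- tail(x, h)       # final 28 observations

# Seasonal-naive forecast (stand-in for NNS.ARMA): repeat the last cycle
snaive <- function(train, h, period) {
  rep(tail(train, period), length.out = h)
}

rmse <- function(actual, pred) sqrt(mean((actual - pred)^2))

# Score candidate periods 1 to 25 on the holdout
seas <- t(sapply(1:25, function(p) {
  c(Period = p, RMSE = rmse(test, snaive(train, h, p)))
}))

# Period with the lowest holdout RMSE
sf <- seas[which.min(seas[, "RMSE"]), "Period"]
sf
```

Because the simulated cycle is weekly, the selected period is a multiple of 7; with real download counts the winning period is less predictable, as the per-package results above show.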

Pre-Processing

Now we are going to do some basic pre-processing.

data_padded_tbl <- base_data %>%

pad_by_time(

.date_var = date,

.pad_value = 0

)

# Get log interval and standardization parameters

log_params <- liv(data_padded_tbl$value, limit_lower = 0, offset = 1, silent = TRUE)

limit_lower <- log_params$limit_lower

limit_upper <- log_params$limit_upper

offset <- log_params$offset

data_liv_tbl <- data_padded_tbl %>%

# Get log interval transform

mutate(value_trans = liv(value, limit_lower = 0, offset = 1, silent = TRUE)$log_scaled)

# Get Standardization Params

std_params <- standard_vec(data_liv_tbl$value_trans, silent = TRUE)

std_mean <- std_params$mean

std_sd <- std_params$sd

data_transformed_tbl <- data_liv_tbl %>%

group_by(package) %>%

# get standardization

mutate(value_trans = standard_vec(value_trans, silent = TRUE)$standard_scaled) %>%

tk_augment_fourier(

.date_var = date,

.periods = c(7, 14, 30, 90, 180),

.K = 2

) %>%

tk_augment_timeseries_signature(

.date_var = date

) %>%

ungroup() %>%

select(-c(value, -year.iso))
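To make the log-interval step concrete, here is a base R sketch of the transform and its inverse. The helper names `log_interval()` and `log_interval_inv()` are hypothetical; they follow the formula documented for `timetk::log_interval_vec()` as I understand it, and the `liv()` used in the report may choose its upper limit differently. The offset of 1 keeps zero-download days (introduced by padding) inside the interval.

```r
# Forward transform: maps values in (lower, upper) onto the whole real line
log_interval <- function(x, lower, upper, offset = 0) {
  log(((x + offset) - lower) / (upper - (x + offset)))
}

# Inverse transform: maps back to the original scale
log_interval_inv <- function(z, lower, upper, offset = 0) {
  (upper - lower) * exp(z) / (1 + exp(z)) + lower - offset
}

value <- c(0, 3, 12, 40, 7)     # toy daily counts, including a padded zero
lower <- 0
upper <- max(value + 1) * 1.1   # assumed upper limit, slightly above the data

z    <- log_interval(value, lower, upper, offset = 1)
back <- log_interval_inv(z, lower, upper, offset = 1)

all.equal(back, value)
```

Forecasts made on the transformed scale and then inverted this way stay between the lower and upper limits, which is the reason for using the transform on count data.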

Since this is panel data, we can follow one of two modeling strategies: search for a global model across the whole panel, or use nested forecasting to find the best model for each time series. Since we only have 8 panels, we will use nested forecasting.

To do this, we will use the nest_timeseries and split_nested_timeseries functions to create a nested tibble.

horizon <- 4*7

nested_data_tbl <- data_transformed_tbl %>%

# 0. Filter out column where package is NA

filter(!is.na(package)) %>%

# 1. Extending: We'll predict n days into the future.

extend_timeseries(

.id_var = package,

.date_var = date,

.length_future = horizon

) %>%

# 2. Nesting: We'll group by id, and create a future dataset

# that forecasts n days of extended data and

# an actual dataset that contains the full history

nest_timeseries(

.id_var = package,

.length_future = horizon

#.length_actual = horizon*2

) %>%

# 3. Splitting: We'll take the actual data and create splits

# for accuracy and confidence interval estimation of n days (test)

# and the rest is training data

split_nested_timeseries(

.length_test = horizon

)

nested_data_tbl

# A tibble: 8 × 4

package .actual_data .future_data .splits

<fct> <list> <list> <list>

1 healthyR.data <tibble [1,950 × 50]> <tibble [28 × 50]> <split [1922|28]>

2 healthyR <tibble [1,944 × 50]> <tibble [28 × 50]> <split [1916|28]>

3 healthyR.ts <tibble [1,880 × 50]> <tibble [28 × 50]> <split [1852|28]>

4 healthyverse <tibble [1,823 × 50]> <tibble [28 × 50]> <split [1795|28]>

5 healthyR.ai <tibble [1,686 × 50]> <tibble [28 × 50]> <split [1658|28]>

6 TidyDensity <tibble [1,537 × 50]> <tibble [28 × 50]> <split [1509|28]>

7 tidyAML <tibble [1,143 × 50]> <tibble [28 × 50]> <split [1115|28]>

8 RandomWalker <tibble [567 × 50]> <tibble [28 × 50]> <split [539|28]>
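The split sizes in the `.splits` column follow directly from the horizon: the last 28 rows of each series are held out for testing and the rest are used for training. A small base R sketch of that arithmetic, using the row counts printed above and a toy series (the `toy` data frame is illustrative only):

```r
horizon <- 4 * 7

# Rows of actual data per package, as printed in the nested tibble
actual_rows <- c(
  healthyR.data = 1950, healthyR = 1944, healthyR.ts = 1880,
  healthyverse = 1823, healthyR.ai = 1686, TidyDensity = 1537,
  tidyAML = 1143, RandomWalker = 567
)

# Training rows are whatever is left after holding out the horizon
train_rows <- actual_rows - horizon
train_rows

# The same split on a toy daily series: the test set is always the most recent block
toy   <- data.frame(date = as.Date("2026-01-01") + 0:99, value = seq_len(100))
train <- head(toy, nrow(toy) - horizon)
test  <- tail(toy, horizon)
```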

Now it is time to make some recipes and models using the modeltime workflow.

Modeltime Workflow

Recipe Object

recipe_base <- recipe(

value_trans ~ .

, data = extract_nested_test_split(nested_data_tbl)

)

recipe_base

recipe_date <- recipe(

value_trans ~ date

, data = extract_nested_test_split(nested_data_tbl)

)

Models

# Models ------------------------------------------------------------------

# Auto ARIMA --------------------------------------------------------------

model_spec_arima_no_boost <- arima_reg() %>%

set_engine(engine = "auto_arima")

wflw_auto_arima <- workflow() %>%

add_recipe(recipe = recipe_date) %>%

add_model(model_spec_arima_no_boost)

# NNETAR ------------------------------------------------------------------

model_spec_nnetar <- nnetar_reg(

mode = "regression"

, seasonal_period = "auto"

) %>%

set_engine("nnetar")

wflw_nnetar <- workflow() %>%

add_recipe(recipe = recipe_base) %>%

add_model(model_spec_nnetar)

# TSLM --------------------------------------------------------------------

model_spec_lm <- linear_reg() %>%

set_engine("lm")

wflw_lm <- workflow() %>%

add_recipe(recipe = recipe_base) %>%

add_model(model_spec_lm)

# MARS --------------------------------------------------------------------

model_spec_mars <- mars(mode = "regression") %>%

set_engine("earth")

wflw_mars <- workflow() %>%

add_recipe(recipe = recipe_date) %>%

add_model(model_spec_mars)

Nested Modeltime Tables

nested_modeltime_tbl <- modeltime_nested_fit(

# Nested Data

nested_data = nested_data_tbl,

control = control_nested_fit(

verbose = TRUE,

allow_par = FALSE

),

# Add workflows

wflw_auto_arima,

wflw_lm,

wflw_mars,

wflw_nnetar

)

nested_modeltime_tbl <- nested_modeltime_tbl[!is.na(nested_modeltime_tbl$package),]

Model Accuracy

nested_modeltime_tbl %>%

extract_nested_test_accuracy() %>%

filter(!is.na(package)) %>%

knitr::kable()

| package | .model_id | .model_desc | .type | mae | mape | mase | smape | rmse | rsq |

|---|---|---|---|---|---|---|---|---|---|

| healthyR.data | 1 | ARIMA | Test | 0.6575123 | 94.08070 | 0.7053621 | 138.49190 | 0.8553255 | 0.0007853 |

| healthyR.data | 2 | LM | Test | 0.6535150 | 131.03018 | 0.7010739 | 124.85307 | 0.8784872 | 0.0301008 |

| healthyR.data | 3 | EARTH | Test | 0.6867081 | 124.28015 | 0.7366825 | 144.15972 | 0.8460786 | 0.0306081 |

| healthyR.data | 4 | NNAR | Test | 0.7169396 | 154.97014 | 0.7691141 | 139.42866 | 0.9420807 | 0.0101227 |

| healthyR | 1 | ARIMA | Test | 0.6219278 | 2108.98610 | 0.8674012 | 126.45545 | 0.8923556 | 0.0162989 |

| healthyR | 2 | LM | Test | 0.5625909 | 1026.16217 | 0.7846442 | 130.38422 | 0.8127318 | 0.1039732 |

| healthyR | 3 | EARTH | Test | 1.3487305 | 8021.45949 | 1.8810711 | 133.80371 | 1.5744257 | 0.0048646 |

| healthyR | 4 | NNAR | Test | 0.5335600 | 1045.94817 | 0.7441549 | 117.65690 | 0.8231870 | 0.0735856 |

| healthyR.ts | 1 | ARIMA | Test | 0.5178392 | 273.01074 | 0.6125805 | 150.04195 | 0.7317030 | 0.0295343 |

| healthyR.ts | 2 | LM | Test | 0.6049721 | 468.38313 | 0.7156549 | 150.47834 | 0.7873840 | 0.0114605 |

| healthyR.ts | 3 | EARTH | Test | 0.5869034 | 338.54863 | 0.6942803 | 125.68642 | 0.8323433 | 0.0028269 |

| healthyR.ts | 4 | NNAR | Test | 0.6331446 | 286.03678 | 0.7489816 | 147.77595 | 0.8201067 | 0.0042800 |

| healthyverse | 1 | ARIMA | Test | 0.6020614 | 44.04730 | 0.9632265 | 49.38305 | 0.6912789 | 0.0026396 |

| healthyverse | 2 | LM | Test | 1.2862057 | 97.37310 | 2.0577757 | 153.06824 | 1.4007990 | 0.0936567 |

| healthyverse | 3 | EARTH | Test | 0.5533405 | 65.78699 | 0.8852788 | 39.92553 | 0.6703991 | 0.2018262 |

| healthyverse | 4 | NNAR | Test | 0.9824396 | 68.32724 | 1.5717862 | 111.84305 | 1.1415808 | 0.0056173 |

| healthyR.ai | 1 | ARIMA | Test | 0.4058741 | 171.08786 | 0.6653845 | 109.29722 | 0.6604525 | 0.0003116 |

| healthyR.ai | 2 | LM | Test | 0.4606003 | 211.87159 | 0.7551019 | 115.11247 | 0.6803803 | 0.0572092 |

| healthyR.ai | 3 | EARTH | Test | 0.4643847 | 125.41263 | 0.7613060 | 175.19277 | 0.6860255 | 0.0570944 |

| healthyR.ai | 4 | NNAR | Test | 0.4594029 | 222.35772 | 0.7531390 | 115.47025 | 0.7102191 | 0.0110203 |

| TidyDensity | 1 | ARIMA | Test | 1.1273739 | 139.53284 | 0.6986790 | 177.71987 | 1.2430442 | 0.1002272 |

| TidyDensity | 2 | LM | Test | 1.1423175 | 236.10189 | 0.7079401 | 152.04650 | 1.2255166 | 0.0408278 |

| TidyDensity | 3 | EARTH | Test | 1.1537384 | 200.94594 | 0.7150181 | 151.12541 | 1.2386705 | 0.0399884 |

| TidyDensity | 4 | NNAR | Test | 1.0815595 | 148.34266 | 0.6702860 | 162.02037 | 1.1819482 | 0.0482940 |

| tidyAML | 1 | ARIMA | Test | 0.7986067 | 218.53230 | 0.7543973 | 141.64324 | 1.0932317 | 0.1581029 |

| tidyAML | 2 | LM | Test | 0.7476940 | 297.44030 | 0.7063031 | 142.23695 | 0.9656292 | 0.1712060 |

| tidyAML | 3 | EARTH | Test | 0.7907601 | 137.49464 | 0.7469851 | 179.50805 | 1.0834875 | 0.0593094 |

| tidyAML | 4 | NNAR | Test | 0.7492075 | 268.10361 | 0.7077328 | 150.13379 | 0.9756032 | 0.1268103 |

| RandomWalker | 1 | ARIMA | Test | 0.6876561 | 90.11233 | 0.4566961 | 106.08473 | 0.8589581 | 0.3678785 |

| RandomWalker | 2 | LM | Test | 0.9471492 | 101.08311 | 0.6290344 | 151.48737 | 1.1388594 | 0.0000710 |

| RandomWalker | 3 | EARTH | Test | 0.9274246 | 93.87521 | 0.6159346 | 165.75490 | 1.1061269 | 0.0000017 |

| RandomWalker | 4 | NNAR | Test | 0.8964585 | 101.10110 | 0.5953690 | 138.90448 | 1.0864511 | 0.0273645 |
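For readers less familiar with these metrics, here is a base R sketch of how several of them are commonly defined, on made-up actual and predicted values. The vectors are illustrative, and the formulas are the usual textbook definitions rather than a copy of the modeltime internals. The last two lines show why MAPE can reach the thousands on a standardized target: a single actual value close to zero dominates the average.

```r
actual <- c(1.2, -0.4, 0.3, 0.9, -1.1)
pred   <- c(0.8, -0.1, 0.5, 0.4, -0.6)

mae   <- mean(abs(actual - pred))
rmse  <- sqrt(mean((actual - pred)^2))
mape  <- mean(abs((actual - pred) / actual)) * 100
smape <- mean(abs(actual - pred) / ((abs(actual) + abs(pred)) / 2)) * 100
rsq   <- cor(actual, pred)^2

round(c(mae = mae, rmse = rmse, mape = mape, smape = smape, rsq = rsq), 3)

# Same predictions, but one actual value is almost zero
actual_near_zero <- replace(actual, 3, 0.001)
mape_near_zero   <- mean(abs((actual_near_zero - pred) / actual_near_zero)) * 100
mape_near_zero
```

Since `value_trans` is standardized and therefore centered near zero, MAE, RMSE and MASE are likely the more reliable columns to compare in the table above.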

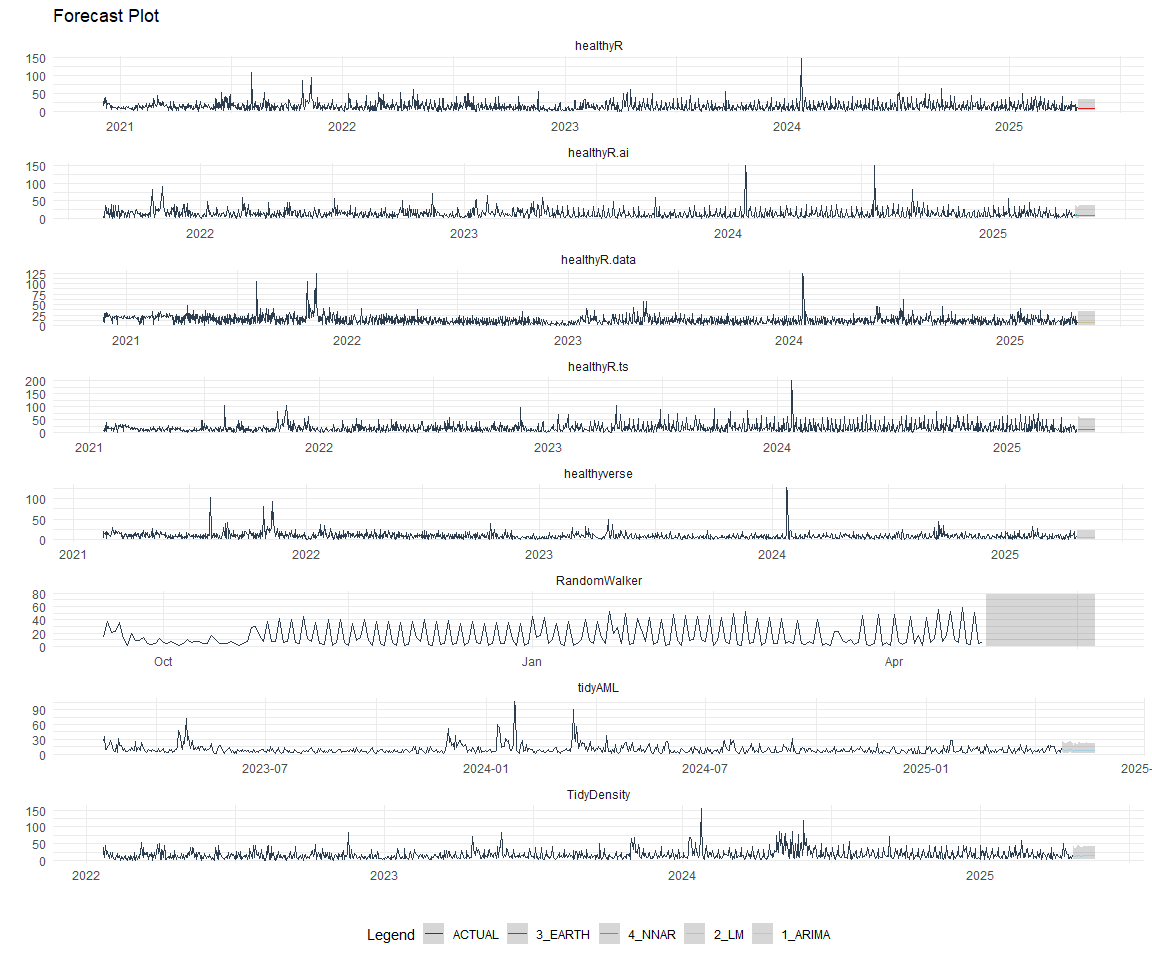

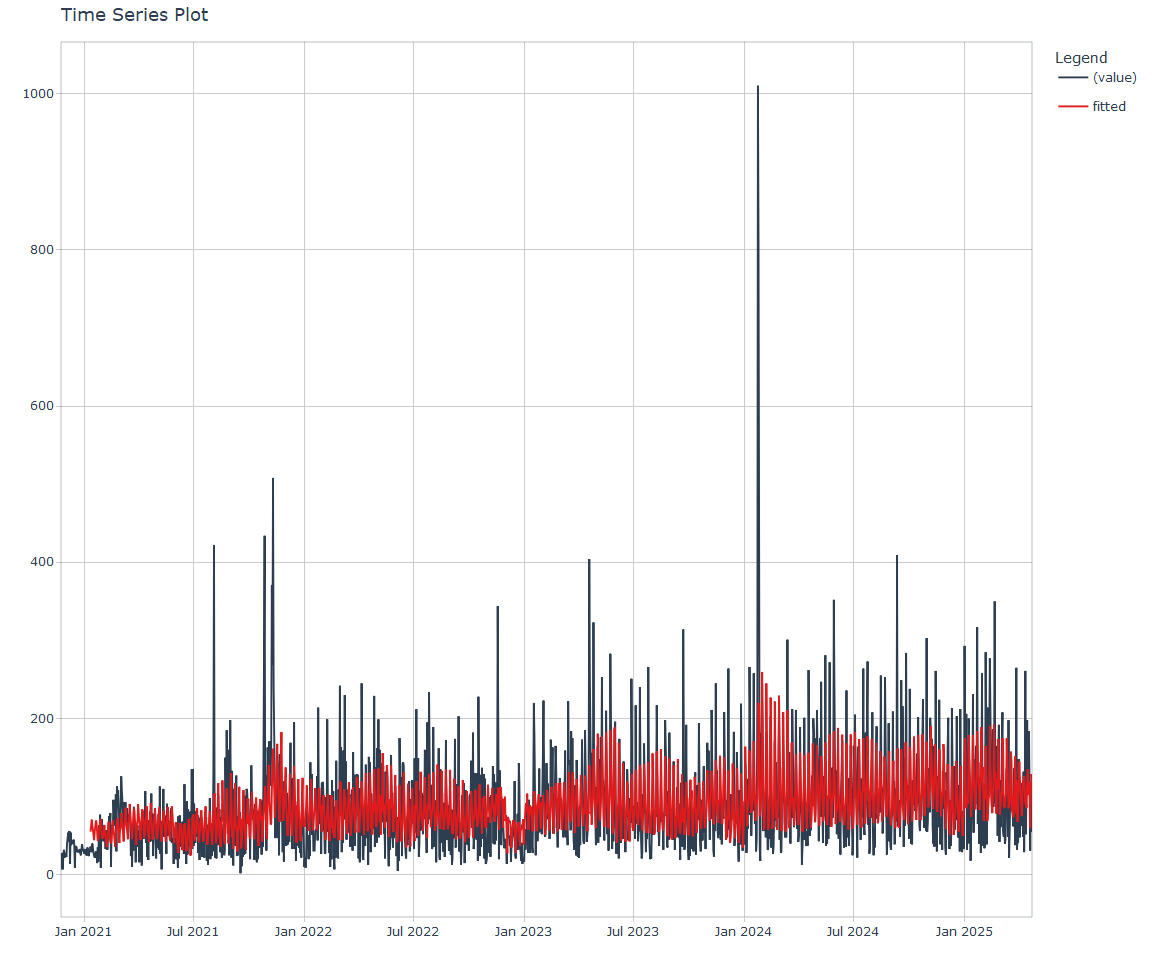

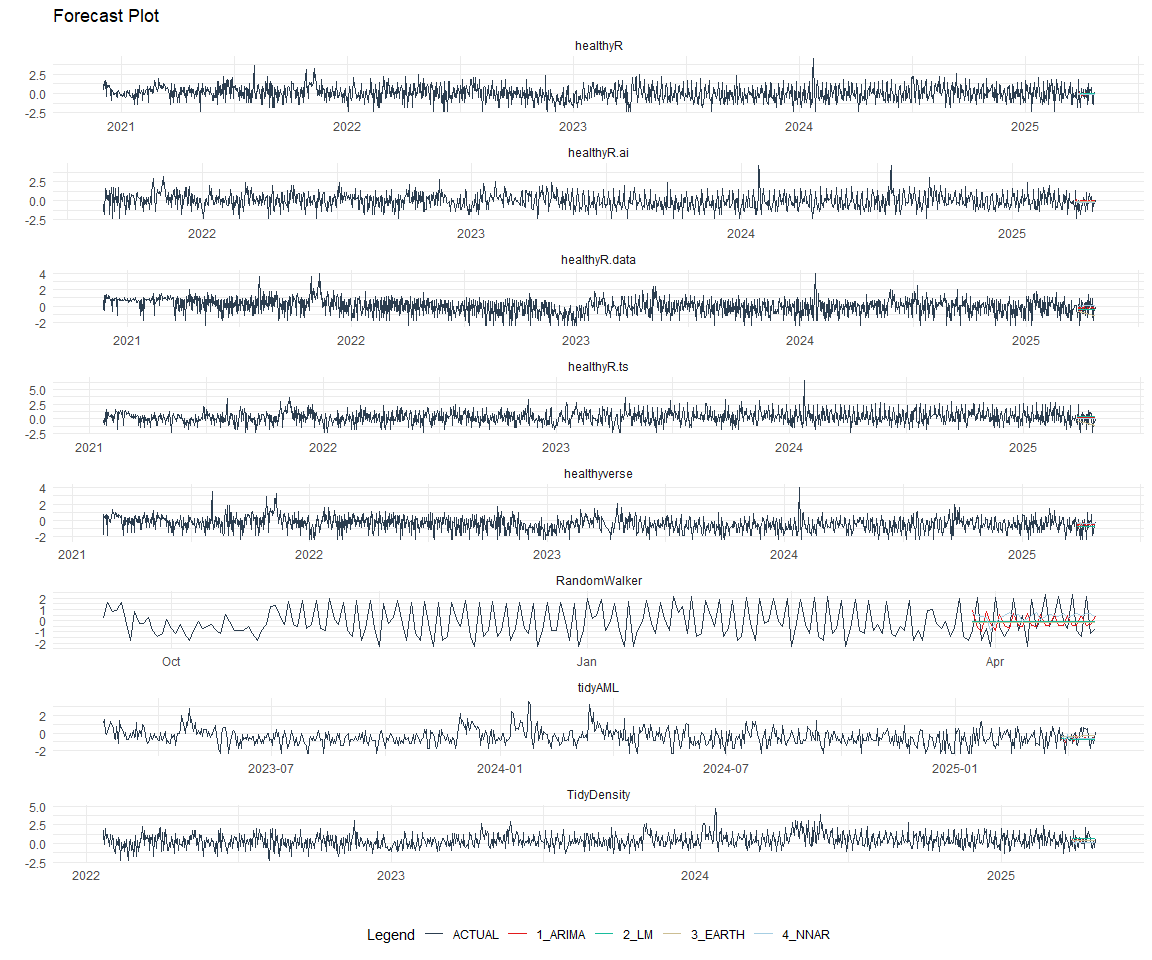

Plot Models

nested_modeltime_tbl %>%

extract_nested_test_forecast() %>%

group_by(package) %>%

filter_by_time(.date_var = .index, .start_date = max(.index) - 60) %>%

ungroup() %>%

plot_modeltime_forecast(

.interactive = FALSE,

.conf_interval_show = FALSE,

.facet_scales = "free"

) +

theme_minimal() +

facet_wrap(~ package, nrow = 3) +

theme(legend.position = "bottom")

Best Model

best_nested_modeltime_tbl <- nested_modeltime_tbl %>%

modeltime_nested_select_best(

metric = "rmse",

minimize = TRUE,

filter_test_forecasts = TRUE

)

best_nested_modeltime_tbl %>%

extract_nested_best_model_report()

# Nested Modeltime Table

# A tibble: 8 × 10

package .model_id .model_desc .type mae mape mase smape rmse rsq

<fct> <int> <chr> <chr> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 healthyR.d… 3 EARTH Test 0.687 124. 0.737 144. 0.846 3.06e-2

2 healthyR 2 LM Test 0.563 1026. 0.785 130. 0.813 1.04e-1

3 healthyR.ts 1 ARIMA Test 0.518 273. 0.613 150. 0.732 2.95e-2

4 healthyver… 3 EARTH Test 0.553 65.8 0.885 39.9 0.670 2.02e-1

5 healthyR.ai 1 ARIMA Test 0.406 171. 0.665 109. 0.660 3.12e-4

6 TidyDensity 4 NNAR Test 1.08 148. 0.670 162. 1.18 4.83e-2

7 tidyAML 2 LM Test 0.748 297. 0.706 142. 0.966 1.71e-1

8 RandomWalk… 1 ARIMA Test 0.688 90.1 0.457 106. 0.859 3.68e-1
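The selection rule can be reproduced by hand from the accuracy table: for each package, keep the model with the smallest test RMSE. A minimal dplyr sketch using the RMSE values reported above for two of the packages (the tibble `acc` is built here for illustration):

```r
library(dplyr)

# Test RMSE values copied from the accuracy table
acc <- tibble(
  package     = rep(c("healthyR.data", "healthyR.ts"), each = 4),
  .model_desc = rep(c("ARIMA", "LM", "EARTH", "NNAR"), times = 2),
  rmse        = c(0.8553255, 0.8784872, 0.8460786, 0.9420807,
                  0.7317030, 0.7873840, 0.8323433, 0.8201067)
)

# Lowest RMSE per package
best <- acc |>
  group_by(package) |>
  slice_min(rmse, n = 1) |>
  ungroup()

best
```

This picks EARTH for healthyR.data and ARIMA for healthyR.ts, matching the best model report.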

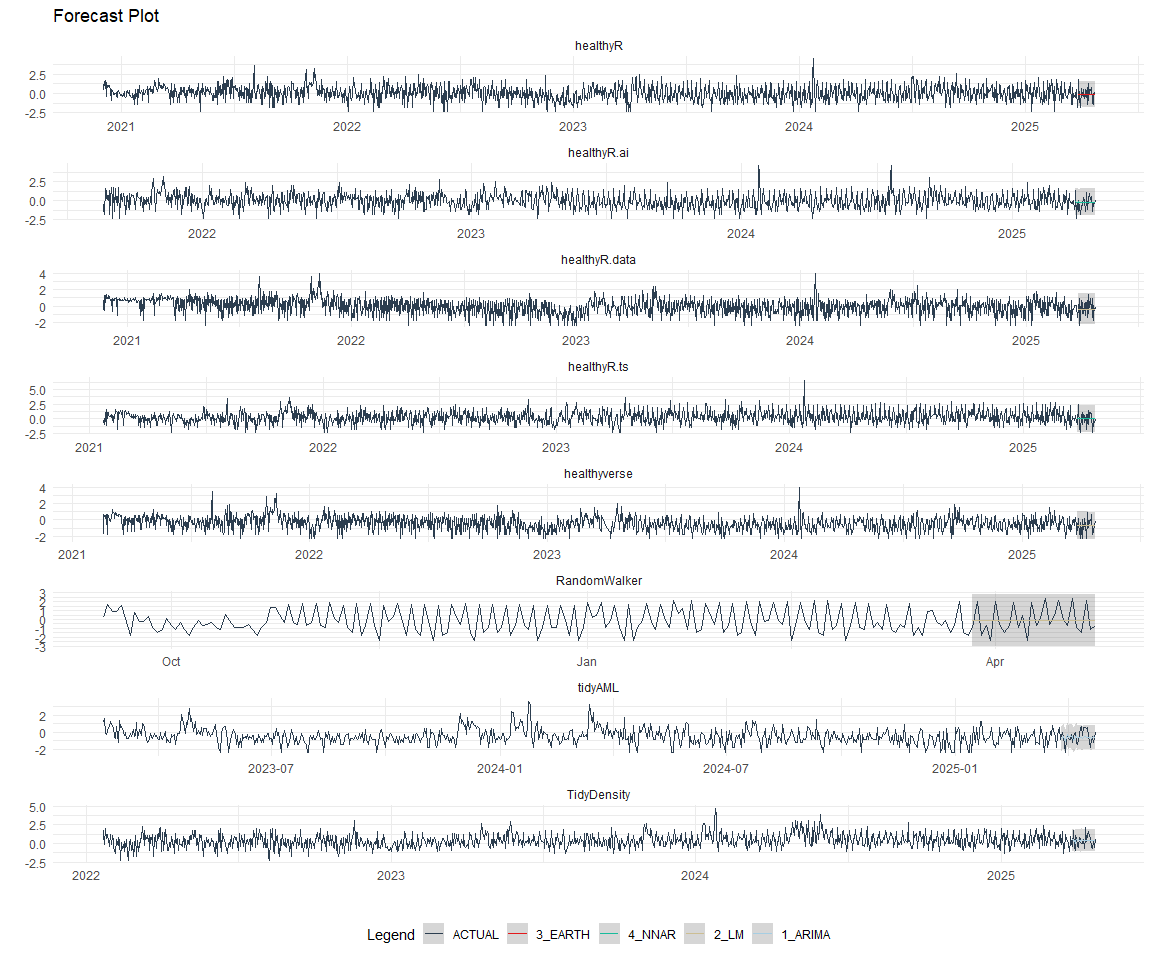

best_nested_modeltime_tbl %>%

extract_nested_test_forecast() %>%

#filter(!is.na(.model_id)) %>%

group_by(package) %>%

filter_by_time(.date_var = .index, .start_date = max(.index) - 60) %>%

ungroup() %>%

plot_modeltime_forecast(

.interactive = FALSE,

.conf_interval_alpha = 0.2,

.facet_scales = "free"

) +

facet_wrap(~ package, nrow = 3) +

theme_minimal() +

theme(legend.position = "bottom")

Refitting and Future Forecast

Now that we have the best models, we can make our future forecasts.

nested_modeltime_refit_tbl <- best_nested_modeltime_tbl %>%

modeltime_nested_refit(

control = control_nested_refit(verbose = TRUE)

)

nested_modeltime_refit_tbl

# Nested Modeltime Table

# A tibble: 8 × 5

package .actual_data .future_data .splits .modeltime_tables

<fct> <list> <list> <list> <list>

1 healthyR.data <tibble> <tibble> <split [1922|28]> <mdl_tm_t [1 × 5]>

2 healthyR <tibble> <tibble> <split [1916|28]> <mdl_tm_t [1 × 5]>

3 healthyR.ts <tibble> <tibble> <split [1852|28]> <mdl_tm_t [1 × 5]>

4 healthyverse <tibble> <tibble> <split [1795|28]> <mdl_tm_t [1 × 5]>

5 healthyR.ai <tibble> <tibble> <split [1658|28]> <mdl_tm_t [1 × 5]>

6 TidyDensity <tibble> <tibble> <split [1509|28]> <mdl_tm_t [1 × 5]>

7 tidyAML <tibble> <tibble> <split [1115|28]> <mdl_tm_t [1 × 5]>

8 RandomWalker <tibble> <tibble> <split [539|28]> <mdl_tm_t [1 × 5]>

nested_modeltime_refit_tbl %>%

extract_nested_future_forecast() %>%

group_by(package) %>%

mutate(across(.value:.conf_hi, .fns = ~ standard_inv_vec(

x = .,

mean = std_mean,

sd = std_sd

)$standard_inverse_value)) %>%

mutate(across(.value:.conf_hi, .fns = ~ liiv(

x = .,

limit_lower = limit_lower,

limit_upper = limit_upper,

offset = offset

)$rescaled_v)) %>%

filter_by_time(.date_var = .index, .start_date = max(.index) - 60) %>%

ungroup() %>%

plot_modeltime_forecast(

.interactive = FALSE,

.conf_interval_alpha = 0.2,

.facet_scales = "free"

) +

facet_wrap(~ package, nrow = 3) +

theme_minimal() +

theme(legend.position = "bottom")
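The back-transformation in the code above undoes the pre-processing in reverse order: standardization first, then the log-interval transform. The sketch below covers the standardization half in base R, with hypothetical helpers `standardize()` and `unstandardize()` and toy values. The inverse recovers the original values exactly only when it uses the same mean and standard deviation as the forward step, so the sketch shows both a matching and a mismatched case.

```r
# Toy values on the log-interval scale for two hypothetical series
a <- c(-2.0, -1.5, -1.8, -1.2)
b <- c(0.5, 1.0, 0.8, 1.4)

standardize   <- function(x) (x - mean(x)) / sd(x)
unstandardize <- function(z, mu, sigma) z * sigma + mu

# Forward step on series a alone
z_a <- standardize(a)

# Inverse with the parameters used in the forward step: exact round trip
back_own <- unstandardize(z_a, mean(a), sd(a))

# Inverse with parameters pooled across both series: values are shifted and rescaled
pooled      <- c(a, b)
back_pooled <- unstandardize(z_a, mean(pooled), sd(pooled))

rbind(original = a, own = back_own, pooled = back_pooled)
```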